We’re building more than just better video over here at Mux. We’re also building the teams, systems, and culture that help power online video for developers everywhere. Over the last year, we’ve invested heavily in our analytics function, and we’re excited to share a bit about that journey and what we’ve learned along the way.

Building an analytics team

Before I joined Mux, the company was focused on product-led growth with a fairly slim footprint and team. Analytics was incredibly scrappy: there was a sturdy foundation of data and a few folks who knew how to work with the available systems to get the data they needed for basic insights and reporting. That scrappy team included a data engineer, an analytics engineer, and a data architect. While there’s clearly a “walked into a bar” joke to be made here, this was the right group to get things started for us at Mux. Our architect is largely responsible for data modeling and for integrating our internal systems with our data lake, our data engineer is responsible for all third-party systems integrations with our data lake, and our analytics engineer is responsible for data warehouse operations more generally (including getting dbt up and running).

As we neared 100 employees and another fundraising round, it was time to build out an analytics function that could drive decision-making and optimization for the next stage of growth. That’s when I came in. My job was to build a team of people who would care about all that data and know how to put it to use. It wasn’t always easy, but we got there. Below, I’ll walk through 3 steps that helped us hire an awesome team of business analysts and data scientists.

Specialize and trade

Which roles you hire for first should depend a lot on what you’ve already got on the table and what you’re missing. Considering the fungibility of your team’s tools and skill sets will ultimately help you answer an important question: Could a generalist do what we need?

There are 2 general analytical ecosystems that every company needs to be aware of: marketing analytics and core product/onboarding analytics. While these domains are obviously learnable, they’re rife with their own increasingly specialized toolkits, jargon, and challenges. The right team needs experimentation chops and hard skills built through on-the-ground experience. For Mux, the right team at this stage had to be built with specialized expertise.

I had no such expertise. When our leadership asked for a cost-of-acquisition analysis on different ad campaigns with a last-touch attribution model, I was terrified—but the request helped me prioritize the first roles to hire on my team:

- Marketing analytics manager

- Product analytics manager

- Product analytics specialist

Now we just had to find those folks!
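To make that leadership request concrete: under a last-touch attribution model, each new customer is credited entirely to the last campaign they interacted with before signing up, and a campaign’s cost of acquisition is its spend divided by the customers credited to it. The Python below is a minimal sketch of that calculation, assuming hypothetical touchpoint records, signup times, and campaign spend. The `Touch` class, the `last_touch_cac` function, the campaign names, and all figures are illustrative only, not our actual data or pipeline.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Touch:
    """One marketing touchpoint (hypothetical schema): who, which campaign, when."""
    customer_id: str
    campaign: str
    at: datetime


def last_touch_cac(touches, signups, spend):
    """Last-touch attribution: credit each signup to the most recent campaign
    touch at or before the signup time, then compute spend per credited signup."""
    credited = Counter()
    for customer_id, signed_up_at in signups.items():
        prior = [t for t in touches
                 if t.customer_id == customer_id and t.at <= signed_up_at]
        if prior:  # customers with no prior touch stay unattributed
            last = max(prior, key=lambda t: t.at)
            credited[last.campaign] += 1
    # Campaigns with spend but no credited signups get an infinite CAC.
    return {
        campaign: cost / credited[campaign] if credited[campaign] else float("inf")
        for campaign, cost in spend.items()
    }


# Hypothetical example data, for illustration only.
touches = [
    Touch("a", "search", datetime(2022, 1, 1)),
    Touch("a", "social", datetime(2022, 1, 3)),
    Touch("b", "search", datetime(2022, 1, 2)),
    Touch("c", "social", datetime(2022, 1, 5)),  # after c's signup, so ignored
]
signups = {
    "a": datetime(2022, 1, 4),
    "b": datetime(2022, 1, 6),
    "c": datetime(2022, 1, 4),
}
spend = {"search": 1000.0, "social": 600.0, "video": 300.0}

print(last_touch_cac(touches, signups, spend))
# {'search': 1000.0, 'social': 600.0, 'video': inf}
```

The two judgment calls here (leaving customers with no prior touch unattributed, and reporting an infinite cost for campaigns that spent money but earned no credited signups) are exactly the kind of modeling decisions where a specialist’s experience matters. In a warehouse-centric stack, the same rule would typically be written in SQL rather than in memory.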

Hiring is hard; get help

Let me first say: what a good time to be a talented analyst on the job hunt. You know SQL? Can string together 3 sentences in a row? Heard of a t-test? When can you start? The corollary to that is: woof, what a tough time to be an analytics leader or hiring manager at a growth-stage startup.

There were weeks upon weeks of pounding LinkedIn. I had some great conversations with candidates, but more often than not, I heard crickets on the other side of my light-digital-employment-stalking. And then—eureka—it happened: we hired a dedicated business recruiter who was immediately tasked with helping her helpless colleague (ahem, me) build the team.

This recruiting partner identified candidates through sophisticated LinkedIn searches, set up email-based candidate campaigns, tested language in those campaigns, screened candidates, and held all the follow-up conversations. Within a month, we had a humming pipeline and were starting to push offers out to multiple analysts. Without that support, I’m sure I would still be silently screaming into the LinkedIn void.

Closing the deal

With offers out, we still had to convince these folks to join the team. Given the hot market, we were prepared for candidates to press hard or take competing offers. While there are myriad factors a candidate might consider in their decision, we took 3 steps that I think helped our candidates say yes:

- Be authentic: The key to convincing a candidate to join the team is to understand their hopes, dreams, motivators, and demotivators. Starting from that position, it’s easier for the candidate to understand how the role and opportunity can fit those goals; if there’s not a match, then it’s best for both sides to shut the door rather than go through it.

- Be concrete: We shared clear, specific projects that the analysts would own once they ramped up, including the core problems we wanted to solve (e.g., building a lead-scoring framework and model and rolling it out with go-to-market teams, customer-level profitability modeling, and cost-of-acquisition and lifetime-value modeling).

- Be transparent: We tried to be explicit with each candidate about what the day-to-day work would actually entail. This meant highlighting that despite having an analytics dev team, they would have to do a significant amount of up-front work on foundational data and reporting until we could transition to more “pure” strategic analytics in the coming months. (Note: I still can’t stress enough how important it is to have a dedicated analytics engineer so analysts can focus on their core competencies!)

Building an analytics stack

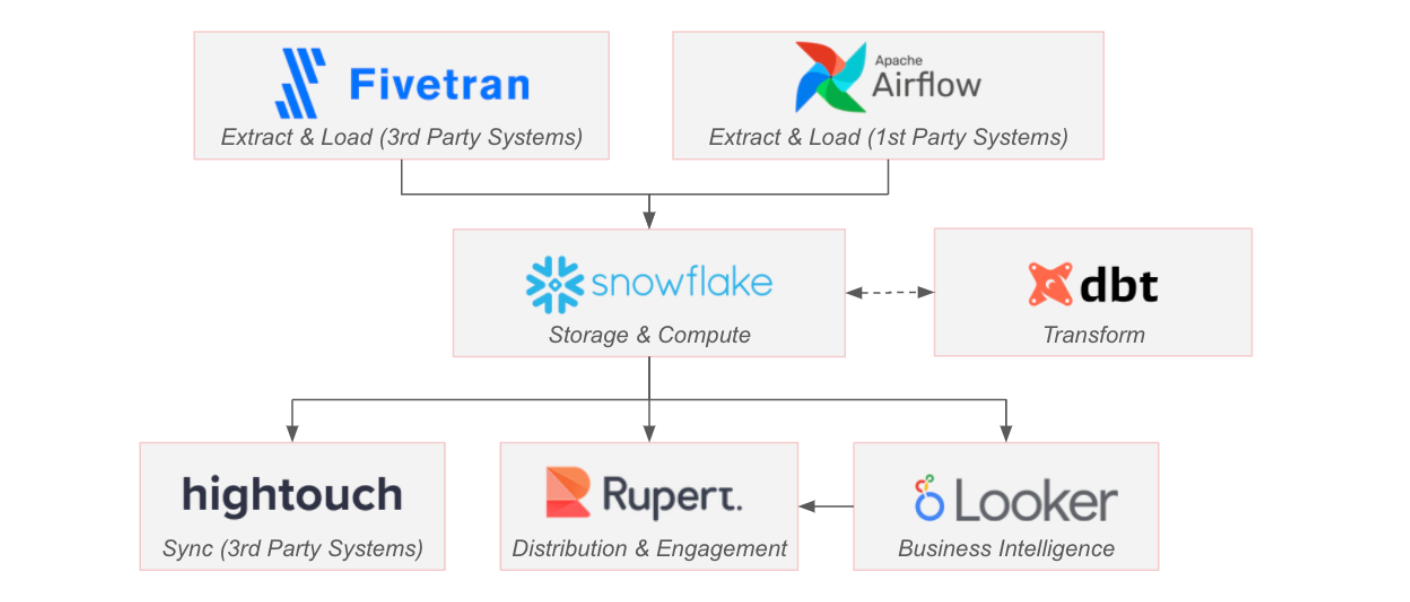

In parallel with building out the business analytics team, our first task was to set up analytics infrastructure that could scale with the business and its ever-evolving needs. While we already had a robust Airflow infrastructure to load internal product data into a database of our choice (and had previously been using Metabase on Amazon RDS), we had a lot of optionality in building out the rest of the ecosystem.

In retrospect, I’m lucky that the team took a chance on a Big Tech alum like me; after nearly 8 years working in analytics at Google, I knew very little about the increasingly specialized and diverse set of players that make up the modern analytics stack for the rest of the world. Fortunately, we had a lot going for us:

- The fragmented constellation of providers has an increasingly clear set of winners (e.g., dbt) that serve as the nucleus of a mature stack.

- Beyond that nucleus, there is a whole opt-in solar system of purpose-built tools that offer plug-and-play functionality with the core providers.

- Ready-made integrations across tools and services (e.g., one-click Salesforce-to-Snowflake integrations) made our big-data dreams attainable even with a small team.

- The broader analytics community is incredibly welcoming and full of practitioners who live and breathe this world and actively share their opinions on Medium, over Slack, at industry conferences, and beyond.

The modern data stack @Mux

And so, we got to work. We spent time with software vendors and other advisors, learned about the ecosystem, evaluated providers against our needs (and budget!), and quickly started implementing and integrating services.

While we are continually evaluating other services (data ops, data catalogs, metrics layers, etc.), our modern stack is coming into focus with an ecosystem built around dbt, Snowflake, and Looker. This nucleus lets us manage our data model, permissions, and documentation and ultimately get data into end users’ hands, with an emphasis on self-service exploration. Orbiting around the nucleus, we also have Fivetran and Hightouch, which help us seamlessly move data into and out of third-party systems.

Finally, we are very excited about our work with Rupert. Rupert will help us get the most out of all this investment by making business intelligence, analytics, and data more approachable for our business stakeholders.

It’s a big world out there

Of course, there’s also a whole ecosystem of analytics-adjacent systems that plug into this world (e.g., data enrichment services, marketing and funnel analytics tracking tools, experimentation platforms, etc., etc., etc.)—and of course, there’s a lot more that goes into the success of a stack beyond signing a contract!

We could (and maybe we will?) share other sagas about our data model (e.g., implementing Type 2 slowly changing dimension, or SCD2, tracking and a star schema around core entities), our development ethos between LookML and dbt, our dashboarding principles (ink:information), and LookML best practices…but we’ll save those stories for another day.

Building an analytics culture

So we’ve got big data, cool tools, and fun dashboards. Whoopty doo. All that work is vanity if you don’t run the last mile: using all that data to actually make better decisions as an organization. Building a culture around data is the real job of an analytics leader, and that means getting decision makers to look at the dashboard you built for them; it means injecting data into operational workflows for go-to-market teams; and it means understanding what success and failure look like.

Like our work on the data warehouse and business intelligence layers, our efforts to build a data-driven culture will never be finished—but we have a few principles and projects we are tackling in 2022 that will go a long way to help us scale:

- Make data a habit: We are standing up a recurring metrics review forum that dives deep into our leading indicators across the business. This forum is critical for keeping company leaders’ finger on the pulse of the business, and as an added bonus for our team, it also means leaders from across the company engage with our analytics infrastructure on a recurring basis and start building that data muscle.

- Inject data into processes and playbooks: Most importantly, our teams are adopting metrics-based OKRs that will serve as a forcing function to measure and manage our team-level priorities and goals; we’re also building data-driven playbooks for go-to-market teams that live in their tools (e.g., Salesforce). Everyone becomes an analyst without even realizing it!

- Push, don’t pull: We’re not waiting for anybody to ask us for data; instead, we’re using Rupert and Looker to make source-of-truth business intelligence assets easily searchable and to proactively deliver relevant information to interested parties where they work, communicate, and collaborate (e.g., sales portfolios sent every Monday morning to account executives and sales development reps, top-line metrics pushed to leaders weekly, and rules-based alerts).

- (Over)communicate, (over)train, (over)communicate (again): To bring internal users into our world, we lead regular training sessions on our Looker instance, hold weekly office hours, and send weekly Snowflake and Looker feature updates to our users on Slack and by email. We also review our data infrastructure and analytics roadmap with the leadership team each quarter to align priorities, which can then be communicated more broadly.

If we’re able to hit these points, I’m excited to see what our team and our company will look like in a year!

Your thoughts?

If you’re going through a similar journey and want to talk about the steps your organization is taking, find us on social media or in the Locally Optimistic and dbt Slack communities. And while we’re not hiring for analytics roles right now, there are a ton of exciting open roles across Mux. Join us!