Low-latency streaming is video streaming with a latency of around five to twelve seconds. To the viewer, this might feel like real-time video, but there is actually a short delay in delivering the video content to the screen.

Building video is hard, but creating low-latency video has historically been even harder. Because it required hacking together proprietary systems and testing video across players and regions, low-latency streaming was expensive and complex. Today, APIs like the Mux Live Streaming API allow you to easily build interactive live streaming into your applications.

In this comprehensive guide, we’ll cover:

- What is low-latency and low-latency streaming?

- Popular low-latency streaming protocols

- Why should you care about latency?

- 3 examples of low-latency video streaming

- Latency spectrum: Terms, definitions, technologies, and use cases

- What is real-time video streaming?

- Low-latency video streaming vs. Real-time video streaming: What’s the difference?

- Low-latency streaming FAQs

What is low-latency and low-latency streaming?

Latency is essentially a time delay: the length of time between capturing an action with a camera and displaying it on a viewer’s screen. Low-latency streaming refers to a delay of around five to twelve seconds, which is low enough for viewers to feel like they’re interacting with a video in real time.

Low-latency industry terms: Glass-to-glass vs. wall-clock time

Before we go into more detail in the rest of this article, let’s define some common industry terms that are often used to describe latency.

- Glass-to-glass latency: Glass-to-glass latency is also sometimes referred to as end-to-end latency. This latency is defined as the time lag between when a camera captures an action and when that action reaches a viewer’s device.

- Wall-clock time: Wall-clock time is also sometimes referred to as “real-world time.” If you have a clock on the wall where you are capturing video content, this would be the time on that clock.

Think of it this way: wall-clock time is the time an action occurs in real life, while glass-to-glass latency is the measurement of time between an action happening in real life and when it shows up in a video on a viewer’s device.

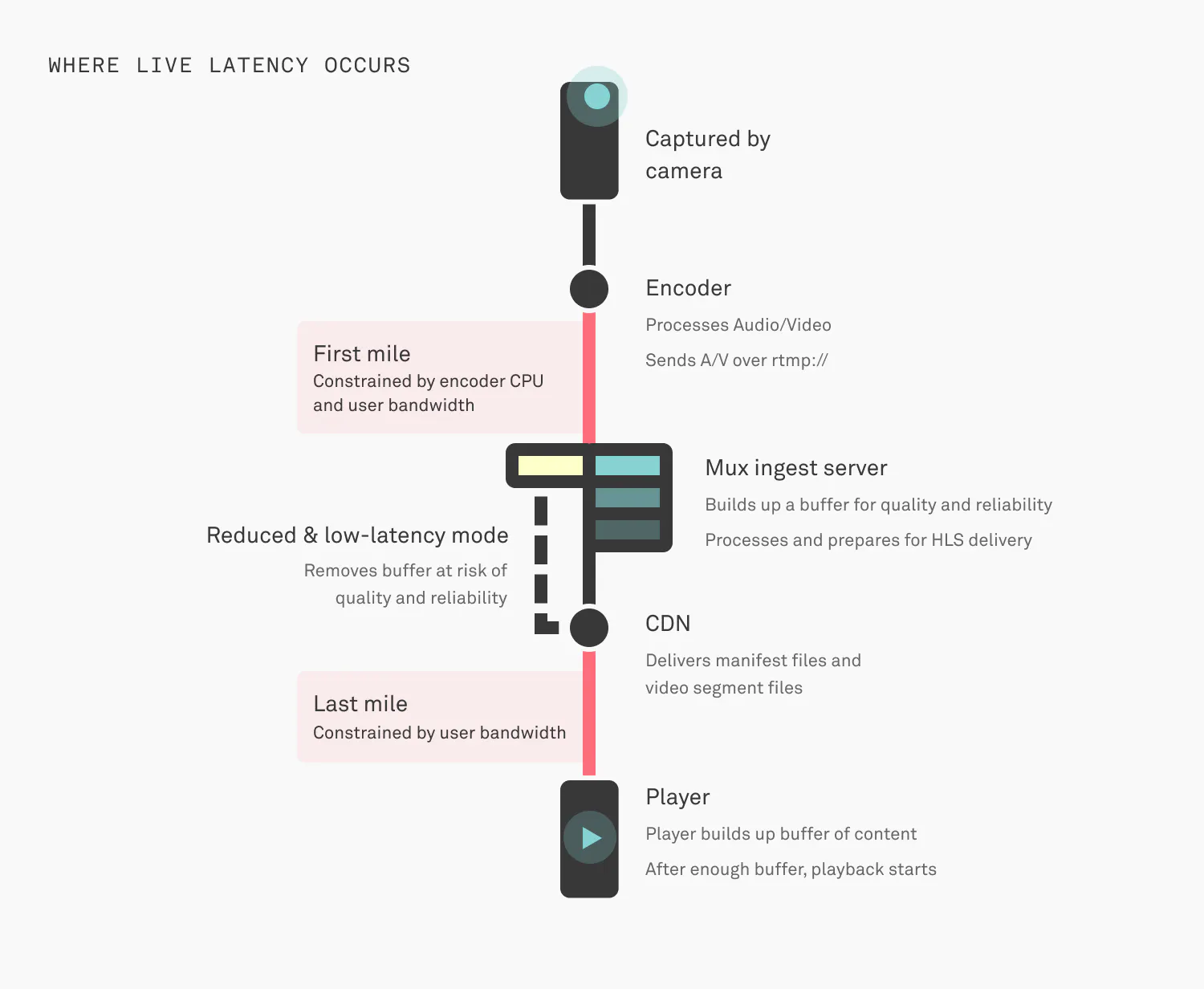
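
To make the two terms concrete, here is a minimal Python sketch that computes glass-to-glass latency from two wall-clock timestamps. The function name and the sample timestamps are illustrative assumptions, and the sketch assumes the camera and the viewer's device share a synchronized clock, which is often not the case in practice.

```python
from datetime import datetime, timedelta, timezone


def glass_to_glass_latency(captured_at: datetime, displayed_at: datetime) -> timedelta:
    """Return the delay between capture and display, both given in wall-clock time."""
    if displayed_at < captured_at:
        # A negative delay means the two clocks are not synchronized.
        raise ValueError("display time precedes capture time")
    return displayed_at - captured_at


# Hypothetical timestamps: the action happens at 12:00:00 and is shown at 12:00:07.
captured_at = datetime(2024, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
displayed_at = datetime(2024, 1, 1, 12, 0, 7, tzinfo=timezone.utc)

latency = glass_to_glass_latency(captured_at, displayed_at)
print(latency.total_seconds())  # 7.0
```

A seven-second result like this one would fall inside the low-latency range described above.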

Where does latency on live streams come from?

Latency can be introduced at any stage of a live stream. Here’s how each stage of the live stream delivery process contributes:

- When a live stream is processed by an encoder - If the computer running the encoder is running out of CPU, this process can get behind and start lagging, causing latency.

- When a stream is sent to an RTMP ingest server - This server ingests the video content in real time. This part is called the “first mile.” It happens over the internet, often on consumer or cellular network connections, so problems like TCP packet loss and random network disconnects are common.

- When the ingest server decodes and encodes - Assuming all the content is traveling over the internet fast enough, the encoder on the other end needs to keep up and have enough CPU available to package up segments of video as they come in. The encoder has to ingest video, build up a buffer of content, and then start decoding, processing, and encoding for HLS delivery.

- When manifest files and segments of video are delivered - Files are created and delivered over HTTP through multiple CDNs to reach end users. Each file becomes available only after the entire segment's worth of data is ready. This part also happens over the internet, where the same risks around packet loss and network congestion apply. Network issues are especially a factor in the last mile of delivery to the end user.

- When video is decoded and played on the client - When video makes it all the way to the client, the player has to decode and play back the video. Players do not play each segment on the screen as they receive it. They keep a buffer of playable video in memory, which also contributes to the glass-to-glass latency experienced by the end user.
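
The stages above can be thought of as a latency budget. The Python sketch below adds up one delay per stage and finds the largest contributor. The stage names follow the list above, but every number is a hypothetical placeholder chosen for illustration, not a measurement.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Stage:
    name: str
    delay_seconds: float


# Hypothetical per-stage delays, for illustration only.
PIPELINE = [
    Stage("encoder", 0.5),
    Stage("first mile (RTMP ingest)", 0.5),
    Stage("ingest decode, encode, and packaging", 4.0),
    Stage("CDN delivery (last mile)", 1.0),
    Stage("player buffer and decode", 6.0),
]


def total_latency(stages: List[Stage]) -> float:
    """Glass-to-glass latency modeled as the sum of every stage's delay."""
    return sum(stage.delay_seconds for stage in stages)


def largest_contributor(stages: List[Stage]) -> Stage:
    """Return the stage that adds the most delay."""
    return max(stages, key=lambda stage: stage.delay_seconds)


print(total_latency(PIPELINE))             # 12.0
print(largest_contributor(PIPELINE).name)  # player buffer and decode
```

Modeling latency this way makes it easier to see which stage is worth optimizing first.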

Popular low-latency streaming protocols

The two most popular low-latency streaming protocols are low-latency HLS and low-latency DASH.

Low-latency HLS (LL-HLS)

HLS is probably the most popular streaming protocol because of its stream reliability, particularly for live experiences. HLS originally prioritized stream reliability over latency until Apple released Low-Latency HTTP Live Streaming (LL-HLS), also known as Apple Low Latency HLS (ALHLS). It is now seen as the standard for low-latency live streaming across devices.

According to Apple, LL-HLS “provides a parallel channel for distributing media at the live edge of the Media Playlist, which divides the media into a larger number of smaller files…called HLS Partial Segments.” Players can request these smaller files as soon as they are published instead of waiting for a full segment, which reduces latency. The server can also remove partial segments from the Media Playlist once they pass a certain threshold.
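
As a rough illustration, partial segments appear in a Media Playlist as `EXT-X-PART` tags. The Python sketch below pulls them out of a small hand-written playlist. The playlist contents, file names, and the `parse_partial_segments` helper are made up for this example, and the parser only handles the simple attribute forms shown here rather than the full HLS grammar.

```python
import re
from typing import List, NamedTuple

# Hand-written sample playlist; URIs and durations are illustrative only.
SAMPLE_PLAYLIST = """\
#EXTM3U
#EXT-X-VERSION:9
#EXT-X-TARGETDURATION:4
#EXT-X-PART-INF:PART-TARGET=1.0
#EXT-X-MEDIA-SEQUENCE:100
#EXTINF:4.0,
segment100.mp4
#EXT-X-PART:DURATION=1.0,URI="segment101.part0.mp4",INDEPENDENT=YES
#EXT-X-PART:DURATION=1.0,URI="segment101.part1.mp4"
"""

# Matches KEY=value or KEY="quoted value" pairs in a tag's attribute list.
ATTRIBUTE = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^,]*)')


class PartialSegment(NamedTuple):
    uri: str
    duration: float


def parse_partial_segments(playlist: str) -> List[PartialSegment]:
    """Collect every EXT-X-PART entry in the order it appears in the playlist."""
    parts = []
    for line in playlist.splitlines():
        if not line.startswith("#EXT-X-PART:"):
            continue  # skips EXT-X-PART-INF, full segments, and other tags
        attribute_text = line.split(":", 1)[1]
        attrs = {key: value.strip('"') for key, value in ATTRIBUTE.findall(attribute_text)}
        parts.append(PartialSegment(attrs["URI"], float(attrs["DURATION"])))
    return parts


parts = parse_partial_segments(SAMPLE_PLAYLIST)
for part in parts:
    print(part.uri, part.duration)
```

In this sample, the two one-second parts of segment 101 are available to a player before the full four-second segment has been completed.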

Low-latency DASH (LL-DASH)

DASH (Dynamic Adaptive Streaming over HTTP) was developed by MPEG. Low-latency DASH (LL-DASH) uses chunked encoding, which means that videos are broken down into individual chunks. Because these chunks aren’t reliant on each other, one chunk can play before another is finished. This reduces latency across the video experience.

Read more about the differences between HLS and DASH

Why should you care about latency? Why low latency is important

Every popular social media platform has a “live” feature nowadays, from Facebook Live to Instagram Live to LinkedIn Live. If you’re familiar with these platforms, you probably understand why interactive video is so popular. It’s engaging, personal, and a powerful way to build online communities.

Latency is important because viewers expect “real-time”—or almost real time—video experiences within their favorite applications. This is especially important for experiences where the audience is interacting with a live stream, like in a live chat or live polling feature. As developers launch new apps, low-latency live-streaming is an essential feature to compete with established platforms.

Read more about examples of low latency and why you should care.

3 examples of low-latency video streaming use cases that can be built with Mux

Low-latency streaming allows brands and creators to build interactive digital experiences. This has transformed how people interact with video on a day-to-day basis. Some examples of low-latency video streaming are:

- Streamed exercise classes: Live exercise classes by brands like Peloton and Tonal have changed the fitness game. Instructors can interact with remote participants, while participants can track their stats and their rank on shared leaderboards.

- Social streaming platforms: Media platforms like Twitch and TikTok Live allow creators to interact with their audiences in real time through comments and reactions. These platforms also enable use cases like live shopping, which viewers can join without leaving home.

- Interactive shopping: Live streaming is now becoming synonymous with online shopping. Auction sites like Whatnot have live bidding features where viewers can bid on exclusive items. TikTok uses live shopping ads as part of its TikTok Shop feature, allowing brands to get in front of new consumers with engaging video advertising.

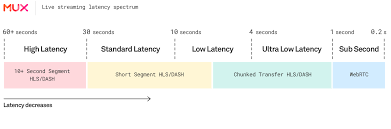

Latency spectrum: Terms, definitions, technologies, and use cases

There is some debate around terminology for different latencies in the live streaming sector. After our research at Mux and inspiration from Will Law’s 2018 talk at IBC Amsterdam, this is how we define the following terms:

High Latency

- Latency: Over 30 seconds

- Technologies: HLS, DASH, Smooth - 10+ second segments

- Use Cases: Live streams where latency doesn’t matter

Standard Latency

- Latency: 30 to 10 seconds

- Technologies: Short segment HLS, DASH - 6 to 2 second segments

- Use Cases: Simulcast where there’s no desire to be ahead of over-the-air broadcasting

Low Latency

- Latency: 10 to 4 seconds

- Technologies: Very short segment HLS, DASH - 2 to 1 second segments OR Chunked Transfer HLS / DASH - LHLS / CMAF

- Use Cases: UGC, Live experiences, Auctions, Gambling

Ultra-Low Latency

- Latency: 4 to 1 seconds

- Technologies: Chunked Transfer HLS / DASH - LHLS / CMAF

- Use Cases: UGC, Live experiences, Auctions, Gambling

Sub-Second Latency

- Latency: Less than 1 second

- Technologies: WebRTC, Proprietary solutions

- Use Cases: Video Conferencing, VOIP etc.
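
The spectrum above can be expressed as a small lookup. This Python sketch maps a measured latency to one of the five tiers. Because adjacent ranges share their endpoints (for example, 10 seconds appears in both Standard and Low), the sketch assumes a shared boundary belongs to the higher-latency tier, with 30 seconds counted as Standard since High is defined as over 30. That tie-breaking rule and the function name are assumptions made for this example.

```python
def classify_latency(seconds: float) -> str:
    """Map a glass-to-glass latency in seconds to a tier on the latency spectrum."""
    if seconds < 0:
        raise ValueError("latency cannot be negative")
    if seconds > 30:
        return "High Latency"
    if seconds >= 10:
        return "Standard Latency"
    if seconds >= 4:
        return "Low Latency"
    if seconds >= 1:
        return "Ultra-Low Latency"
    return "Sub-Second Latency"


for sample in (45, 12, 5, 2, 0.2):
    print(sample, "->", classify_latency(sample))
```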

What is real-time video streaming?

Real-time video streaming refers to video with sub-second latency (less than one second).

Real-time video streaming is important for contexts where even a small amount of latency (0.2 seconds) can cause big disruptions in the experience, such as video conferencing applications like Zoom, Google Meet, or FaceTime. For this reason, real-time protocols and applications are designed to compromise on video quality in order to keep delay to a minimum.

Low-latency video streaming vs. real-time video streaming: What’s the difference?

While low-latency video streaming generally refers to latency of around five to twelve seconds, real-time video streaming refers to latency of less than one second.

As you can see in the graphic above, these terms relate to a latency spectrum. Although some people group low-latency, ultra-low latency, and sub-second latency together, these terms actually refer to different latency ranges, which translate to different use cases. For example, while low-latency streaming might work for an experience like online gambling, it wouldn’t be ideal for a video conferencing application.

Build low-latency live streaming with Mux

The Mux API makes it easy to build low-latency live streaming, allowing developers to achieve a latency as low as five seconds. Mux supports Apple’s LL-HLS open standard at scale, which means video works on any device with a compliant player, with no additional plug-ins or proprietary players required.
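
As a hedged sketch of what this can look like, the Python below builds (but does not send) a request to create a live stream with low latency enabled. The endpoint URL, the `latency_mode` and `playback_policy` fields, and the use of HTTP Basic auth with an access token ID and secret reflect our understanding of the Mux Live Streaming API and should be checked against the current API reference. The helper name and the placeholder credentials are hypothetical.

```python
import base64
import json
import urllib.request

# Assumed endpoint for creating live streams; verify against the Mux API reference.
MUX_LIVE_STREAMS_URL = "https://api.mux.com/video/v1/live-streams"


def build_create_live_stream_request(token_id: str, token_secret: str) -> urllib.request.Request:
    """Build a POST request that asks for a low-latency live stream (not sent here)."""
    payload = {
        "latency_mode": "low",          # assumed field name and value
        "playback_policy": ["public"],  # assumed field name and value
    }
    credentials = base64.b64encode(f"{token_id}:{token_secret}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        MUX_LIVE_STREAMS_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Basic {credentials}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder credentials; replace with a real access token before sending.
request = build_create_live_stream_request("YOUR_TOKEN_ID", "YOUR_TOKEN_SECRET")
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would send it. That step is left out here so the sketch runs without credentials or network access.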

Low-latency streaming FAQs

What causes latency in live streams?

Latency is introduced at multiple stages: when the encoder processes the stream, during transmission to the ingest server (the "first mile"), when the server decodes and re-encodes for delivery, during CDN distribution to viewers (the "last mile"), and finally when the player buffers and decodes video on the viewer's device. Network conditions, CPU availability, and buffering strategies all contribute to the total glass-to-glass latency.

What's the difference between low-latency and ultra-low latency streaming?

Low-latency streaming typically achieves 10 to 4 seconds of delay using very short segment HLS or DASH, or chunked transfer protocols. Ultra-low latency reduces this to 4 to 1 seconds using chunked transfer HLS/DASH technologies like LHLS or CMAF. The choice depends on your use case—low-latency works well for most interactive experiences, while ultra-low latency is critical for applications like live auctions or gambling.

Is low-latency streaming the same as real-time streaming?

No. Low-latency streaming refers to delays of around 5 to 12 seconds, while real-time streaming (also called sub-second latency) has less than one second of delay. Real-time streaming uses technologies like WebRTC and is essential for video conferencing, but often compromises video quality to achieve minimal latency. Low-latency streaming provides a better balance of quality and interactivity for most live streaming applications.

Which devices and browsers support LL-HLS?

LL-HLS (Low-Latency HLS) is an open standard developed by Apple that works on any device with a compliant video player—no additional plug-ins or proprietary players required. This includes modern browsers, iOS devices, Android devices, and smart TVs. The broad compatibility makes LL-HLS the current standard for low-latency streaming across platforms.

How much does low-latency streaming cost compared to standard streaming?

Low-latency streaming does require more computational resources for encoding and can generate more CDN requests due to shorter segments, but modern video infrastructure providers have optimized these costs significantly. Many providers offer usage-based pricing where you only pay for what you stream, making low-latency accessible without requiring expensive proprietary systems.

Can I reduce latency on an existing live stream?

Yes, but it requires changes to your streaming setup. You'll need to use shorter segment durations or implement chunked transfer protocols like LL-HLS, ensure your encoder and CDN support these protocols, and configure your video player to minimize buffering. The exact approach depends on your current infrastructure and target latency.
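
To see why segment duration and player buffering matter, the Python sketch below estimates the delay added by the player alone. It assumes a simplified model in which the player waits for a fixed number of segments (or partial segments) before starting playback. The three-unit buffer and the durations are illustrative assumptions, not settings from any particular player.

```python
def estimated_player_delay(unit_seconds: float, buffered_units: int) -> float:
    """Delay added by a player that buffers a fixed number of segments or parts."""
    if unit_seconds <= 0 or buffered_units < 0:
        raise ValueError("duration must be positive and buffer count non-negative")
    return unit_seconds * buffered_units


# Hypothetical configurations, each buffering three units before playback starts.
configurations = {
    "6-second segments": 6.0,
    "2-second segments": 2.0,
    "1-second partial segments": 1.0,
}

for label, unit_seconds in configurations.items():
    print(label, "->", estimated_player_delay(unit_seconds, 3), "seconds")
```

Under this model, moving from 6-second segments to 1-second partial segments cuts the player's share of the delay from 18 seconds to 3 seconds.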

Read our guide to learn how to reduce live stream latency.