On May 24th of this year, we held our first customer conference — The Mux Informational, or TMI. Many of those who joined us online have asked how we produced and streamed such an amazing conference. This blog post will give you a little peek behind the curtains and walk you through the details of how we put TMI together.

What we were up against

The TMI production was limited by a number of constraints. One of our first considerations was location. We had chosen The Pearl in San Francisco as the venue for the event several months earlier. Then COVID did its COVID thing in the Bay Area, so with about three weeks to go, we pivoted TMI from an in-person event with some online viewers to an online event (with a live studio audience of Mux team members and invited guests). We loved the venue, though, so we decided to stick with The Pearl for the online production. We worked with an amazing events partner, Magnify Communications, who helped us communicate the pivot to all of our vendors and manage the change as smoothly as possible. We shifted the layout and design of The Pearl’s space from an in-person conference setup to a makeshift studio. The design of the “set,” including the faux wood backdrop, the plants (yes, they were real, and yes, they were rented), and the retro furniture, all worked together to make the conference talks look great on camera.

Beyond that, the venue required us to use their preferred audio/video vendor. That meant we couldn’t bring in any of our own audio or video equipment; instead, we needed to work closely with the A/V vendor to make sure everything worked as planned. We also had to use the venue’s existing internet infrastructure. While these circumstances certainly weren’t ideal, especially with the time crunch, we did our best to plan ahead for any contingencies.

With the pivot to an online conference, the TMI team also decided to hire a show caller to direct the production. This person would be responsible for telling the A/V team which video input to switch to, when to fade the music in and out, what was coming up next, and so on. With the help of the show caller and our communications team, we put together a very detailed run-of-show document: essentially a giant spreadsheet of every action that needed to happen, whether that was cutting to a different graphic, fading in music, switching mics, or playing a video. During the event, the show caller worked from that run-of-show document, directing the A/V team on exactly what to do and when. The show caller, the rest of the A/V team, and I were all on a private wired communication system, which let us talk to each other as the show progressed. One of the strangest things for us during the event was hearing the show caller in one ear, the audio from the live stream in the other, and the actual audio at the venue sneaking in around our headphones. It was pretty weird.

The last big hurdle? Time management. We booked the venue for Monday and Tuesday, so we used Monday to build out the space, oversee the A/V vendor as they set up their equipment, and do a very quick run-through with the speakers. This was really important so that our speakers knew their positions and, just as importantly, so that we knew their demos would work on the day. That testing also let us confirm that the outbound stream worked (important, one might say, for a streaming event). That left Tuesday for running the event, enjoying a happy hour on the rooftop afterward, and then tearing everything back down.

Behind the curtain

Here you can see the whole thing, including three cameras: one on the speaker behind the podium, one on the speaker standing in front of the chair, and a wide-angle shot just in case. We also provided two large confidence monitors for the speakers: one with their presentation slide and one with the speaker notes for that slide.

The cameras, mics, slides, and other graphics were all piped into a vMix station operated by the A/V vendor. This vMix station was responsible for switching between the camera and graphics feeds, mixing the audio and video, and streaming out the resulting video feed. (We’ll talk more about the streaming below.) The on-site video engineer also pre-racked our pre-recorded videos, including the pre-conference video and the interstitials played during breaks.

How we streamed it

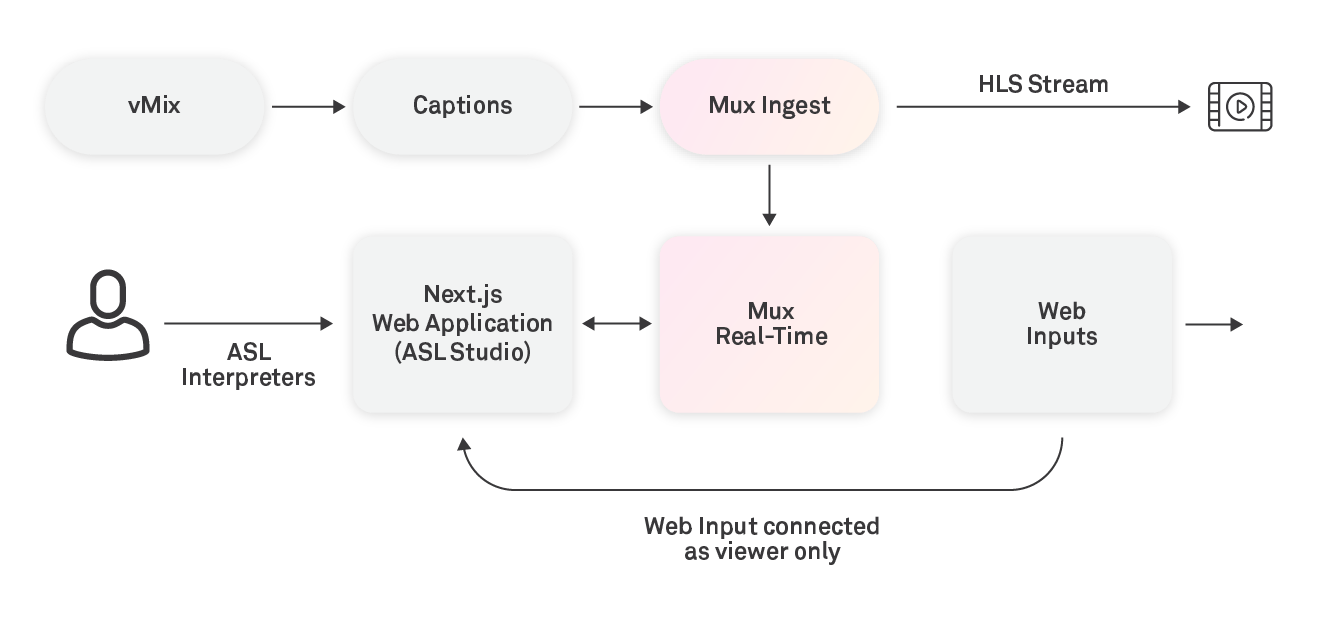

Now that you’ve seen everything that went into the A/V setup at the venue, you might be tempted to think that the streaming portion was easy. After all, Mux *does* make it easy to stream video online. For reasons that will become abundantly clear below, it wasn’t quite so simple. We wanted to add live captions to make the conference easier to follow, especially for viewers who are hard of hearing or for whom English is not a first language. The streaming setup looked like this:

The stream coming out of vMix went to a captioning inserter in the cloud, where human-generated in-loop CEA-608 captions were added. This captioned stream was then sent into Mux’s streaming infrastructure. From there, we split the stream in two: one copy went to the online event platform (shout-out to Maestro for the event and Tito for the ticketing), while the other fed our new Mux Real-Time Video and Web Inputs (announced at TMI!), which produced a secondary stream with an American Sign Language interpreter signing the conference.
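For readers who want to try the captioning piece themselves, here is a minimal TypeScript sketch of a request body for creating a Mux live stream that surfaces CEA-608 captions already embedded in the incoming feed, as the cloud captioning inserter did for TMI. It assumes the `playback_policy` and `embedded_subtitles` fields of the public Mux Video live-stream API; the helper name `buildCaptionedLiveStream` and its defaults are hypothetical, the post doesn't say how TMI's stream was actually configured, and the field names are worth checking against the current Mux API reference.

```typescript
// Minimal sketch: build the JSON body for creating a Mux live stream whose
// ingest already carries CEA-608 captions (e.g. from a cloud caption inserter).
// Field names follow the public Mux Video API; verify against the current docs.

type CaptionChannel = "cc1" | "cc2" | "cc3" | "cc4";

interface EmbeddedSubtitle {
  name: string;                     // label shown in the player's captions menu
  language_code: string;            // BCP 47 language tag, e.g. "en"
  language_channel: CaptionChannel; // which CEA-608 channel carries the captions
}

interface LiveStreamRequest {
  playback_policy: string[];
  embedded_subtitles: EmbeddedSubtitle[];
}

// Hypothetical helper: the name and default values are illustrative only.
function buildCaptionedLiveStream(
  name = "English CC",
  languageCode = "en",
  channel: CaptionChannel = "cc1",
): LiveStreamRequest {
  return {
    // "public" lets anyone with the playback ID watch the HLS stream
    playback_policy: ["public"],
    // Describe the in-band 608 captions so Mux can advertise them to players
    embedded_subtitles: [
      { name, language_code: languageCode, language_channel: channel },
    ],
  };
}

console.log(JSON.stringify(buildCaptionedLiveStream(), null, 2));
```

You would POST the resulting JSON to `https://api.mux.com/video/v1/live-streams` using HTTP Basic auth with an access token ID and secret. CC1 is the primary CEA-608 caption channel, which is why the sketch defaults to it.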

Attendees could watch both the primary and secondary streams online via Maestro. But instead of using the default Maestro video player, we wired it up to play both streams via Mux Player, which also made its debut at TMI. Surprise, surprise!
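To illustrate how one page can carry both feeds, the TypeScript sketch below renders a `<mux-player>` element for each stream and builds the standard `https://stream.mux.com/{playbackId}.m3u8` HLS URL for a public playback ID. It assumes the Mux Player web component (`@mux/mux-player`) is loaded on the page; the playback IDs and helper names are hypothetical placeholders, and the post doesn't describe how the Maestro integration was actually wired.

```typescript
// Minimal sketch: emit <mux-player> markup for the main and ASL-interpreted streams.
// Assumes the @mux/mux-player web component is already loaded on the page.

interface StreamInfo {
  playbackId: string; // hypothetical placeholder, not a real TMI playback ID
  title: string;
}

// Escape characters that would break out of a double-quoted HTML attribute.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// HLS playback URL for a public Mux playback ID.
function hlsUrl(playbackId: string): string {
  return `https://stream.mux.com/${encodeURIComponent(playbackId)}.m3u8`;
}

// One <mux-player> per stream; stream-type="live" tells the player it's a live feed.
function muxPlayerMarkup(stream: StreamInfo): string {
  return (
    `<mux-player playback-id="${escapeAttr(stream.playbackId)}" ` +
    `stream-type="live" ` +
    `metadata-video-title="${escapeAttr(stream.title)}"></mux-player>`
  );
}

const streams: StreamInfo[] = [
  { playbackId: "MAIN_PLAYBACK_ID", title: "TMI main stage" },
  { playbackId: "ASL_PLAYBACK_ID", title: "TMI with ASL interpretation" },
];

for (const s of streams) {
  console.log(muxPlayerMarkup(s));
  console.log(hlsUrl(s.playbackId));
}
```

Using the same player for both feeds keeps the viewing experience consistent whichever stream a viewer picks; only the playback ID changes.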

Accessible streaming

As mentioned briefly above, perhaps the most audacious part of the entire setup (and probably the most underappreciated) was the ASL-interpreted stream. One of the ambitious engineers on our real-time team took it upon himself to build the pieces needed for a seamless interpreted-stream experience.

The basic platform for our interpreted stream was built last year for Demuxed 2021. For that event, we built a recording web app with Next.js (we’ll call it the ASL Studio) that let someone screen-share the HLS stream into a real-time video call. An interpreter from our favorite ASL consultancy would also join the video call and interpret the stream in sign language. The composited video of the call was then sent to Mux Web Inputs and re-streamed for all to view. This was a great way for us to prove out the utility of Web Inputs, and it also gave us a really easy way to provide additional accessibility with minimal friction. The only things the interpreter needed were a web browser and an internet connection; they didn’t need to be at the conference itself to interpret.

When we first created the ASL Studio, we had to build it on another third-party real-time video vendor’s platform, because Mux Real-Time Video didn’t exist yet. Now, with Real-Time Video as a headline announcement at TMI, we were able to port the ASL Studio over to Mux Real-Time Video to demonstrate the combined power of Mux Real-Time Video and Web Inputs.

Real-time applications are notoriously hard to build and complicated to refactor. That made porting the ASL Studio from the third-party vendor to Mux Real-Time Video a true test of whether we had chosen the right level of abstraction when we built Mux Real-Time Video. To our delight, the time spent designing our Real-Time Video API paid dividends. We ported from the vendor to Mux Real-Time Video in only two days, cutting more than 200 lines of code and reducing our state complexity along the way. This was exactly the story we wanted to tell with our new real-time products. With Mux Real-Time Video, you now have the power to provide incredibly rich video experiences to your customers without too much code or complexity.

Pressing play on TMI

After a lot of planning, countless meetings, and more than a few sleepless nights, the day of TMI was upon us. Luckily, there weren’t any major surprises to deal with that morning. We started the stream from vMix about ten minutes before the show, then checked that the captions were working, that the sign language interpreters could see and hear the event, and that their video was being properly composited onto the interpreted stream. Then we set both streams live on the events platform, and the show was underway for our attendees.

Other than a couple of minor hiccups (we lost internet at the venue a couple of times, for about half a minute each), the event itself ran smoothly. So smoothly, in fact, that several people in the event chat couldn’t believe it was being done live. One even dared our emcee to scratch her ear to prove it really was live, a challenge we were happy to relay to her.

So, you may be asking yourself: what lessons did Mux learn from all of this? We took away three important lessons from producing TMI. First, we learned that turning an in-person conference venue into a makeshift studio is a lot of work. Even setting the interpreted stream aside, there’s a huge difference between doing something simple (like what we’ve done for Demuxed, with volunteers and a hodgepodge of equipment) and doing a full-on professional studio production. Next time we do an online-only event, we’ll lean toward renting a proper studio. Second, we learned how important it is to have great partners. Simply put, Mux could not have produced such a great event on its own, especially given the time constraints. Third, we learned that a full rehearsal would have been better than an abbreviated run-through. With a full rehearsal, we could have avoided a few minor hiccups related to the length of some talks and had smoother audio transitions between speakers.

We hope that you’ve enjoyed this peek behind the curtain and that you’ve learned a thing or two about how we produced The Mux Informational. If you have any questions, feel free to reach out to us at events@mux.com, and we’ll get back to you.