Since NAB has been cancelled, we have been talking internally at Mux about how to share what we’ve been working on and what we’re focusing on for the rest of 2020. In lieu of 48 hours standing at a booth talking with hundreds of people, we’re going to publish a series of blog posts covering new features and architectural improvements that can give you better visibility into your video streams. It’s probably not quite as much fun as the Mux party, but hopefully you’ll enjoy it.

As these announcements come out, you’ll notice a few common themes: continually improving the developer experience, helping operations teams become more efficient, and increasing the transparency of the video experience, all in an effort to improve what has recently been popularized as “observability”.

Even though “observability” gets thrown around a lot as jargon, it may not be clear what it means for you and your applications. Observability often comes up in conversations with Mux Data users, though not always by that name, so it’s worth talking about it a bit to provide some context for the features we will be releasing.

What is Observability?

If you looked up the definition, you’d find that an observable system lets an operator infer how the system is functioning from knowledge of its outputs alone. That’s awfully sterile, but the basic idea is that you can understand what happens in a technical workflow through detailed logging, tracing, and monitoring that can be correlated across services. This is valuable because, when something does go wrong, you can explain the problem and trace how its impact propagated through the workflow to the user.

“Video Observability” describes rich end-to-end monitoring of complex video streaming platforms. It encompasses many things, including but not limited to: end-user quality of experience measurements, CDN logs, packager metrics, origin and storage performance, encoder quality monitoring, CDN switching, engagement metrics, and churn prediction.

There is a lot to video observability, but let’s start with monitoring. Monitoring tracks the performance of a resource over time. Most applications and services use some sort of monitoring. Often it is a basic liveness check against a service endpoint; other times it is a very granular resource monitor. For example, you might track CPU usage on the instances running your ingest services, or the current number of viewers on a live stream.

This type of monitoring is extremely valuable and necessary to ensure the overall health of the streaming pipeline or of specific services within your infrastructure. If something goes wrong with your service, monitoring can help pin down the instance where errors occurred and, sometimes, why they occurred. If, for example, failures spike for your video streaming customers, client failure monitoring helps you identify the incident quickly.

But what happens if you get a complaint from a user, or you need more detail than a monitor contains? This is where observability comes in. If you are able to observe the system, you can ideally visualize exactly what the user experienced and how your services performed. Rather than looking only at metrics such as averages or percentiles, you want to report on the individual sessions and events that are aggregated into those metrics. That detail allows you to debug issues more accurately, understand the user experience more fully, and better support your users.
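To make the difference concrete, here is a minimal Python sketch, assuming a hypothetical `View` record whose field names are illustrative and not Mux Data’s schema. A metric like failure rate is an aggregate over views; keeping the underlying views is what lets you answer a question about one specific viewer that the metric alone cannot.

```python
from dataclasses import dataclass


@dataclass
class View:
    view_id: str     # hypothetical per-session identifier
    user_id: str     # hypothetical viewer identifier
    failed: bool     # True if playback ended in a fatal error
    error_code: str  # illustrative label; empty when the view did not fail


def failure_rate(views: list[View]) -> float:
    """The monitoring view: the fraction of views that failed."""
    if not views:
        return 0.0
    return sum(v.failed for v in views) / len(views)


def views_for_user(views: list[View], user_id: str) -> list[View]:
    """The observability view: the raw sessions behind the metric for one viewer."""
    return [v for v in views if v.user_id == user_id]


views = [
    View("v1", "alice", False, ""),
    View("v2", "bob", False, ""),
    View("v3", "carol", True, "decode_error"),
    View("v4", "dave", False, ""),
]

print(f"failure rate: {failure_rate(views):.0%}")  # failure rate: 25%
for v in views_for_user(views, "carol"):
    print(v.view_id, v.error_code)  # v3 decode_error
```

The 25% figure tells you something is wrong; only the retained per-view records tell you that it was Carol’s session, and how it failed.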

Examples

To make this more concrete, here are a few examples from Mux Data:

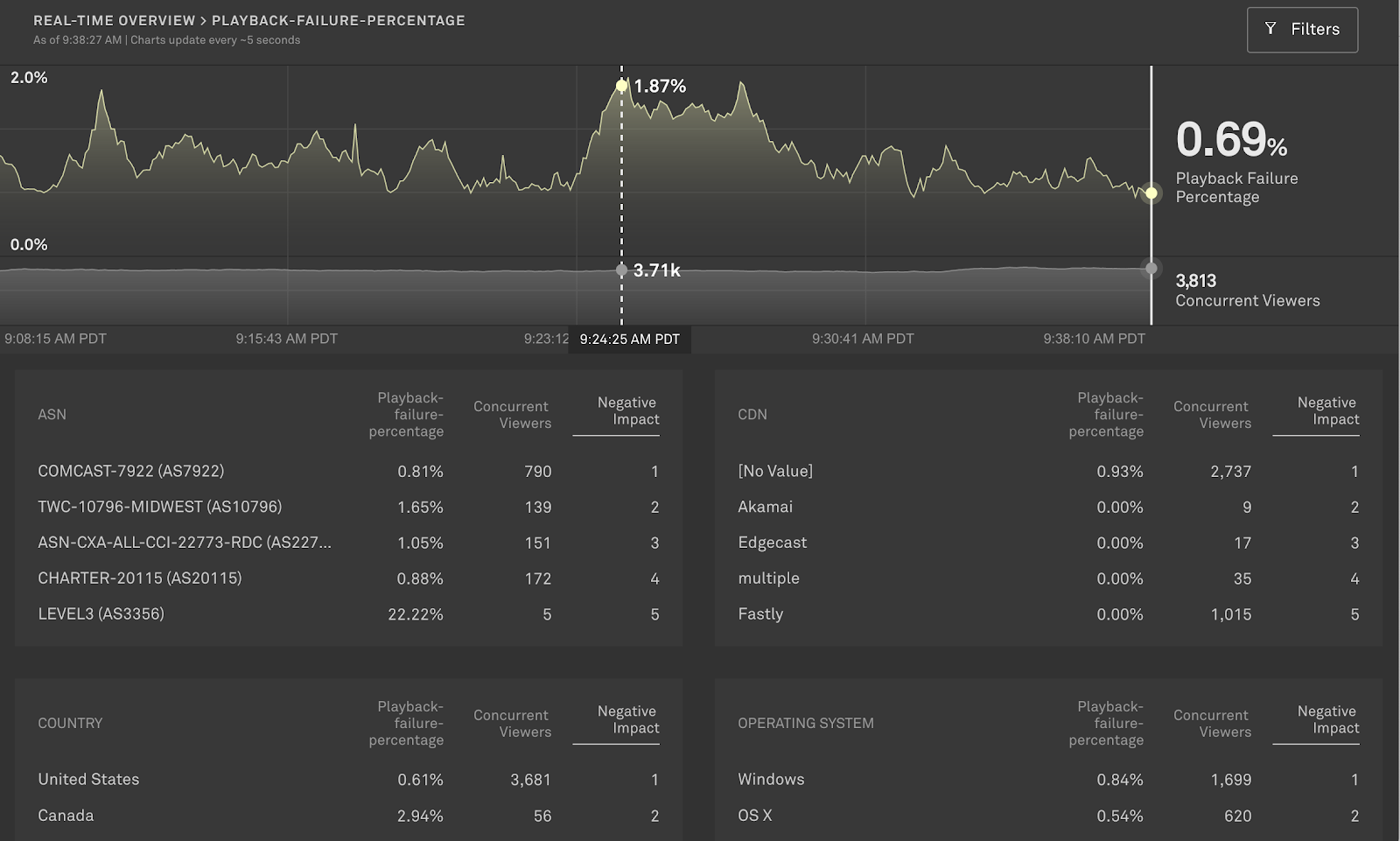

Developers and operators can monitor the performance of their streams in real time. As viewers watch your videos, performance metrics are displayed on the real-time dashboard. For example, you can see the level of rebuffering or playback failures the viewer population is experiencing.

This is what monitoring gives you. You can watch for overall changes in video quality or be alerted when something goes wrong. You can even filter by specific metadata to help diagnose and pinpoint where issues are occurring. But you can’t tell specifically what happened in an individual video session; you aren’t able to infer the functioning of your system for a specific viewer. When a report about a specific user comes in, you have to piece together monitoring data about a whole population of users to build a story of what might have gone wrong for that one viewer.

To find out what actually happened, you need to be able to observe the experience, which requires more data at a finer granularity. If something does go wrong for a viewer, you need to see what requests were made, how an error interrupted the viewer’s experience, and how it affected the rest of their video session.

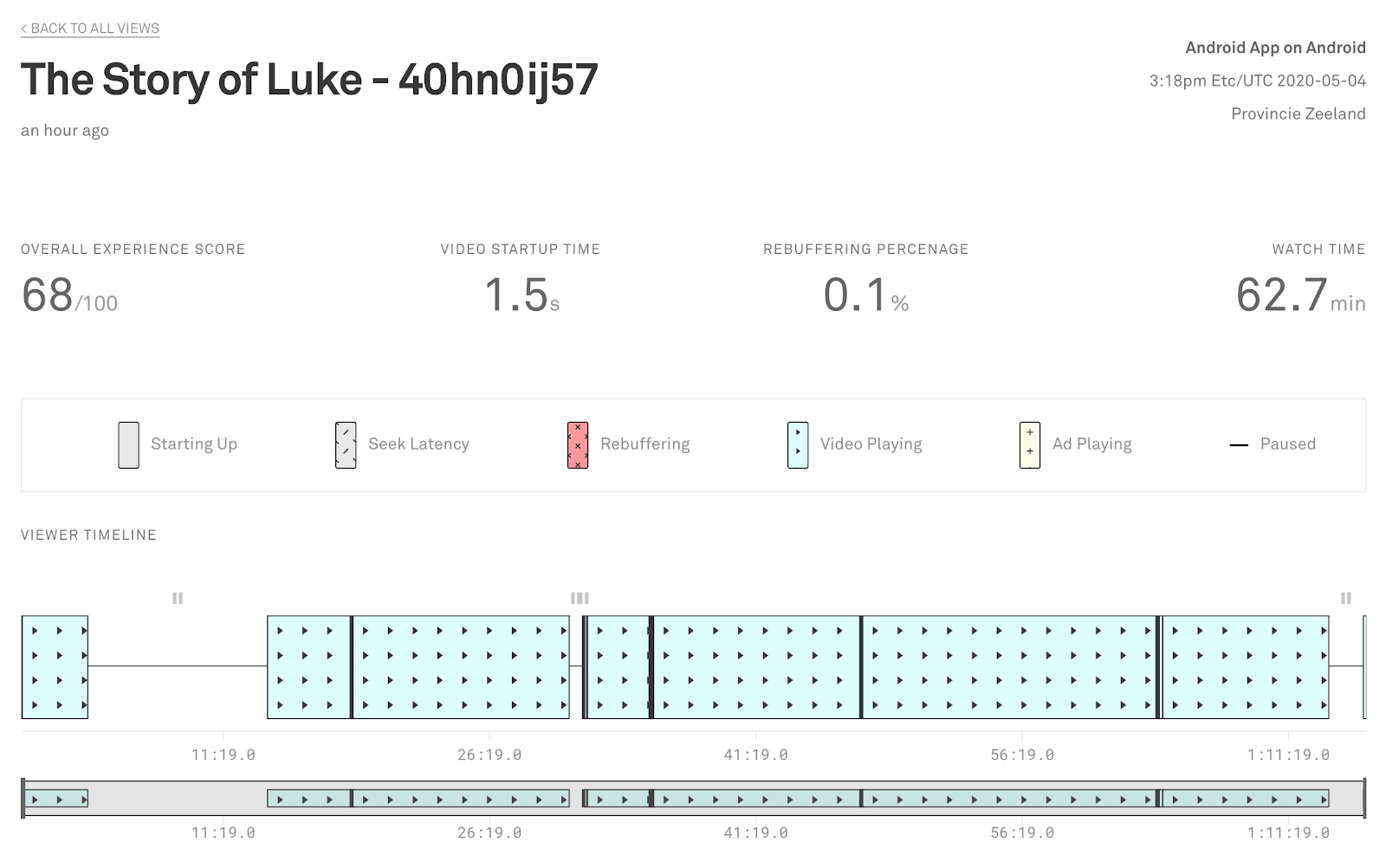

Mux Data lets you see a detailed experience timeline for each view. From there you can see when and how many times rebuffering occurred in a specific video view, or when the user finally gave up and dropped off. You can also track the ABR segment requests that were made (where the player supports it) and the quality changes that occurred throughout the stream.
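At its core, a view-level timeline is an ordered list of player events. The sketch below assumes hypothetical event names (`rebuffer_start`, `rebuffer_end`, `rendition_change`) rather than any real player or Mux Data schema, and shows how one view’s timeline can be summarized: how many times and for how long it rebuffered, which renditions it moved through, and whether it ended in the middle of a stall.

```python
from dataclasses import dataclass


@dataclass
class PlayerEvent:
    t_ms: int         # milliseconds since the view started
    kind: str         # hypothetical event names, not a real player or Mux schema
    detail: str = ""  # e.g. the rendition selected on a "rendition_change"


def summarize_view(events: list[PlayerEvent]) -> dict:
    """Summarize one view's experience timeline."""
    timeline = sorted(events, key=lambda e: e.t_ms)
    rebuffer_count = 0
    rebuffer_ms = 0
    stall_started = None  # start time of the rebuffer in progress, if any
    renditions = []
    for e in timeline:
        if e.kind == "rebuffer_start" and stall_started is None:
            rebuffer_count += 1
            stall_started = e.t_ms
        elif e.kind == "rebuffer_end" and stall_started is not None:
            rebuffer_ms += e.t_ms - stall_started
            stall_started = None
        elif e.kind == "rendition_change":
            renditions.append(e.detail)
    return {
        "rebuffer_count": rebuffer_count,
        "rebuffer_ms": rebuffer_ms,
        "renditions": renditions,
        "last_event_ms": timeline[-1].t_ms if timeline else 0,
        # Heuristic: a stall that never ended suggests the viewer gave up mid-rebuffer.
        "ended_while_rebuffering": stall_started is not None,
    }


events = [
    PlayerEvent(0, "playing"),
    PlayerEvent(1200, "rendition_change", "720p"),
    PlayerEvent(5000, "rebuffer_start"),
    PlayerEvent(6500, "rebuffer_end"),
    PlayerEvent(6600, "rendition_change", "480p"),
    PlayerEvent(9000, "rebuffer_start"),
]

summary = summarize_view(events)
print(summary)
```

Here the viewer stalled twice, dropped from 720p to 480p in between, and the view ends during the second stall; the "ended while rebuffering" flag is only a heuristic, since a truncated event stream would look the same.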

View-level granularity and visibility make it possible to diagnose issues and find areas where the viewer experience can be improved. If a user calls customer service or complains on Twitter, you have more information available to diagnose that specific user’s session, rather than relying on aggregated metrics in which the problem may be lost in the noise of the system.

In addition, the player is only one part of the video serving platform. Almost all video services also have some form of ingest, serving, and caching before the video arrives at the player. Each layer has its own failure modes and monitoring; some failures can be diagnosed by observing the user experience, but not all of them.

We see many Mux customers embarking on large observability projects to consolidate the metrics, logs, and traces generated by their platform components. All of this disparate data is ingested into a common data storage platform with the goal of correlating the video experience with service performance across the tiers, so that a video request can be observed all the way from origin to viewer. Requests across services are joined in the data by a common metadata ID, or by the client IP address and video ID. That can make it possible to visualize how, say, a problem with the ingest of a specific rendition of a video affected a specific viewer. Aggregated alerting and monitoring are built on top of that same data.
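The IP-and-video-ID join described above can be sketched in a few lines of Python. Everything here is an assumption for illustration: the `PlayerView` and `CdnLogLine` records and their fields are hypothetical, and the CDN log is assumed to have already been parsed so that each line carries a video ID.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PlayerView:
    view_id: str    # hypothetical player-side session identifier
    client_ip: str
    video_id: str


@dataclass
class CdnLogLine:
    client_ip: str
    video_id: str   # assumed to be parsed out of the request path beforehand
    path: str
    status: int
    edge_ms: int    # time the edge took to serve the request


def join_views_with_cdn(
    views: list[PlayerView], cdn_lines: list[CdnLogLine]
) -> dict[str, list[CdnLogLine]]:
    """Attach CDN requests to the player views that share (client IP, video ID)."""
    by_key = defaultdict(list)
    for line in cdn_lines:
        by_key[(line.client_ip, line.video_id)].append(line)
    # .get() avoids inserting empty entries for views with no matching requests.
    return {v.view_id: by_key.get((v.client_ip, v.video_id), []) for v in views}


views = [
    PlayerView("v1", "203.0.113.5", "vid42"),
    PlayerView("v2", "198.51.100.7", "vid42"),
]
cdn_lines = [
    CdnLogLine("203.0.113.5", "vid42", "/vid42/720p/seg1.ts", 200, 35),
    CdnLogLine("203.0.113.5", "vid42", "/vid42/720p/seg2.ts", 504, 3000),
    CdnLogLine("198.51.100.7", "vid99", "/vid99/480p/seg1.ts", 200, 40),
]

joined = join_views_with_cdn(views, cdn_lines)
for req in joined["v1"]:
    if req.status >= 500:
        print("v1 hit a server error:", req.path, req.status)
```

IP address plus video ID is a fallback key: viewers behind the same NAT, or one viewer watching the same video twice, will collide. In practice you would also bound the join by a time window, or propagate a common metadata ID through every request so the join is exact.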

So far, just about every Mux customer has chosen a different approach to this problem. Some use log parsing and data warehouse platforms; others use cloud services like BigQuery. Some customers are building daily ingestion and processing, while others are building real-time pipelines. We want to help developers succeed at this, so our data is available in a number of ways: automated exports, REST APIs, SDKs, or real-time streams. Developers can choose the method that works best for their integration platform and their expertise.

Whatever tool you choose to monitor your video, look for one that lets you both monitor and build an observable system. We continue to believe that observability is foundational to delivering a great video experience, and there is always more we can do to make it easier for developers to build more observable, higher-quality experiences. We’d love to hear how you are building more observable systems and what Mux could do to make it easier.