We’ve all had those moments: You’re trying to debug something, you decide you’ve reached your absolute limit, and the only possible explanation you can come up with is that computers suck, the internet is terrible, and we should all just pack it up and go back to living in the woods. You’re ready to trade in your laptop for a bow and arrow so you can strike out on your own, learn to hunt, and live off the land, never having to deal with computers again.

But then someone has a new idea. Maybe you haven’t exhausted all your options. Maybe you can peel back just one more layer and keep trying to find where this bug is coming from.

If you work in the internet video space, you may have experienced these kinds of bugs more than most. I’m here to tell one such story from Mux that involved coordinating across 3 different teams, buying discontinued hardware off eBay, exhausting every possible industry contact that might have any insight, handcrafting HLS video manifests, and ultimately resolving a bug that had us ready to pack up and head for the wilderness.

The seemingly benign support ticket

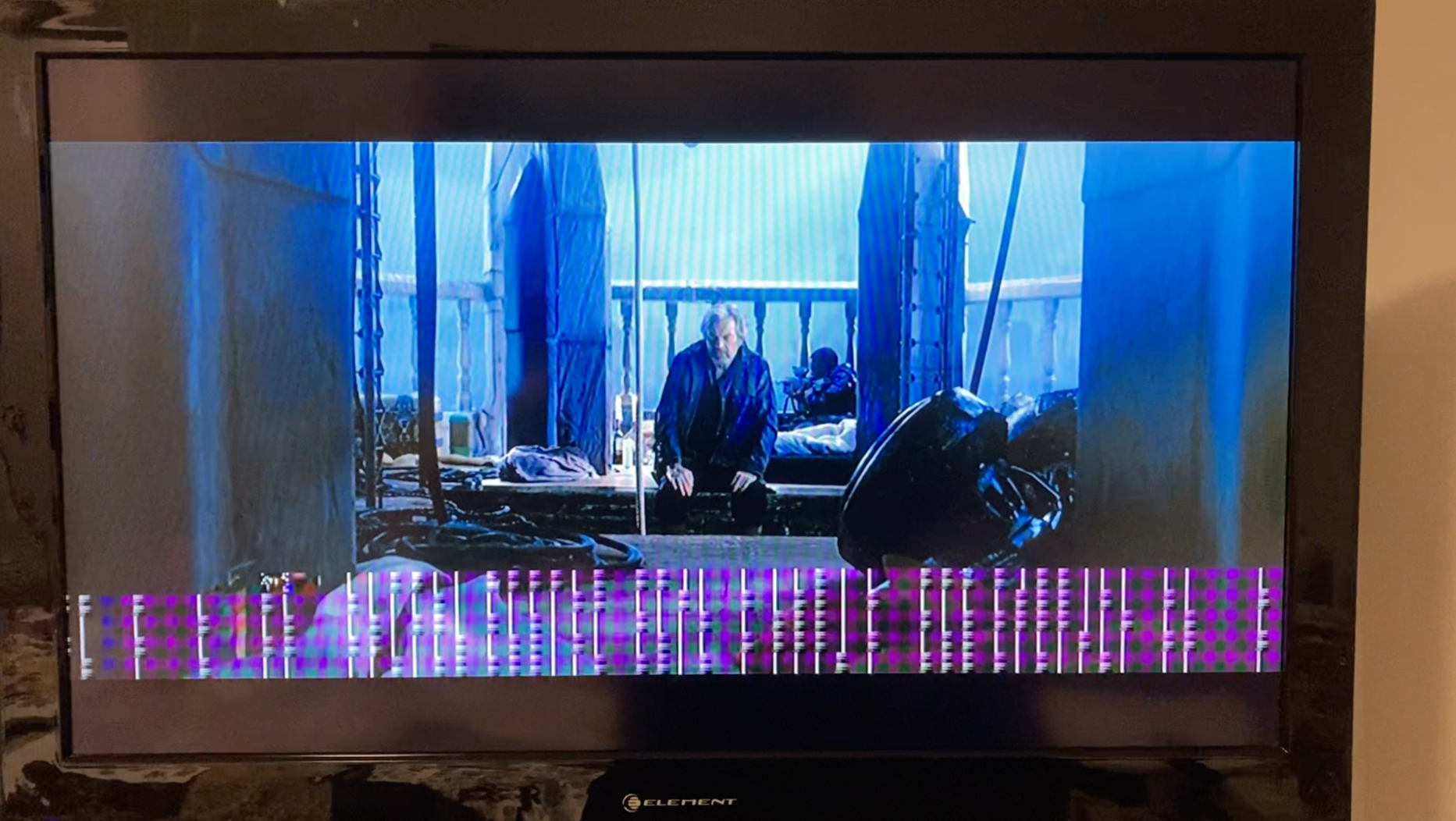

Little did we know that what started as a seemingly benign support ticket would take us down a path of seriously messy debugging. One customer started reporting several issues on Roku devices. Our support team asked, as any good support team would: What software and hardware version are you using? No dice. Things got murkier from there. The problem was described as freezing. The user would play the video, and it would suddenly freeze at a random point.

But the tricky bit was that it was inconsistent. The issue itself would change over repeated tests on the same device. Either it wouldn’t happen at all, or it would happen again but at a different point in the video. And it never happened to anyone at Mux trying to reproduce the issue on web browsers or mobile devices. It was only happening to users reporting it on Roku devices.

The ticket was swiftly escalated to our solution architecture team, who dug in further. Every possible theory we came up with was quickly debunked. The more we dug in, the more bizarre this bug appeared. Testing was completed across multiple Roku devices. This process ruled out any one model, display, stream, cable, or network as the root cause and even accounted for power, radio interference, uptime, and more.

We were at a standstill. It seemed like we’d exhausted all options. We really needed a reproduction case, but we didn’t know how to get one.

So, we developed a sample app specifically for this purpose. We needed to rule out anything specific to the customer’s application. The sample app also provided access to the decoderStats object, which is a newish associative array from Roku that contains information about the onboard decoder during playback. It lists counts for rendered frames, frame drops, and bitstream errors that can be accessed via the Video SceneGraph Node.

Below is a simplified version of the BrightScript code that enabled the decoderStats object:

    ' trimmed .brs code for enabling decoderStats from a sample app
    sub init()
        m.Video = m.top.findNode("Video")
        setVideoDebugCallbacks()
    end sub

    sub setVideoDebugCallbacks()
        m.Video.enableDecoderStats = true
        m.Video.observeField("decoderStats", "onVideoDecoderStatsChange")
    end sub

    sub onVideoDecoderStatsChange()
        print m.Video.decoderStats
    end sub

    ' example output:
    ' <Component: roAssociativeArray> =
    ' {frameDropCount: 0, renderCount: 24782, repeatCount: 0, streamErrorCount: 7}

Armed with this new decoder stats data, and after a lot of internal discussions, we observed a key insight. Although the problem seemed inconsistent, it was beginning to have a clear signature when it did occur. The decoderStats object was incrementing the bitstream errors while the freezing issue was occurring. This suggested that something in the actual video stream that Roku was decoding had errors in it. Oddly enough, the problem appeared to be cyclical, with a period proportional to the chunk length of the input stream. It was as if there was a busted loop.

At this point, we could take the following 3 things as ground truth to build on:

- The decoderStats errors indicated something was wrong with the stream itself.

- The freezing cycles matched the chunk length.

- We couldn’t reproduce the issue consistently, even on the same device and stream.
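To make the "cycles match the chunk length" observation concrete, here is a minimal Python sketch of how such a check could be done offline. It assumes a hypothetical log of (seconds since playback start, streamErrorCount) samples collected from the decoderStats callback; the sample values, function names, and tolerance are illustrative and are not taken from the original investigation.

```python
def error_burst_times(samples):
    """Return the timestamps at which streamErrorCount increased.

    `samples` is a list of (seconds_since_playback_start, streamErrorCount)
    pairs. This log format is an assumption made for the sketch.
    """
    bursts = []
    previous = None
    for timestamp, count in samples:
        if previous is not None and count > previous:
            bursts.append(timestamp)
        previous = count
    return bursts


def looks_periodic(bursts, segment_seconds, tolerance=0.5):
    """True if every gap between bursts is close to a whole number of segments."""
    gaps = [later - earlier for earlier, later in zip(bursts, bursts[1:])]
    if not gaps:
        return False
    for gap in gaps:
        multiple = round(gap / segment_seconds)
        if multiple < 1 or abs(gap - multiple * segment_seconds) > tolerance:
            return False
    return True


# Made-up samples: the error counter jumps every 15 seconds of playback.
samples = [(0, 0), (5, 0), (10, 2), (15, 2), (20, 2), (25, 5), (30, 5), (40, 7)]
bursts = error_burst_times(samples)
print(bursts)                                     # [10, 25, 40]
print(looks_periodic(bursts, segment_seconds=5))  # True
```

A check like this only says the error bursts line up with segment boundaries; it does not say why.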

Pulling on the only thread we have

Now that we’d observed a pattern, the next step was to strip away as many variables as possible and try to boil things down to the simplest possible reproducible test case. We did this by handcrafting an HLS manifest.

First, we modified the master manifest to have only 1 variant (a normal Mux manifest has 5 variants). Then we downloaded the entire variant list, modified the variant list to use relative URLs, and modified each segment in the variant list. It ended up looking like this:

    #EXTM3U
    #EXT-X-VERSION:5
    #EXT-X-INDEPENDENT-SEGMENTS
    #EXT-X-STREAM-INF:BANDWIDTH=2516370,AVERAGE-BANDWIDTH=2516370,CODECS="mp4a.40.2,avc1.640020",RESOLUTION=1280x720,CLOSED-CAPTIONS=NONE
    rendition_720.m3u8

    #EXTM3U
    #EXT-X-TARGETDURATION:6
    #EXT-X-VERSION:3
    #EXT-X-PLAYLIST-TYPE:VOD
    #EXTINF:5,
    ./0.ts
    #EXTINF:5,
    ./1.ts
    ...
    #EXTINF:5,
    ./734.ts
    #EXTINF:5,
    ./735.ts
    #EXT-X-ENDLIST

The decoderStats gave us a clue that something might be wrong with the individual segment files themselves. It was weird, though; normally, when something is wrong with individual segment files, playback will break at the same part of the video consistently. In this case, the video would freeze at different points during playback sessions. But at least we could start investigating something.
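For anyone who wants to build a similar static test case, the relative-URL rewrite of the variant playlist can be sketched in a few lines of Python. This is a rough illustration rather than the tooling we used: the function name, the `example.invalid` URLs, and the `./<index>.ts` naming scheme are assumptions chosen to match the manifest above.

```python
def localize_playlist(playlist_text):
    """Rewrite every segment URI in an HLS media playlist to ./<index>.ts.

    Returns the rewritten playlist text plus a list of
    (original_uri, local_name) pairs, so each segment can be downloaded
    and saved under its new name.
    """
    out_lines = []
    mapping = []
    for line in playlist_text.splitlines():
        stripped = line.strip()
        # In a media playlist, any non-blank line that is not a tag or
        # comment (i.e. does not start with '#') is a segment URI.
        if stripped and not stripped.startswith("#"):
            local_name = "{}.ts".format(len(mapping))
            mapping.append((stripped, local_name))
            out_lines.append("./" + local_name)
        else:
            out_lines.append(line)
    return "\n".join(out_lines) + "\n", mapping


# Hypothetical input playlist with absolute, tokenized segment URLs.
original = """#EXTM3U
#EXT-X-TARGETDURATION:6
#EXT-X-VERSION:3
#EXT-X-PLAYLIST-TYPE:VOD
#EXTINF:5,
https://example.invalid/video/a1b2.ts?token=abc
#EXTINF:5,
https://example.invalid/video/c3d4.ts?token=abc
#EXT-X-ENDLIST
"""

rewritten, mapping = localize_playlist(original)
print(rewritten)
```

Serving the rewritten playlist and the downloaded segments from a plain static file server removes tokens, redirects, and rendition switching from the picture.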

We examined the .ts files with ffprobe:

    ffprobe -show_packets segment.ts

With ffprobe, we could take careful note of how the media was multiplexed. There were slight issues, like the audio leading the video by 9 packets, but that amounted to less than 0.1s of delay, far too little to explain the bug we were seeing.
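Reading packet dumps by eye gets tedious across hundreds of segments, so a check like the one described above can be scripted. The sketch below asks ffprobe for JSON output and counts how many audio packets appear before the first video packet. Treating "audio leading the video by 9 packets" as this particular count is our assumption for the example, and the helper names are hypothetical.

```python
import json
import subprocess


def probe_packets(path):
    """Run ffprobe on a file and return its packet list as Python dicts."""
    result = subprocess.run(
        ["ffprobe", "-v", "error", "-show_packets", "-of", "json", path],
        capture_output=True, text=True, check=True,
    )
    return json.loads(result.stdout)["packets"]


def leading_packets(packets, lead_type="audio", other_type="video"):
    """Count packets of lead_type that appear before the first other_type packet."""
    count = 0
    for packet in packets:
        kind = packet.get("codec_type")
        if kind == other_type:
            return count
        if kind == lead_type:
            count += 1
    return count


# Synthetic packets in the shape ffprobe emits, so the sketch runs without
# ffprobe installed. With a real file: leading_packets(probe_packets("0.ts"))
sample = [{"codec_type": "audio"}] * 9 + [
    {"codec_type": "video"},
    {"codec_type": "audio"},
]
print(leading_packets(sample))  # 9
```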

At this point, we really wanted to reproduce the issue in a static way. We needed to do this in an environment that allowed us to modify the segments and fix the root cause. Our hope was that if we could reproduce it in a static environment, then we could see what was wrong and try re-muxing the segments in different ways to fix the underlying issue.

More devices

The logical next step was to get our hands on some older Roku models that corresponded to the hardware our customers originally reported. We found 3 used ones on eBay. We spent more time than we’re willing to admit tracking down those devices.

At this point, we had 2 versions of 1 video asset:

- The full version of the asset delivered from stream.mux.com

- A local version of the asset with only 1 variant

With these 2 versions in hand, we tried all the usual suspects to get a more reliable reproduction case:

- We played each version through the Roku Stream Tester while trying to break the playback with aggressive seeking.

- We left the machines running for a long time to try to induce issues with memory leaks, longer uptime, and heat accumulation.

- We tried different (lower amperage) power supplies and USB cables to try to induce a power problem that we theorized could bottom out in hardware decoder errors.

Again, NO DICE. It seemed like no matter what we did, we were still unable to get a reliable reproduction case.

Something in the logs smelled funny

Even though we did not have a repro case, we did observe something in the CDN delivery logs that smelled funny. We couldn’t tell if it was related, but it piqued our curiosity enough to make note of it.

With one of our reproduction tests, we were able to trace the exact requests back to an entry in a CDN delivery log that included HTTP status codes, plus the Range and Content-Range header values for the request and response. We saw very clearly the following:

- The client was a Roku (identified by User-Agent)

- The client requested part of a segment

- We responded with a partial range response

- The client never requested the rest of the segment

This wasn’t necessarily a smoking gun, but it was a bit odd that the client requested a partial segment and then never requested the rest. Maybe it was okay; the player might have been seeking, or switching renditions. But it was a little peculiar. We called these “truncated segments.”

However, the timestamp for the partial segment request lined up with the freezing issue. So this seemed super suspicious. Now we had something we could try to reproduce.
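A pattern like this can also be searched for mechanically once the logs are parsed. The following Python sketch flags (client, URL) pairs where the bytes delivered stop short of the end of the segment. The log entry fields (`client`, `url`, `status`, `last_byte`) and the table of known segment sizes are hypothetical, and the sketch assumes each client requests ranges contiguously from byte 0.

```python
from collections import defaultdict


def find_truncated(entries, segment_sizes):
    """Return sorted (client, url) pairs whose delivered bytes stop before the segment end.

    `entries` are parsed log records; `segment_sizes` maps each URL to the
    full size of that segment in bytes. Both shapes are assumptions.
    """
    highest = defaultdict(lambda: -1)  # highest byte offset delivered so far
    for entry in entries:
        key = (entry["client"], entry["url"])
        if entry["status"] == 200:
            # A full response delivers the whole segment.
            highest[key] = segment_sizes[entry["url"]] - 1
        elif entry["status"] == 206:
            highest[key] = max(highest[key], entry["last_byte"])
    return sorted(
        key for key, last in highest.items()
        if last + 1 < segment_sizes[key[1]]
    )


# Made-up log records for two segments of 752000 bytes (4000 TS packets) each.
entries = [
    {"client": "roku-1", "url": "/v/12.ts", "status": 206, "last_byte": 2000},
    {"client": "roku-1", "url": "/v/13.ts", "status": 206, "last_byte": 2000},
    {"client": "roku-1", "url": "/v/13.ts", "status": 206, "last_byte": 751999},
    {"client": "web-1", "url": "/v/12.ts", "status": 200},
]
sizes = {"/v/12.ts": 752000, "/v/13.ts": 752000}

print(find_truncated(entries, sizes))  # [('roku-1', '/v/12.ts')]
```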

We repurposed the static case from earlier to test the problem. We made a Python script that removed a number of transport stream packets (188 bytes at a time) from the end of every third segment:

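    import os

    if __name__ == '__main__':
        base_path = "./vid"
        os.chdir(base_path)
        # Number of 188-byte transport stream packets to cut off;
        # grows by 30 with every segment we truncate.
        blocks_to_chunk_off = 0
        for i in range(0, 138):
            # Only every third segment is truncated.
            if i % 3 == 0:
                with open("{i}.ts".format(i=i), "rb") as inf:
                    data = inf.read()
                # A valid transport stream is a whole number of 188-byte packets.
                if len(data) % 188:
                    raise IndexError
                # Compute the length to keep explicitly: data[:-0] would
                # return an empty segment instead of the whole one.
                keep = max(0, len(data) - blocks_to_chunk_off * 188)
                with open("{i}_tmp".format(i=i), "wb") as outf:
                    outf.write(data[:keep])
                blocks_to_chunk_off += 30
                os.rename("{i}_tmp".format(i=i), "{i}.ts".format(i=i))

A massive breakthrough in the case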

We got something that looked very much like what the customer was reporting. By forcing the partial responses for segments, we observed the issue. At this point, we all felt a tremendous amount of relief while simultaneously feeling the frantic energy of finally, possibly, closing in on the root cause.

Finding the smoking gun

Here at Mux, we use a technology called just-in-time (JIT) transcoding to make delivering our customers’ videos faster, cheaper, and future-proof. JIT makes it possible for us to process video 100x faster. Instead of generating end-user-deliverable video as soon as your content is uploaded, we create and store only a single “mezzanine” file that’s optimized for storage and transcode time. Only when a viewer goes to watch a particular segment of a particular video do we go and transcode the content — in real time.

This technology has its advantages, but it also means that, when answering a range request for the first time, we don’t actually know the total size of the content the user is requesting. This means that in our Content-Range response headers, we use the wildcard (for example, Content-Range: bytes 0-2000/*) to indicate that the total size is not yet known. Once the JIT-transcoded segment is finished, subsequent reads have a fully specified Content-Range.
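To make the two header shapes concrete: in HTTP the Content-Range value carries a unit, so the wildcard form reads `bytes 0-2000/*` and the fully specified form reads something like `bytes 0-2000/752000`. Here is a small Python sketch of how a client might parse both. The function name and the sample total are ours, and the sketch deliberately ignores the unsatisfied-range form (`bytes */total`).

```python
import re

# first-last/total, where total may be '*' when the size is not yet known
_CONTENT_RANGE = re.compile(r"^bytes (\d+)-(\d+)/(\d+|\*)$")


def parse_content_range(value):
    """Parse 'bytes first-last/total' into (first, last, total_or_None)."""
    match = _CONTENT_RANGE.match(value.strip())
    if match is None:
        raise ValueError("unsupported Content-Range: {!r}".format(value))
    first, last, total = match.groups()
    return int(first), int(last), (None if total == "*" else int(total))


print(parse_content_range("bytes 0-2000/*"))       # (0, 2000, None)
print(parse_content_range("bytes 0-2000/752000"))  # (0, 2000, 752000)
```

A client that maps `*` to "unknown" rather than treating it as an error can then decide for itself whether to keep requesting.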

We now had a credible theory: These Rokus weren’t sure what to do when faced with a Content-Range of an unknown size. Rather than venture off into the unknown and keep requesting ranges of a file until the total size is known, the clients prefer to move on to the security and comfort of future HLS segments where things are more cut and dried and ranges have a known size.
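For contrast, here is a rough Python sketch of what "keep requesting ranges until the total size is known" could look like on the client side. It is not a description of how the Roku player or our origin works: `fetch_range` is a hypothetical callable standing in for an HTTP range request, and the fake origin below only simulates a total that becomes known after a couple of responses. The sketch also assumes a short read means there is nothing more to fetch.

```python
def download_segment(fetch_range, chunk_size):
    """Fetch a resource in ranges, tolerating responses whose total size is unknown.

    `fetch_range(first, last)` is a hypothetical callable returning
    (body_bytes, total_or_None); total_or_None mirrors the part of
    Content-Range after the slash, with None standing for '*'.
    """
    data = bytearray()
    while True:
        first = len(data)
        body, total = fetch_range(first, first + chunk_size - 1)
        data.extend(body)
        if total is not None and len(data) >= total:
            break  # the server told us the size and we have all of it
        if len(body) < chunk_size:
            break  # short read: assume nothing more is available
    return bytes(data)


def make_fake_origin(payload, known_after):
    """Simulate an origin that only reports the total after `known_after` responses."""
    calls = {"n": 0}

    def fetch_range(first, last):
        calls["n"] += 1
        total = len(payload) if calls["n"] > known_after else None
        return payload[first:last + 1], total

    return fetch_range


payload = bytes(range(256)) * 4  # 1024 bytes of stand-in segment data
fetch = make_fake_origin(payload, known_after=2)
print(download_segment(fetch, 300) == payload)  # True
```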

The best fix we could come up with

Sometimes, a bug will be hard to track down but will ultimately have an obvious fix. This was not one of those times. The unanswerable question here (or maybe answerable, depending on who you ask) is: Who is at fault here?

Let’s recap the scenario:

- The player requests an HLS segment using a range request, and Mux responds to that request with HTTP 206 Partial Content and a Content-Range header with * in place of the total length after the slash. This is a valid response.

- This was the first time any client had requested that specific segment (so we were doing JIT transcoding).

- The player chokes on this unknown content length, plays back only the returned partial segment, skips over the rest, and jumps ahead to the next segment.

Is Mux at fault here for responding with unknown-length ranges? Or is Roku at fault for not knowing how to handle unknown-length responses? Does it even matter who’s at fault? At the end of the day, there are customers out there trying to watch video on their Rokus, and that video isn’t working. We can sit around and talk about whose fault it is later, but for now we have to figure out a fix.

Mux is an infrastructure company. We do not control the code that runs on Roku devices, so the only fix within our control was on the server side. As a low-impact experiment, we took a calculated risk and made a small change so that we simply wouldn’t serve range requests for these User-Agents. This was a bit risky: the specifications for smart TVs explicitly require that video origins support range requests, and we were choosing to explicitly NOT support range requests. Could this backfire? Maybe. But clearly the players weren’t always using range requests. And when they were using range requests, they were choking on them and skipping the rest of the segment.
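The shape of that change can be illustrated with a short Python sketch. This is not our production code: the function names, the `"Roku"` substring match, and the single-range parser are assumptions made for the example. The relevant HTTP behavior is that a server may ignore a Range header and answer with a normal 200 response carrying the full body.

```python
def parse_simple_range(value, size):
    """Parse 'bytes=first-last' or 'bytes=first-' (single range only)."""
    unit, _, spec = value.partition("=")
    first_text, _, last_text = spec.partition("-")
    if unit != "bytes" or not first_text:
        raise ValueError("unsupported Range: {!r}".format(value))
    first = int(first_text)
    last = int(last_text) if last_text else size - 1
    return first, min(last, size - 1)


def plan_response(headers, body, no_range_agents=("Roku",)):
    """Return (status, response_headers, payload) for a segment request.

    Clients whose User-Agent contains one of `no_range_agents` (a
    hypothetical matching rule) always get the full body with a 200,
    even if they sent a Range header.
    """
    user_agent = headers.get("User-Agent", "")
    range_header = headers.get("Range")
    skip_ranges = any(marker in user_agent for marker in no_range_agents)
    if range_header is None or skip_ranges:
        return 200, {"Content-Length": str(len(body))}, body
    first, last = parse_simple_range(range_header, len(body))
    part = body[first:last + 1]
    response_headers = {
        "Content-Range": "bytes {}-{}/{}".format(first, last, len(body)),
        "Content-Length": str(len(part)),
    }
    return 206, response_headers, part


body = b"x" * 1000
print(plan_response({"User-Agent": "Roku/DVP-9.10", "Range": "bytes=0-99"}, body)[0])  # 200
print(plan_response({"User-Agent": "Mozilla/5.0", "Range": "bytes=0-99"}, body)[0])    # 206
```

The trade-off is that affected clients always download whole segments, which is why it mattered to confirm there was no impact on Quality of Experience.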

We weighed the pros and cons and decided to go for it. The result: We haven’t had a single new report of this issue, and there’s been no impact on Quality of Experience.

Learnings

This is neither the only nor the most difficult bug we’ve had to solve. It’s just the most recent one to make it to the blog. In fact, we deal with bugs like this on a semi-regular basis. And every time something like this comes up, we try to distill the lessons we can take away to improve how Mux operates and continues to deliver value to our customers, so that you never have to worry about stuff like this.

Sometimes this stuff is hard

No doubt, multiple team members working on this had a few sleepless nights. Sometimes this stuff is just pretty damn hard. But if you keep banging your head on the problem over and over again, and you have supporting team members to talk about it with, eventually you’ll get to the bottom of things. At the end of the day, it’s just computers. There’s always an answer, and the harder it is to find, the better it feels when you finally do.

The importance of collaboration

We really could not have figured this out if it wasn’t for the close collaboration between the video engineering, support engineering, and solution architecture teams. It would have been impossible for any one of those teams to figure this out on their own. Engineers from each area had to coordinate, putting all their knowledge and debugging skills together and building off each other’s findings to unearth the root cause.

Thanks for following this story. We hope that every time we spend hours and days and nights squashing a bug like this, we’re doing it so that you never have to worry about it!