Over the last year, our team has been working on a new kind of web experience. We've hinted at its development during brand events and conference talks, and we're finally ready to reveal it on the main stage. Introducing: Web Broadcast.

At its core, Web Broadcast enables web developers to stream complex video experiences from the browser without ever having to think about RTMP, Groups of Pictures, or x264 flags. As a web developer, overlaying a scoreboard on your local softball league's stream is a breeze. Hosting a digital conference but want to encourage interaction from your remote viewers? Not a problem! If it can be done via HTML, CSS, and JavaScript, it can be streamed.

Simply put, you build a web page and we'll stream it worldwide.

We’ll discuss the systems lurking in the shadows in a moment, but for now all you need to know is that with Web Broadcasts, you’re always just one request away from live.

With that one request, your website is piped to the standard Mux Live Stream. A stream normally starts with software like OBS, but here we're going to kick things off with a URL.

It all starts with a Broadcast.

http POST https://api.mux.com/v1/video/web-broadcasts \
  url="https://mux.com" livestream_id="foo"

Broadcast headless Chrome, they said. It’ll be fun, they said!

Almost two years ago, we struck our shovels into the ground as we set out to create our WebRTC product, Mux Real-Time. We realized that an essential feature for Real-Time is the ability to broadcast composited video over Mux’s live stream endpoints. How to achieve that though? Broadcasting headless Chrome felt like a natural place to start, given our previous experience using Chrome for video composition. Little did we know, we were in for one hell of a ride.

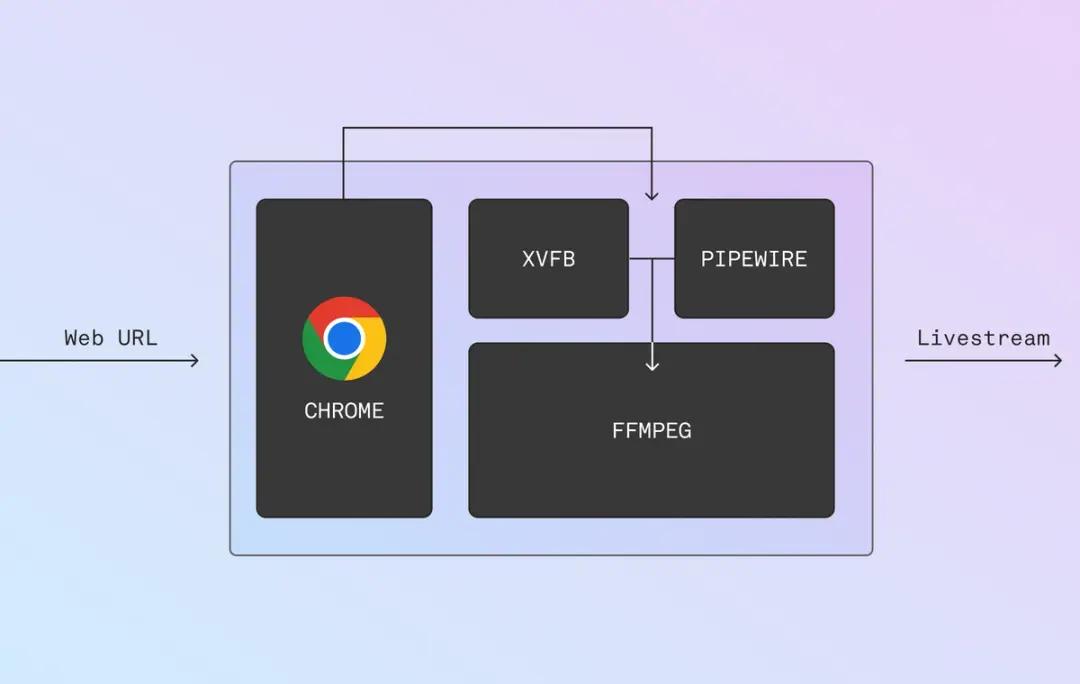

For starters, Chrome can’t run in a vacuum. So we assembled a superstar supporting cast of processes to translate your website to an RTMP stream.

The eyes:

On the video side of things, we use Xvfb, a lightweight, X11-compliant virtual framebuffer server. It creates a virtual display for Chrome and buffers video frames for our transcoder.

The ears:

We initially chose PulseAudio since it’s the de facto Linux audio server these days. That’s an obvious choice, right? Cue foreshadowing. We’ve since experimented with PipeWire and found it was a far better experience. More on that below.

The brain:

Of course, we need a system to package the video frames and audio samples and heave those packets across the network. FFmpeg can capture from both X11 and Pulse natively; another no-brainer!

Drop all those processes in a pot, stir well, and you get something like this:

Chrome displays to X11 and plays audio to Pulse. FFmpeg then reads from Xvfb’s framebuffer and Pulse’s PCM stream and, voilà, you have a functional live stream!

Or so we thought… until we spun up a staging node and were met with a stark white loading screen.
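To make the recipe above a little more concrete, here is a rough Python sketch of how the three processes might be wired together. It only builds and prints the command lines; it doesn't launch anything. The display number, resolution, frame rate, encoder settings, Chrome binary name, and RTMP URL are all placeholder assumptions for illustration, not the actual configuration behind Web Broadcast.

```python
import shlex

# All of these values are hypothetical placeholders.
DISPLAY = ":99"
WIDTH, HEIGHT, FPS = 1280, 720, 30


def xvfb_cmd(display=DISPLAY, width=WIDTH, height=HEIGHT):
    """The eyes: a virtual X11 display with a 24-bit framebuffer."""
    return ["Xvfb", display, "-screen", "0", f"{width}x{height}x24"]


def chrome_cmd(url, width=WIDTH, height=HEIGHT):
    """Chrome in kiosk mode; it must be launched with DISPLAY set (see chrome_env)."""
    return [
        "google-chrome",  # binary name is an assumption
        "--kiosk",
        f"--window-size={width},{height}",
        "--autoplay-policy=no-user-gesture-required",
        url,
    ]


def chrome_env(display=DISPLAY):
    """Environment that points Chrome at the virtual display."""
    return {"DISPLAY": display}


def ffmpeg_cmd(rtmp_url, display=DISPLAY, width=WIDTH, height=HEIGHT, fps=FPS):
    """The brain: grab X11 frames and Pulse audio, encode, and push over RTMP."""
    return [
        "ffmpeg",
        # Video input: read frames from the X11 display.
        "-f", "x11grab", "-video_size", f"{width}x{height}",
        "-framerate", str(fps), "-i", display,
        # Audio input: read PCM from the default Pulse source.
        "-f", "pulse", "-i", "default",
        # Encode to H.264/AAC with a keyframe every two seconds (assumed settings).
        "-c:v", "libx264", "-preset", "veryfast", "-g", str(fps * 2),
        "-c:a", "aac",
        # RTMP expects an FLV container.
        "-f", "flv", rtmp_url,
    ]


if __name__ == "__main__":
    for cmd in (
        xvfb_cmd(),
        chrome_cmd("https://mux.com"),
        ffmpeg_cmd("rtmp://example.invalid/app/STREAM_KEY"),
    ):
        print(shlex.join(cmd))
```

Keeping each command in its own function makes it easier to start and stop the processes independently, which matters once their lifecycles need to be automated.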

First Meaningful Paint

Our first hurdle was timing the start of the live stream. Starting too soon would capture the page loading, while starting too late could result in missing content. Neither option is great.

Enter: First Meaningful Paint (FMP). First Meaningful Paint is a Chrome event that signals when the content of a page is visible to the end user. It’s effectively Chrome’s way of saying “something interesting is on screen right now, and you should pay attention.”

FMP felt like a great event to use as our starting bell, so to speak. So we set up a system to listen to all Chrome events and automate the lifecycle of our processes. Now, when a Web Broadcast starts up, it waits patiently until the page loads before starting the live stream. Smooth sailing, right?

Wrong.

A note from Google: First Meaningful Paint (FMP) is deprecated in Lighthouse 6.0. In practice FMP has been overly sensitive to small differences in the page load, leading to inconsistent (bimodal) results.

Moving forward, we’re hanging our automation off Largest Contentful Paint, which isn’t sensitive to false starts like splash screens or loading wheels.
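The gating idea in this section can be sketched as a tiny state machine: consume browser events as they arrive and give the "go live" signal exactly once, when the awaited paint event shows up. This is a simplified illustration, not our production code. The `StartGate` class, the event dictionaries, and the event names are all hypothetical stand-ins for whatever the browser actually reports.

```python
from dataclasses import dataclass, field


@dataclass
class StartGate:
    """Decides when the live stream should start, based on page events."""

    # Name of the event we wait for. This string is an assumption for the sketch.
    start_event: str = "largestContentfulPaint"
    started: bool = False
    ignored: list = field(default_factory=list)

    def on_event(self, event: dict) -> bool:
        """Return True exactly once, when the awaited event first arrives."""
        if self.started:
            # Already live; later events never restart the stream.
            return False
        name = event.get("name")
        if name == self.start_event:
            self.started = True
            return True
        # Anything else (page still loading, splash screens, ...) is skipped.
        self.ignored.append(name)
        return False


# Simulated event feed, in arrival order.
events = [
    {"name": "DOMContentLoaded"},
    {"name": "firstPaint"},
    {"name": "largestContentfulPaint"},
    {"name": "largestContentfulPaint"},  # repeats must not trigger a second start
]

gate = StartGate()
decisions = [gate.on_event(e) for e in events]
print(decisions)
```

Swapping the trigger from one paint event to another is then a one-line change to `start_event`, which is roughly what moving from FMP to Largest Contentful Paint amounts to in this model. A real browser can report more than one Largest Contentful Paint candidate as bigger elements render; starting on the first one is a simplification here.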

AV Sync

The most nebulous supporting process was actually our first choice of audio server, Pulse. We quickly found that latency was difficult to account for, and the timestamps, even when adjusted for latency, were not monotonically increasing.

In other words, audio timestamps weren’t advancing at a steady rate, and sometimes they stepped backward. Sometimes the live stream would surge forward, and sometimes it would slow down. To the viewer, that looked like misaligned audio tracks and, occasionally, jumbled speech.

We hacked on the Pulse decoder in FFmpeg to assign timestamps based on an audio sample counter instead of the wall clock. Normally, audio packet timestamps are assigned by checking the current time, subtracting the amount of time the samples sat in a buffer, and “de-noising” the result a bit to smooth things out. Instead, since we knew the exact sample rate of the audio and how many samples we had already decoded, we could base our timestamps on some simple math. We found limited success there.
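The "simple math" is roughly this: a packet's timestamp is the number of samples that came before it, divided by the sample rate. Below is a minimal Python sketch of that idea. It is not the actual FFmpeg patch; the `SampleClock` class, the 48 kHz rate, and the 1024-sample packet size are assumptions chosen for illustration.

```python
from fractions import Fraction


class SampleClock:
    """Derives presentation timestamps from a running sample count."""

    def __init__(self, sample_rate: int):
        self.sample_rate = sample_rate
        self.samples_seen = 0

    def stamp(self, num_samples: int) -> Fraction:
        """Return the timestamp (in seconds) for a packet, then advance the counter."""
        pts = Fraction(self.samples_seen, self.sample_rate)
        self.samples_seen += num_samples
        return pts


# Assumed values: 48 kHz audio delivered in 1024-sample packets.
clock = SampleClock(48_000)
timestamps = [clock.stamp(1024) for _ in range(4)]

# Each step is exactly 1024 / 48000 seconds, regardless of when the packet arrived.
steps = [b - a for a, b in zip(timestamps, timestamps[1:])]
print([float(t) for t in timestamps])
```

Because the wall clock never enters the calculation, the timestamps can only move forward and the spacing depends solely on packet size. The trade-off is that any samples dropped upstream go unnoticed, so the audio timeline can slowly drift away from the video.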

That’s when we went looking for alternatives and found PipeWire. PipeWire is a graph-defined multimedia server that was designed for real-time applications by some amazing folks at Red Hat. It comes with Pulse and JACK adapters, and as of version 110, Chromium natively supports it for WebRTC! How's that for buzzwords?

However, there’s no silver bullet, and we had to automate a few state machine interactions around our PCM streams to account for silent streams. Overall though, we're quite happy with the latency controls in PipeWire. Best of all, our timestamps have been rock-solid.

Coming to an API near you

I’d love to say it’s been smooth sailing — but better yet, we put in the long hours so you won’t have to! Web Broadcast is in ongoing development, but seeing as it underpins Real-Time Broadcasts and now the Web Broadcast SDK, we’re excited for you to take it for a spin!

If you’re interested in trying Web Broadcast for yourself, please reach out to web-broadcast@mux.com.

See you, space cowboy.