When writing client SDKs to handle video, we often find ourselves running up against CPU-intensive tasks. When Java/Kotlin is too slow, we use the escape hatch out to C/C++ purely for the purpose of getting better performance. On iOS, Swift and Objective-C tend to be more performant, but there are times we have to invoke general-purpose C/C++ libraries directly.

This doesn’t necessarily apply just to video either. Apps like video games, photo editors, virtual and augmented reality, facial recognition, etc., may require interoperability with the environment outside your normal language, or they may require more performance or different features than your platform ordinarily affords you.

I’ve been hearing a lot of buzz about Rust over the last few years, so I got to thinking: If we’re doing the same kind of CPU-intensive work on iOS and Android, could we use Rust to write the code once and ship it to iOS, Android, and (dare I add a third platform) the web? I don’t have an extensive web-programming background, but I have noticed that WebAssembly has become a more widely embraced technology, allowing developers to pair JavaScript with a complementary C-compatible language.

This all sounds nice in theory, but could it actually work? I was determined to find out for myself; this was the sort of thing I couldn’t trust reading about on the internet. At the very least, maybe I’d learn some things I could use later. And in that spirit, don’t take my word for it — follow along and run the code yourself.

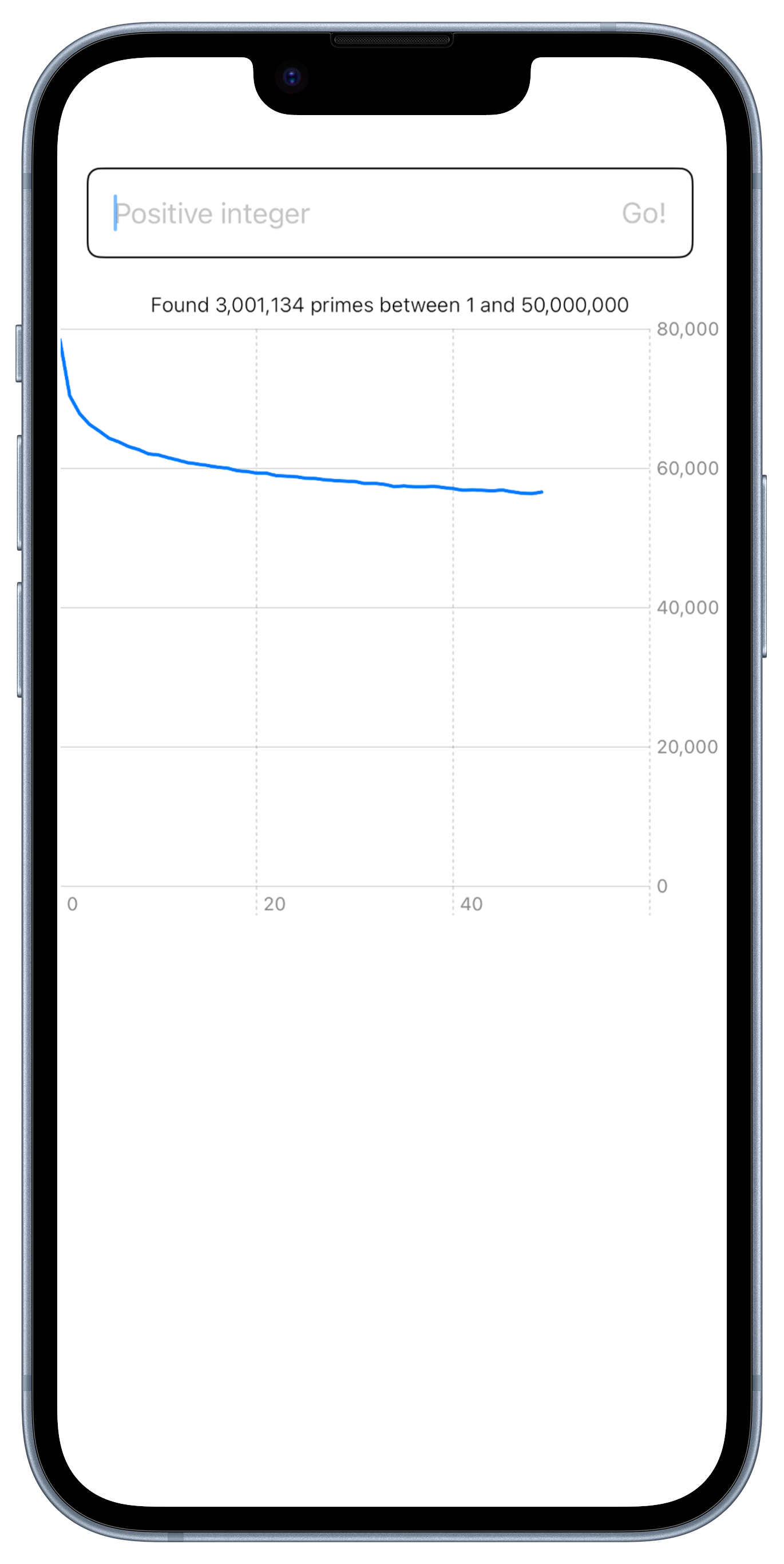

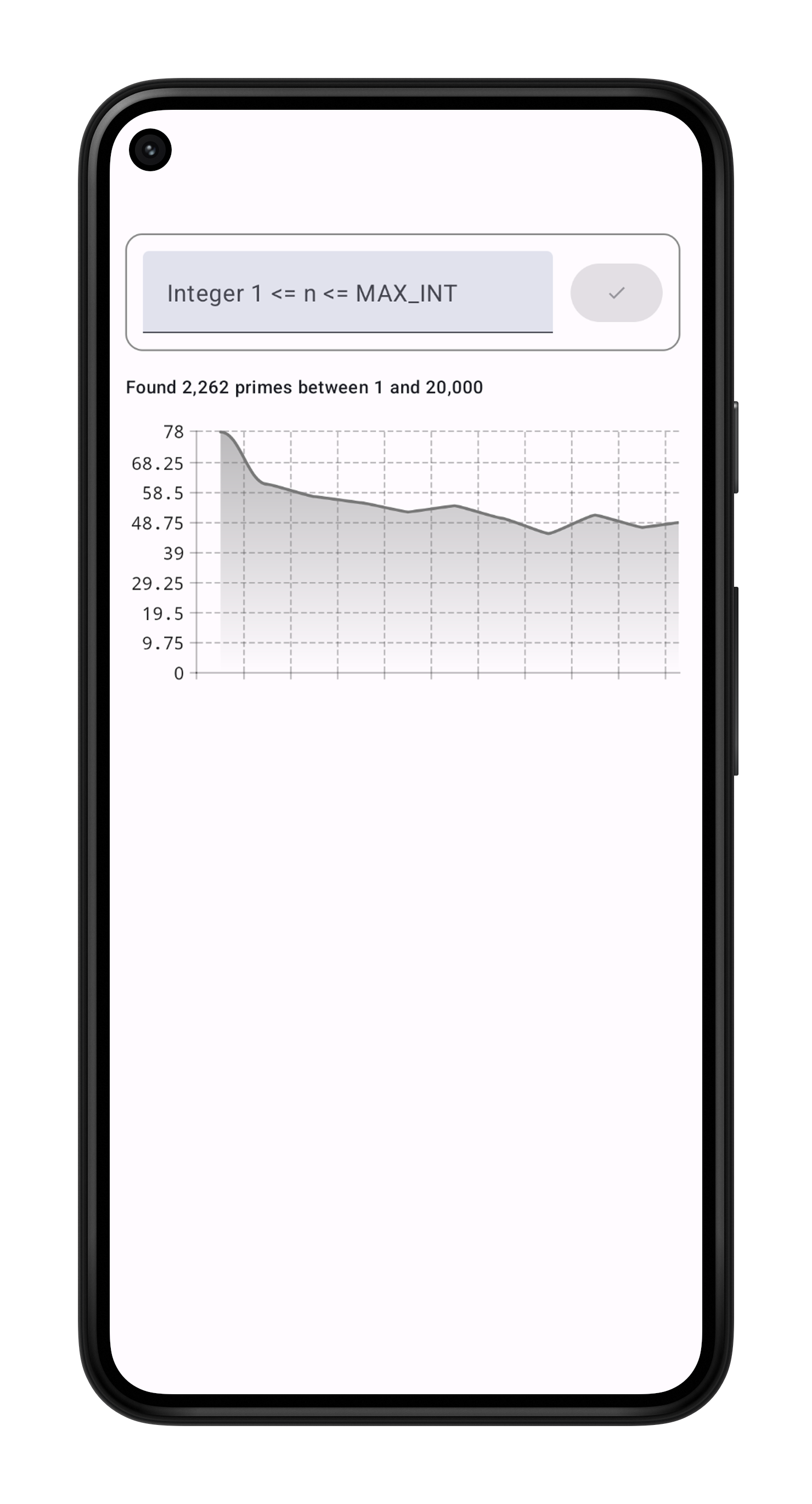

We’re building a prime number–counting Sieve of Eratosthenes app for iOS, Android, and the web. We’re doing the heavy lifting in Rust and compiling it for all three platforms. This should be a performance-intensive enough task to be fun to implement in Rust, without having to learn how to make something wild like a video editor.
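
If the sieve is new to you, here’s a minimal sketch of the basic idea in Rust. This is not the implementation from the repo; the function name `simple_sieve` is made up for illustration, and a tuned version would look quite different.

```rust
/// Returns every prime `p` with `2 <= p <= up_to` using a basic
/// Sieve of Eratosthenes. Illustrative only; not the repo's implementation.
pub fn simple_sieve(up_to: u64) -> Vec<u64> {
    if up_to < 2 {
        return Vec::new();
    }
    let n = up_to as usize;
    // is_composite[i] becomes true once i is known to have a smaller factor.
    let mut is_composite = vec![false; n + 1];
    let mut primes = Vec::new();
    for i in 2..=n {
        if is_composite[i] {
            continue;
        }
        primes.push(i as u64);
        // Start crossing off at i * i; smaller multiples of i were already
        // crossed off by smaller primes.
        let mut multiple = i.saturating_mul(i);
        while multiple <= n {
            is_composite[multiple] = true;
            multiple += i;
        }
    }
    primes
}
```

Calling `simple_sieve(30)` yields the ten primes from 2 through 29.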

Here’s the code so you can join me: daytime-em/rs-hybrid-cross-platform.

The project

The goal of this project was to make something as close to a simple cross-platform hybrid Rust monorepo as I could make. I specifically wanted to answer the following questions for each platform:

- How can I configure Gradle and Xcode to package/link Rust libraries?

- How can I create Rust bindings for the web, Kotlin/Android, and Swift?

- How can I pass complex data structures between a Rust library and an Android/iOS/web app without leaking memory or providing incorrect values?

- How can I invoke a Swift closure, Kotlin lambda, or JavaScript function from Rust as a callback? (For example, how do I inject platform-specific code into a Rust library?)

Each platform app has a UI and a single-task threading mechanism. The apps depend on a common Rust library called rustlib. Each app uses its own set of bindings to communicate with rustlib's API, which has functions that synchronously count primes, with both fast and slow options available.

What I wound up with is a project that uses nothing but the standard tools for Rust and the target environment. The prime number apps I made for this post may only do one silly thing, but the overall project setup seems pretty repeatable, and I think it’d scale well to fit a life-sized project.

My setup meets the needs of a small team or lone developer who wants to take advantage of as much existing automation as possible. Your mileage may vary, especially if you have access to a large team and/or some build engineers.

Rustlib: the cross-platform core

https://github.com/daytime-em/rs-hybrid-cross-platform/tree/main/packages/rustlib

At the center of my overdesigned prime sieve is a Rust library called rustlib with an API based on the facade pattern. The Rust API surface calls into an internal module that does the actual calculation. It’s a lot for an overgrown code example, but in a “real” project, you should approach bindings between Rust and your target platform with the same care you would a public API.
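
To make the facade idea concrete, here’s a rough sketch of how such a library could be laid out. The `PrimesResult` fields match how the bindings later in this post read them, but `find_primes`, the `internal::sieve` helper, and its trial-division body are stand-ins I’m assuming for illustration, not rustlib’s actual code.

```rust
use std::time::{Duration, Instant};

/// Public result type. The fields mirror how the bindings in this post use
/// `rustlib::PrimesResult`; the exact definition is an assumption.
pub struct PrimesResult {
    pub primes: Vec<u64>,
    pub up_to: u64,
    pub exec_time: Duration,
}

impl PrimesResult {
    pub fn primes_count(&self) -> usize {
        self.primes.len()
    }
}

/// Facade (hypothetical name): the only entry point a binding layer needs.
pub fn find_primes(up_to: u64) -> PrimesResult {
    let started = Instant::now();
    let primes = internal::sieve(up_to);
    PrimesResult {
        primes,
        up_to,
        exec_time: started.elapsed(),
    }
}

/// Private module that does the actual work. It can change freely without
/// touching any binding code.
mod internal {
    fn is_prime(n: u64) -> bool {
        n >= 2 && (2..n).take_while(|d| d * d <= n).all(|d| n % d != 0)
    }

    /// Trial division keeps the sketch short; it stands in for a real sieve.
    pub fn sieve(up_to: u64) -> Vec<u64> {
        (2..=up_to).filter(|n| is_prime(*n)).collect()
    }
}
```

One caveat with this sketch: `Instant` isn’t available on the `wasm32-unknown-unknown` target, so a version shared with the web needs to get its time source some other way.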

I say this not only because I write libraries for my job but because binding tools themselves often generate risky code. Pretty much no advanced language feature can fit into the C ABI. As a result, everything from object structure and memory management to closure captures and even strings must be translated. This sounds like a complex process, but binding tools make it very manageable.
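
As a rough illustration of that translation, here’s what a string-appending closure might look like once it has been flattened to the C ABI by hand: a plain function pointer, an opaque context pointer standing in for the captured state, and NUL-terminated C strings in place of `String`. Every name here (`AppendCallback`, `call_append`, `append_suffix`, `demo`) is hypothetical, and the host side is played by Rust so the sketch stays self-contained.

```rust
use std::ffi::{c_char, c_void, CStr, CString};

/// A closure squeezed through the C ABI: a function pointer plus an opaque
/// pointer to whatever the closure captured.
pub type AppendCallback =
    extern "C" fn(context: *mut c_void, input: *const c_char) -> *mut c_char;

/// Translates `&str` to a C string, invokes the callback, and takes
/// ownership of the C string it returns.
///
/// # Safety
/// `context` must be what `callback` expects, and `callback` must return
/// either null or a pointer produced by `CString::into_raw`.
pub unsafe fn call_append(
    start: &str,
    callback: AppendCallback,
    context: *mut c_void,
) -> Option<String> {
    let c_start = CString::new(start).ok()?; // fails on interior NUL bytes
    let returned = callback(context, c_start.as_ptr());
    if returned.is_null() {
        return None;
    }
    // Reclaim the allocation so it is freed by the allocator that made it.
    let owned = unsafe { CString::from_raw(returned) };
    owned.into_string().ok()
}

/// Stand-in for the host side. Its "captured state" is a suffix `String`
/// reached through `context`.
extern "C" fn append_suffix(context: *mut c_void, input: *const c_char) -> *mut c_char {
    // SAFETY: `demo` passes a pointer to a live `String` and a valid C string.
    let suffix = unsafe { &*(context as *const String) };
    let input = unsafe { CStr::from_ptr(input) }.to_string_lossy();
    match CString::new(format!("{}{}", input, suffix)) {
        Ok(joined) => joined.into_raw(),
        Err(_) => std::ptr::null_mut(),
    }
}

/// Wires the two halves together.
pub fn demo() -> Option<String> {
    let suffix = String::from(" world");
    let context = &suffix as *const String as *mut c_void;
    unsafe { call_append("hello", append_suffix, context) }
}
```

Even in a toy like this, both sides have to agree on who allocates and who frees each string, which is exactly the kind of detail the binding tools handle for you.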

The best way to contain the remaining complexity of the bindings is to design their surface carefully. You need to rely on encapsulation to protect each side’s memory and state, and you also need to try to keep performance-intensive inner loops and I/O entirely on one side of the boundary. I’ve tried to show this in my example project, which does all of its CPU-intensive looping inside the Rust library. In the coming sections, I’ll show you what I mean.

Rust for Android

Links: Rust side, Android side

The app

The Android app is a Compose UI application built on top of a JNI layer that, in turn, can talk to the inner Rust library that does the calculation. Unfortunately, there’s no tool like swift-bridge to create Kotlin/Android bindings for Rust, so you’ll have to do a little more setup to get a project that is simple to work on.

Android Studio’s “Native C++” project template is almost ready to integrate a Rust project out of the box. For this solution, all you need to do is add the Corrosion Plugin for CMake and configure it to point to our rustlib's FFI package.

Creating bindings between Rust and Kotlin or Java is inherently more complex than for the other platforms because you have to write two sets of bindings: one between your Rust library and the NDK (via Rust’s FFI) and one between the NDK and the JVM (via JNI). It’s still not bad. You might even enjoy it if you love boilerplate.

Many guides write the JNI and FFI layers in Rust, but I chose to write the JNI side in C++ instead. This might sound silly at first, but I promise it’s a better idea than it sounds.

If you’re willing to give in and use just a little C++ in your bindings, Android Studio will give those bindings fully featured editing, code generation, build automation, code analysis, and declaration jumping between Kotlin and C++, down to the level of the headers of your FFI lib. As far as I know, Android Studio doesn’t have this kind of integration with Cargo.

The JNI bindings themselves use the same “twinning” pattern found in Android SDK classes like MediaCodec or MediaPlayer. Basically, every Java/Kotlin class with a Rust implementation has a counterpart outside of the JVM, which links the JVM code to the underlying Rust library.
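
In my project the twin lives in C++, but the underlying handle idea is language-agnostic. Here’s a minimal Rust sketch of it, assuming the JVM object keeps the handle in a `Long` field: a heap allocation is turned into an integer, later turned back into a reference, and finally freed on release. The names `NativeTwin`, `twin_create`, `twin_prime_count`, and `twin_release` are invented for this example.

```rust
/// Native-side state that a JVM object would be "twinned" with.
pub struct NativeTwin {
    primes: Vec<u64>,
}

fn is_prime(n: u64) -> bool {
    n >= 2 && (2..n).take_while(|d| d * d <= n).all(|d| n % d != 0)
}

/// Allocates the twin and returns it as an integer handle that fits in a
/// JVM `Long`. A real export would also carry `#[no_mangle]`.
pub extern "C" fn twin_create(up_to: u64) -> i64 {
    let twin = Box::new(NativeTwin {
        primes: (2..=up_to).filter(|n| is_prime(*n)).collect(),
    });
    Box::into_raw(twin) as i64
}

/// Looks the twin up by handle without taking ownership of it.
///
/// # Safety
/// `handle` must be 0 or a value from `twin_create` that has not been released.
pub unsafe extern "C" fn twin_prime_count(handle: i64) -> u64 {
    if handle == 0 {
        return 0;
    }
    let twin = unsafe { &*(handle as *const NativeTwin) };
    twin.primes.len() as u64
}

/// Frees the twin. The JVM side should zero its stored handle afterwards.
///
/// # Safety
/// `handle` must be 0 or a value from `twin_create` that has not been released.
pub unsafe extern "C" fn twin_release(handle: i64) {
    if handle != 0 {
        drop(unsafe { Box::from_raw(handle as *mut NativeTwin) });
    }
}
```

The important part is the lifecycle: the JVM object never sees the native memory, only an opaque number, and the native side is the only code that ever dereferences or frees it.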

On the Rust side, you’ll need to encapsulate your inner library’s types and functions in some FFI code. Here, I’m creating a struct that can be used from C and that holds a copy of our Rust lib’s result object. Copying immutable data is generally safer and easier than trying to share it. This is true of bindings for some of the same reasons it’s true when working with threads. For instance, copying guarantees that the memory management from one side of the binding can’t accidentally affect memory on the other side. This comes at a small performance penalty, but the stability and peace of mind you gain are worth it more often than not.

Here’s the binding for rustlib’s PrimesResult:

```rust
use std::mem::ManuallyDrop;

/// C-compatible copy of rustlib's `PrimesResult`.
#[repr(C)]
pub struct FoundPrimesFfi {
    pub exec_time_millis: u64,
    pub primes: *mut u64,
    pub up_to: u64,
    prime_count: usize,
}

#[no_mangle]
pub extern "C" fn free_found_primes(found_primes: FoundPrimesFfi) {
    // Free the vector of primes. The buffer was allocated as a boxed slice,
    // so its length and capacity are the same.
    unsafe {
        let vec = Vec::from_raw_parts(
            found_primes.primes,
            found_primes.prime_count,
            found_primes.prime_count,
        );
        drop(vec);
    }
}

impl FromPrimesResult for FoundPrimesFfi {
    fn from_primes_result(result: &rustlib::PrimesResult) -> Self {
        // Copy the primes so the C side gets a buffer of its own.
        let primes_vec = result.primes.clone();
        // ManuallyDrop keeps Rust from freeing the buffer when this function returns.
        let mut vec_md = ManuallyDrop::new(primes_vec.into_boxed_slice());
        // Whole seconds converted to milliseconds, plus the sub-second part.
        let exec_time_millis =
            result.exec_time.as_secs() * 1000 + (result.exec_time.subsec_millis() as u64);

        FoundPrimesFfi {
            prime_count: result.primes_count(),
            exec_time_millis,
            primes: vec_md.as_mut_ptr(),
            up_to: result.up_to,
        }
    }
}
```

Next, access the FFI bindings via JNI. In this example, I used C++, as I mentioned earlier.

```cpp
extern "C"
JNIEXPORT jlongArray JNICALL
Java_com_example_jnilib_model_FoundPrimes_nativeFoundPrimes(JNIEnv *env, jobject thiz) {
    auto *nativeTwin = (FoundPrimesJni *) JniHelper::nativeTwinOfObject(env, thiz);
    if (nativeTwin) {
        uint64_t *arrayPtr = nativeTwin->getPrimes();
        uint64_t primeCount = nativeTwin->getPrimeCount();
        jlongArray out = env->NewLongArray((jsize) primeCount);
        __android_log_print(
                ANDROID_LOG_DEBUG,
                "FoundPrimes",
                // Cast so the format specifier matches on 32- and 64-bit ABIs.
                "YAY! Found primeCount %llu", (unsigned long long) primeCount
        );
        // Copy the primes out of native memory into the JVM-owned array.
        env->SetLongArrayRegion(
                out,
                0,
                (jsize) primeCount,
                (jlong *) arrayPtr
        );
        return out;
    } else {
        return nullptr;
    }
}
```

And all of that powers this simple-looking Kotlin class.

```kotlin
/**
 * Primes found by the JNI layer. Cannot be instantiated from the JVM, use PrimeSieve
 */
class FoundPrimes private constructor() {
    val primeCount: Int get() = nativePrimeCount()
    val upTo: Long get() = nativeUpTo()
    val foundPrimes: List<Long> get() = nativeFoundPrimes().toList()

    @Suppress("unused") // used from the JNI side
    private var nativeRef: Long = 0

    external fun release()

    private external fun nativePrimeCount(): Int
    private external fun nativeFoundPrimes(): LongArray
    private external fun nativeUpTo(): Long
}
```

Rust for iOS

Links: Rust side, Swift side

The iOS app is a simple SwiftUI view that talks to the inner Rust lib via the swift-bridge plugin for Cargo. swift-bridge automatically generates bindings between Swift and Rust with minimal configuration, and its type-bridging protects you from having to directly touch Rust’s unsafe FFI types.

Like the other projects, the iOS project features full incremental compilation and can pick up changes made in the underlying Rust layer.

Using swift-bridge, you define the surface of your Rust lib’s Swift API, which generates the actual bindings. The generated code will be less than idiomatic, so I’d suggest wrapping the bindings in something that’s a little more Swift-friendly.

I can’t recommend swift-bridge enough if you’re going to do something like this. It greatly reduces the amount of code in the binding layer compared to the Android app, making an integration that’s almost as easy as the JavaScript one.

Look, my prime-finding library’s entire surface is like 50 lines.

```rust
#[swift_bridge::bridge]
mod swift_ffi {
    extern "Rust" {
        type FoundPrimes;

        #[swift_bridge(swift_name = "primeCount")]
        fn get_count(&self) -> usize;
        #[swift_bridge(swift_name = "upToNumber")]
        fn get_up_to(&self) -> u64;
        #[swift_bridge(swift_name = "primesVec")]
        fn get_primes(&self) -> Vec<u64>;
    }

    extern "Rust" {
        type SimplePrimeFinder;

        #[swift_bridge(init)]
        fn new() -> SimplePrimeFinder;

        #[swift_bridge(swift_name = "findPrimesWithSimpleSieve")]
        fn find_primes_slow(&self, #[swift_bridge(label = "upTo")] up_to: u64) -> FoundPrimes;
        #[swift_bridge(swift_name = "findPrimesWithFastSieve")]
        fn find_primes_fast(&self, #[swift_bridge(label = "upTo")] up_to: u64) -> FoundPrimes;
    }

    extern "Rust" {
        // Just playing around
        #[swift_bridge(swift_name = "printViaPrivateDelegate")]
        fn rustlib_just_print();
        #[swift_bridge(swift_name = "printViaRedeclareMethod")]
        fn print_something(); // symbols in modules must be declared with `use as`
        #[swift_bridge(swift_name = "didIAppear")]
        fn try_regenerating_bindings(); // You can't create bindings for functions with implementations

        #[swift_bridge(swift_name = "invokeAClosure")]
        fn append_by_cb(
            #[swift_bridge(label = "startingWith")] start: String,
            callback: StringAppendCallback // Sending Closures to Rust this way is not supported
        ) -> String;
    }

    extern "Swift" {
        // Closures can't currently be sent from Swift, so you need to define a swift type
        // and bind a callback function like this to call callbacks from Swift
        type StringAppendCallback;

        // Note that this name must match the *label* of the swift param, not its name
        fn invoke(self, from: String) -> String;
    }
}
```

Here, I’m wrapping rustlib’s result type in an opaque Swift type that contains a pointer to the vector of found prime numbers. The SimplePrimeFinder class has methods to create instances of FoundPrimes, of which it hands ownership to Swift. The implementation isn’t all that exciting to read, but you can see it here if you’re interested.

When creating bindings using swift-bridge, you have some decisions to make about how your Rust library shares its memory with ARC. In this example, I created an opaque type that owns a private pointer to our Rust result data. When requested, that type can copy the list of found primes out of Rust for the client. Just like the Android client, copying data across the boundary is the easiest way to ensure the memory will be valid. The Rust compiler even knows this and forces you to copy complex objects like our Vec of found primes.
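
To show what that copy-out accessor can look like on the Rust side, here’s a small sketch. The method names match the bridge declarations above, but the struct layout, the `new` constructor, and the method bodies are my assumptions for illustration rather than the code from the repo.

```rust
/// Opaque to the host language: the fields are private, so the data can only
/// be reached through the accessors below.
pub struct FoundPrimes {
    primes: Vec<u64>,
    up_to: u64,
}

impl FoundPrimes {
    /// Hypothetical constructor used only by this sketch.
    pub fn new(primes: Vec<u64>, up_to: u64) -> Self {
        FoundPrimes { primes, up_to }
    }

    pub fn get_count(&self) -> usize {
        self.primes.len()
    }

    pub fn get_up_to(&self) -> u64 {
        self.up_to
    }

    /// Returning an owned `Vec<u64>` from `&self` means the data has to be
    /// cloned; the borrow checker won't let the original be moved out.
    pub fn get_primes(&self) -> Vec<u64> {
        self.primes.clone()
    }
}
```

Because the caller gets its own copy, nothing it does with that list can invalidate the data still owned by the Rust object.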

```swift
calculateTask = Task { [self] in
    let foundPrimes = SimplePrimeFinder().findPrimesWithFastSieve(upTo: UInt64(num))
    print("Found \(foundPrimes.primeCount()) between 1 and \(foundPrimes.upToNumber())")
    let calcRes = CalculationResult(
        foundPrimes: foundPrimes,
        approxDistribution: groupPrimes(
            regions: 50,
            originalUpTo: Int(num),
            primes: foundPrimes.primesVec().copyToSwiftArray()
        )
    )
    Task {
        await MainActor.run {
            self.calculationResult = calcRes
            self.calculateTask = nil
            self.calculating = false
        }
    }
}
```

Once the Rust bindings have been written and implemented, the rest is simple. The symbols defined in the Swift binding can be called directly from Swift. In this project, I wrapped the calls to the Rust library’s symbols inside of a Model object.

Rust for WebAssembly

Links: Rust side, React side

The web app is a React application that loads rustlib as a WebAssembly module. I used wasm-bindgen and wasm-pack to generate a surface that can be invoked from JavaScript or TypeScript. wasm-bindgen’s generated bindings are very usable as-is, but I still wrapped them to contain the logic that loads the WebAssembly module.

The final result is a three-package setup. At the lowest level I have the Rust library, next another Rust library to provide JavaScript bindings, and at the highest level a web app based on create-react-app. The React app depends on the bindings module, and it can invoke the prime sieve and show a UI. The only special configuration I had to do was add CRACO to my React app to configure Webpack to bundle my WebAssembly.

Of all the binding tools available, I found the WebAssembly Rust binding tools to be the simplest to use. They produce pretty good JavaScript APIs, and wasm-bindgen has many implicit behaviors that enable and optimize sharing memory between the JavaScript runtime and the Rust allocator.

In order to communicate with the JavaScript world, you do have to map your Rust types and functions to a JavaScript module. The wasm-bindgen tool can generate the module for you from a properly annotated Rust crate. For example, here’s the JS binding for one of my Rust functions.

```rust
use js_sys::{Array, Object, Reflect};
use std::ops::Deref;
use wasm_bindgen::prelude::*;

// ====== Functions we provide to JS
#[wasm_bindgen(js_name = fastFindPrimes)]
pub fn fast_find_primes(up_to: Option<i32>) -> JsValue {
    let expected_up_to =
        up_to.unwrap_or_else(|| panic!("An integer up to {} must be provided.", i32::MAX));
    let internal_result = internal::fast_sieve_clock(
        expected_up_to as u64,
        &WasmClock::new() // remember, not all normal std libraries are available in wasm.
    );

    // Build a plain JS object to hold the result.
    let js_obj = Object::new();
    let ret = js_obj.deref();
    Reflect::set(
        ret,
        &JsValue::from_str("execTimeSecs"),
        &JsValue::from_f64(internal_result.exec_time.as_secs_f64()),
    )
    .unwrap();
    Reflect::set(
        ret,
        &JsValue::from_str("primeCount"),
        &JsValue::from_f64(internal_result.primes.len() as f64)
    )
    .unwrap();

    // Copy each prime into a JS array of numbers.
    let result_arr = Array::new();
    for prime in internal_result.primes.iter() {
        result_arr.push(&JsValue::from_f64(*prime as f64));
    }
    Reflect::set(
        ret,
        &JsValue::from_str("foundPrimes"),
        &JsValue::from(result_arr),
    )
    .unwrap();

    ret.clone()
}
```

wasm-bindgen can also generate classes, duck-typed interfaces, and TypeScript types for your web API, but I didn’t use any of that for this example.

This interface can be called directly from your web app. I recommend wrapping access to your WebAssembly library, as the code is not one-hundred percent idiomatic. It’s pretty darn close though. For example, here’s the call site for our prime-counting library.

```typescript
/**
 * Nice return type to wrap the JSValue we got from rust.
 * wasm-bindgen can generate things like this, but they
 * come with some small strings attached. I'm adding the
 * type here because it's simpler
 */
export type PrimesResult = {
  primeCount: number;
  foundPrimes: number[];
  approxDensities: number[];
  requestedUpTo: number;
};

export async function calculate(n: number): Promise<PrimesResult | undefined> {
  try {
    const wasm = await importWasm();
    console.log("calculate(): Imported wasm module obj", wasm);

    const result = wasm.fastFindPrimes(n);
    console.log("Calculated some primes", result);
    return {
      primeCount: result.primeCount,
      foundPrimes: result.foundPrimes,
      requestedUpTo: n,
      approxDensities: groupPrimes({
        regions: 50,
        upTo: n,
        primes: result.foundPrimes,
      }),
    };
  } catch (e) {
    console.error("failed to import wasm ", e);
    return undefined;
  }
}
```

Of the three platforms, the wasm binding tools are definitely the easiest to set up and use.

Conclusion

I was expecting to love Rust a little bit, but I was shocked at the quality of the tools available for incorporating Rust into your apps. In all three cases, it’s just a simple matter of configuring your tools and writing some boilerplate. As scary as it may sound to reach outside the comfort zone of your favorite language, you might find that Rust’s vibrant open-source community has already solved the worst of it for you.

Tools like wasm-bindgen and swift-bridge take care of all the difficult and delicate memory management normally associated with cross-language solutions. If you have a good understanding of Rust and of your target language, then you already have all the knowledge you need to start using Rust to accelerate your apps. Happy coding!