Mux makes adding video to your app or website as easy as making a single API call. But behind the scenes is a large multistep process to analyze and transform the video into something that can be easily consumed by a device. This process is commonly called a media “pipeline”.

The pipeline is a series of data analysis or transformations performed on each frame of audio or video. At each stage, a frame is processed then given to the next stage to perform the next operation. A simplified pipeline may look like this:

Each one of these steps is a large topic on its own, but today we’re going to look at one of the more overlooked parts of this pipeline: packaging.

The packager’s main job is to take the output of the audio and video encoders and insert timing and signaling information needed to play back the media at the correct rate while keeping audio and video synchronised. The end result is a file or stream that is packaged into a “container” that can be consumed by a player, for example an mp4 file or HLS stream.

Apple added support for fragmented mp4 files in HLS a few years ago, but not all devices have caught up to this change, so the majority of streams still use the older transport stream (often called TS) format. TS may seem like an obscure format, but for people in the broadcast or cable TV world it is ubiquitous. This ubiquity meant that there was a lot of support for TS in hardware decoders, and I speculate that’s likely why Apple chose TS for HLS in the first iPhone generations and why it's still very common today.

TS has some strange properties. It was built for a pre-Ethernet world and includes features such as detection of lost and out-of-order packets and remote time synchronization. These features are required for digital over-the-air broadcasts, but on the internet this is usually handled via TCP and high-precision clocks in every device. TS also uses a fixed packet size of 188 bytes, with each packet starting with a sync byte that identifies the start of the packet. Again, this is great when joining a multicast stream at a random location, like when changing the TV channel, but not necessary when video is saved as a file and pulled over HTTP, as is the case for HLS. The downside is that these features still take up space in the file even when they are not used. For files with high bitrates this is not an issue, but in low-bandwidth environments the overhead can be significant. This is unfortunate, as a constrained-bandwidth environment is precisely where bits are most in demand.
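To make the role of the sync byte concrete, here is a minimal Python sketch of how a receiver that joins a stream at an arbitrary byte could find a packet boundary. The function name `find_sync_offset` and the choice to check three consecutive packets are assumptions made for illustration; real demuxers use their own heuristics.

```python
TS_PACKET_SIZE = 188
SYNC_BYTE = 0x47  # every TS packet starts with this value


def find_sync_offset(data: bytes, packets_to_check: int = 3) -> int:
    """Return the first offset where a sync byte repeats every 188 bytes, or -1.

    A single 0x47 can also appear inside a payload, so the sketch requires
    the pattern to hold for several consecutive packets (an assumed heuristic).
    """
    needed = TS_PACKET_SIZE * (packets_to_check - 1) + 1
    for offset in range(len(data) - needed + 1):
        if all(
            data[offset + i * TS_PACKET_SIZE] == SYNC_BYTE
            for i in range(packets_to_check)
        ):
            return offset
    return -1


# Synthetic stream: four packets with zeroed headers and payloads.
packet = bytes([SYNC_BYTE]) + bytes(TS_PACKET_SIZE - 1)
stream = packet * 4

# Join the stream 50 bytes into the first packet, as a tuner might.
joined_mid_stream = stream[50:]
print(find_sync_offset(joined_mid_stream))  # 138, i.e. 188 - 50
```

For HLS none of this is needed, since a segment fetched over HTTP always starts on a packet boundary, yet the sync byte is still paid for in every packet.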

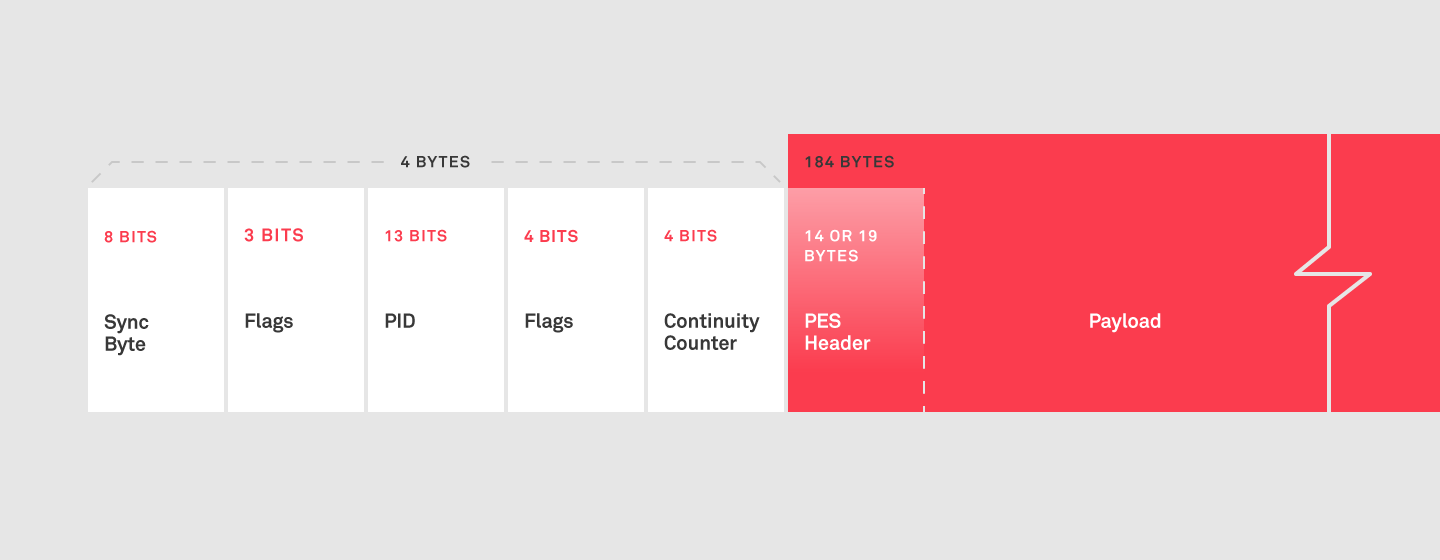

Each 188-byte TS packet has a 4-byte header. This header contains the sync byte, some flag bits, a packet ID (or PID, which identifies a unique audio or video stream), and a continuity counter (used to identify missing or out-of-order packets). Every frame then has a prepended Packetised Elementary Stream (PES) header. The PES header is a minimum of 14 bytes (or 19 bytes if the frame decode time does not match the presentation time, as happens with B-frames) and encodes the frame timestamps, among other things. Therefore, the first packet of a frame has at most 170 bytes available for media, while subsequent packets have 184 bytes available. If a frame is smaller than 170 bytes, it must be padded to fill the packet. If a frame is 171 bytes, a second packet is required, so 376 bytes (2 × 188) are needed to transmit 171 bytes of payload, more than doubling the required bandwidth. In reality, frames under 170 bytes are not very common. However, at bitrates under 1 Mbps, an overhead of 10% or more is not uncommon.
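The arithmetic above can be sketched in a few lines of Python. This is a simplified model, not a real muxer: it assumes one frame per PES header, no adaptation-field data such as clock references, and it defines overhead as the share of transmitted bytes that is not media. The function names are made up for this example.

```python
import math

TS_PACKET_SIZE = 188
TS_HEADER_SIZE = 4
PES_HEADER_SIZE = 14  # minimum from the text; 19 when decode and presentation times differ


def packets_for_frame(frame_size: int, pes_header_size: int = PES_HEADER_SIZE) -> int:
    """Number of TS packets needed to carry one frame plus its PES header."""
    payload_per_packet = TS_PACKET_SIZE - TS_HEADER_SIZE  # 184 bytes
    return math.ceil((pes_header_size + frame_size) / payload_per_packet)


def overhead_fraction(frame_size: int, pes_header_size: int = PES_HEADER_SIZE) -> float:
    """Share of the transmitted bytes that is headers or padding rather than media."""
    wire_bytes = packets_for_frame(frame_size, pes_header_size) * TS_PACKET_SIZE
    return (wire_bytes - frame_size) / wire_bytes


print(packets_for_frame(170))                    # 1
print(packets_for_frame(171))                    # 2
print(packets_for_frame(171) * TS_PACKET_SIZE)   # 376
print(f"{overhead_fraction(171):.1%}")
```

Under this model a 170-byte frame fits exactly in one packet, while a single extra byte forces a second, mostly empty packet.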

A real-world example

We took our favorite test video not featuring an anthropomorphized leporidae and encoded it to HLS via FFmpeg using the following command:

ffmpeg -i tears_of_steel_720p.mp4 -vcodec libx264 -preset faster -x264opts keyint=120:min-keyint=120:scenecut=-1 -b:v 500k -b:a 96k -hls_playlist_type vod ffmpeg/ffmpeg.m3u8

The final combined stream size was 58196152 bytes with a packaging overhead of 6.24%. Not that bad really, considering 2.13% (4/188, the share of each packet taken by its header) is the theoretical minimum, discounting PES headers and padding.

But can we do better? If so, the bits saved could then be used to improve the quality of the video and/or improve user experience by reducing buffering. The first step in improving anything is measuring it. Registered Apple developers have access to the HTTP Live Streaming Tools. These tools have two issues: they are macOS only, and it seems recent versions no longer display the packaging overhead. To resolve this, we have open sourced our muxincstreamvalidator tool. At the time of this writing, the tool only reports packaging overhead, but it may be expanded later to include more functionality. This was the tool used to measure the overhead from FFmpeg above.

To reduce overhead we can take advantage of a few properties of the encoded media bitstreams. Most audio codecs encode using a fixed sample rate and sample count per frame. AAC audio always uses 1024 samples per frame, so at 48 kHz every frame lasts 21 ⅓ milliseconds. Because frame durations can be determined by the decoder without timestamps from the PES header, we can pack more than one audio frame per PES header, thus reducing the PES overhead and minimizing the padding necessary in the final TS packet for the frame.

Video frames, however, do not have a derivable timestamp, so packing will not work. MPEG video codecs do contain a specific bit sequence called a start code that can be used to identify the first byte of each frame. Therefore, a decoder does not need the container to signal the exact position in the stream where each frame starts. When the final payload of a frame is less than 184 bytes and would require padding, we can truncate those extra bytes, thus requiring zero padding, and carry the bytes forward into the next frame. Unfortunately, there is still nothing we can do for video frames under 170 bytes.
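The audio packing idea can be illustrated with a small Python sketch. It is only a model: the 256-byte frame size is a hypothetical figure (roughly what 96 kbps AAC at 48 kHz works out to), the helper names are invented here, and the calculation ignores any adaptation-field bytes. The 90 kHz tick rate is the standard clock used for PES timestamps.

```python
import math
from fractions import Fraction

AAC_SAMPLES_PER_FRAME = 1024
PES_CLOCK_HZ = 90_000  # PES timestamps are expressed in 90 kHz ticks


def aac_frame_duration_ms(sample_rate_hz: int) -> Fraction:
    """Exact duration of one AAC frame in milliseconds."""
    return Fraction(AAC_SAMPLES_PER_FRAME * 1000, sample_rate_hz)


def derived_pts(pes_pts: int, frame_index: int, sample_rate_hz: int) -> int:
    """Timestamp of the Nth frame inside a PES packet, derived from the first one."""
    ticks_per_frame = AAC_SAMPLES_PER_FRAME * PES_CLOCK_HZ // sample_rate_hz
    return pes_pts + frame_index * ticks_per_frame


def ts_bytes_for_audio(frame_sizes, frames_per_pes, pes_header_size=14):
    """Total TS bytes when `frames_per_pes` audio frames share one PES header."""
    total_packets = 0
    for start in range(0, len(frame_sizes), frames_per_pes):
        group = frame_sizes[start:start + frames_per_pes]
        total_packets += math.ceil((pes_header_size + sum(group)) / 184)
    return total_packets * 188


frames = [256] * 8  # hypothetical frame sizes in bytes

print(aac_frame_duration_ms(48_000))   # 64/3, i.e. 21 1/3 ms
print(derived_pts(0, 3, 48_000))       # 5760 ticks for the fourth frame
print(ts_bytes_for_audio(frames, 1))   # 3008 bytes, one frame per PES
print(ts_bytes_for_audio(frames, 8))   # 2256 bytes, eight frames per PES
```

In this toy case, packing eight frames behind one PES header removes seven headers and most of the per-frame padding, which is where the savings come from.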

Mux's transcoder uses these techniques (and a few others) to minimize overhead. We ingested the same tears_of_steel_720p.mp4 video into Mux and measured the overhead with muxincstreamvalidator.

PID 0: SID 00, size 27636, overhead 100.00%

PID 256: SID e0, size 45831204, overhead 2.96%

PID 257: SID c0, size 9443616, overhead 4.51%

PID 4096: SID 00, size 27636, overhead 100.00%

-------------------------------------------------------------

TOTALS: size 55330092, overhead 3.32%

The transport stream contains 4 PIDs. PID 0 is always the Program Association Table (PAT). Among other things, it encodes the PID of the Program Map Table (PMT), which is 4096 in this case. The PMT then encodes the PIDs of the audio (257) and video (256) streams. The PAT and PMT are 100% overhead because they contain no media, just metadata. The final stream was 55330092 bytes with 3.32% overhead, much closer to the 2.13% theoretical minimum.
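As a rough illustration of how a player gets from PID 0 to the PMT, the following Python sketch parses the 4-byte TS header and a single-packet PAT. The packet is synthetic and hand-built for this example, with a placeholder CRC that the sketch does not verify; the function names are assumptions, and a real parser would also handle adaptation fields and multi-packet sections.

```python
TS_PACKET_SIZE = 188


def parse_ts_header(packet: bytes) -> dict:
    """Decode the fields of the 4-byte TS header that the text mentions."""
    if len(packet) != TS_PACKET_SIZE or packet[0] != 0x47:
        raise ValueError("not a 188-byte TS packet starting with a sync byte")
    return {
        "payload_unit_start": bool(packet[1] & 0x40),
        "pid": ((packet[1] & 0x1F) << 8) | packet[2],  # 13-bit packet ID
        "has_adaptation_field": bool(packet[3] & 0x20),
        "continuity_counter": packet[3] & 0x0F,
    }


def parse_pat(packet: bytes) -> dict:
    """Return {program_number: pmt_pid} from a simple single-packet PAT."""
    header = parse_ts_header(packet)
    if header["pid"] != 0 or not header["payload_unit_start"]:
        raise ValueError("packet does not start a PAT section")
    if header["has_adaptation_field"]:
        raise ValueError("adaptation fields are not handled in this sketch")
    pointer = packet[4]                 # bytes to skip before the section
    section = packet[5 + pointer:]
    if section[0] != 0x00:
        raise ValueError("table_id is not 0x00 (PAT)")
    section_length = ((section[1] & 0x0F) << 8) | section[2]
    # Program entries follow the 8-byte section header and precede the 4-byte CRC.
    entries = section[8:3 + section_length - 4]
    programs = {}
    for i in range(0, len(entries), 4):
        program_number = (entries[i] << 8) | entries[i + 1]
        pid = ((entries[i + 2] & 0x1F) << 8) | entries[i + 3]
        if program_number != 0:         # program 0 points at the network table
            programs[program_number] = pid
    return programs


# Synthetic PAT announcing program 1 with its PMT on PID 4096 (0x1000).
pat = bytes([
    0x47, 0x40, 0x00, 0x10,            # TS header: PID 0, payload unit start
    0x00,                              # pointer field
    0x00, 0xB0, 0x0D,                  # table_id 0, section_length 13
    0x00, 0x01, 0xC1, 0x00, 0x00,      # stream id, version, section numbers
    0x00, 0x01, 0xF0, 0x00,            # program 1 -> PID 0x1000
    0x00, 0x00, 0x00, 0x00,            # CRC placeholder (not checked here)
])
pat += b"\xff" * (TS_PACKET_SIZE - len(pat))  # stuffing bytes

print(parse_pat(pat))  # {1: 4096}
```

Note how little of the 188-byte packet carries information: 21 bytes of table data followed by 167 bytes of stuffing, which is why the PAT and PMT show up as pure overhead.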

To make sure this was an apples-to-apples comparison, we remuxed the Mux encoded stream using FFmpeg and measured the result.

ffmpeg -i ./mux/manifest.m3u8 -codec copy -hls_playlist_type vod remux/remux.m3u8

PID 0: SID 00, size 27636, overhead 100.00%

PID 17: SID 00, size 27636, overhead 100.00%

PID 256: SID e0, size 47416420, overhead 6.20%

PID 257: SID c0, size 9544008, overhead 5.59%

PID 4096: SID 00, size 27636, overhead 100.00%

-------------------------------------------------------------

TOTALS: size 57043336, overhead 6.24%

FFmpeg adds an extra "Service" PID (17), but otherwise the stream looks similar, except for the additional 1713244 bytes of overhead.
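The two reports can be cross-checked with a short Python sketch that parses the per-PID lines and compares the totals. The regular expression is an assumption based only on the output format shown in this post, not on any documented format of the tool.

```python
import re

MUX_REPORT = """
PID 0: SID 00, size 27636, overhead 100.00%
PID 256: SID e0, size 45831204, overhead 2.96%
PID 257: SID c0, size 9443616, overhead 4.51%
PID 4096: SID 00, size 27636, overhead 100.00%
"""

FFMPEG_REMUX_REPORT = """
PID 0: SID 00, size 27636, overhead 100.00%
PID 17: SID 00, size 27636, overhead 100.00%
PID 256: SID e0, size 47416420, overhead 6.20%
PID 257: SID c0, size 9544008, overhead 5.59%
PID 4096: SID 00, size 27636, overhead 100.00%
"""

# Assumed line format, inferred from the output above.
REPORT_LINE = re.compile(r"PID (\d+): SID [0-9a-f]{2}, size (\d+), overhead [\d.]+%")


def pid_sizes(report: str) -> dict:
    """Map each PID in a report to its size in bytes."""
    return {int(m.group(1)): int(m.group(2)) for m in REPORT_LINE.finditer(report)}


mux_total = sum(pid_sizes(MUX_REPORT).values())
remux_total = sum(pid_sizes(FFMPEG_REMUX_REPORT).values())
saved = remux_total - mux_total

print(mux_total)    # 55330092
print(remux_total)  # 57043336
print(saved)        # 1713244
print(f"{saved / remux_total:.2%} of the remuxed stream")
```

The same media therefore costs roughly 3% fewer bytes on the wire purely because of how it is packaged.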

We attempted an experiment in which we increased the bitrate by the percentage saved, to produce files of a similar size to those from the less efficient packager but with improved video quality. The resulting VMAF gains were below the 3-point threshold that is considered a visible improvement. Hence, using the savings to improve QoS for marginal users seems to be the better option.

If you would like to perform these tests on your own, all the files have been posted to Mux's public GitHub.