Growing up in England, I’ll openly admit that American Football isn’t my first choice of sport. However, by anyone's standards, American Football is huge. Last year Amazon started live streaming Thursday Night Football on its Prime Video platform. A couple of months ago, they announced that they had extended this agreement for another two years.

Recently Amazon started competing with itself for viewers by hosting Thursday Night Football on both Amazon Prime Video and Twitch (Reminder: Amazon acquired Twitch in 2014 for around $970M). As far as I can tell, this is the first time a major (non-esports) sporting event has been hosted on Twitch.

My colleagues in the Mux San Francisco office are obsessed with Fantasy Football, so when I found myself watching Thursday night football with the team I figured, “Hey, what’s the stack behind this?” and “Is the Twitch Stream just the same Amazon Prime stream playing in the Twitch player?”

So let’s dig into some sportsball streaming architecture!

Twitch (Left) vs Amazon Prime Video (Right)

Author’s Note: This is a pretty long read - if you want to skip to the summary, you can do so here.

How?

Working out the technology stack behind a streaming service isn’t actually that hard, especially after years of working in the industry debugging bizarre legacy customer setups while aiding their transitions to new systems. Everything I do here you can do yourself; all you need is a browser, curl, the Bento4 toolkit, and a good working knowledge of internet video.

Amazon Prime - Streaming Stack Teardown

We’ll focus mainly on the strategy for desktop browsers since it’s the easiest platform to debug. So let’s get stuck in, load up the Amazon Prime player in Chrome, and fire up the network inspector.

What are we looking for? Let’s start by assuming that Amazon and Twitch are using well established streaming technologies such as HLS or MPEG DASH. Both these technologies rely on a text file referred to as a “manifest” to describe the video presentation and to let the browser know where to fetch the segments of the video from for playback.

For HLS we’d usually be looking for .m3u8 files in the network inspector and for DASH, we’d be looking for a .mpd request, or sometimes, just an .xml request. In this case we’re lucky, it seems that Amazon Prime is using MPEG DASH with the more traditional .mpd file extension for their stream.

If we filter the requests to .mpd and watch the stream for a while, we notice that the manifest is re-requested every few seconds. This is so that the player can know when the latest chunks of content are available and where to get them from. We can learn a lot about Amazon Prime’s video delivery environment by looking at the manifest. Let’s take a look at the (slightly shortened) manifest below.

Packaging: MPEG DASH, H.264 encoded in 2 second fMP4 Fragments

If we take a look at some of the representations (aka renditions) in the manifest, we see the codecs and media packaging technologies that Amazon is using. We can check the “codecs” string to understand what codecs are being used and the “mimeType” to check the packaging approach. The codec string actually contains quite a lot of information encoded as an RFC 6381 string, including exactly which profile of H.264 is being used. It’s very useful to transfer this information in the manifest because it allows you to use APIs to make sure this particular version of the codec is decodable on a device. Amazon is using the common combination of H.264 for video and AAC for audio. Amazon uses 9 video renditions for their desktop player, ranging from 288p up to 720p30 @ 8 Mbit/s. They also expose 1 demuxed audio rendition in 4 different languages.
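To make the codec string a little less opaque, here is a minimal Python sketch of how an H.264 RFC 6381 string breaks down into profile, constraint flags, and level. The example value `avc1.64001f` is an assumption for illustration, not a value copied from Amazon's manifest, and the profile table only covers a few common profiles.

```python
# Names for a few common H.264 profile_idc values (not exhaustive).
H264_PROFILES = {66: "Baseline", 77: "Main", 88: "Extended", 100: "High"}


def parse_avc_codec(codec: str) -> dict:
    """Decode an RFC 6381 'avc1.PPCCLL' string (three hex bytes after the dot)."""
    sample_entry, _, hex_part = codec.partition(".")
    if sample_entry not in ("avc1", "avc3") or len(hex_part) != 6:
        raise ValueError(f"not an H.264 codec string: {codec!r}")
    profile_idc = int(hex_part[0:2], 16)   # PP: profile
    constraints = int(hex_part[2:4], 16)   # CC: constraint flag bits
    level_idc = int(hex_part[4:6], 16)     # LL: level multiplied by 10
    return {
        "profile": H264_PROFILES.get(profile_idc, f"profile_idc {profile_idc}"),
        "constraint_flags": constraints,
        "level": level_idc / 10,
    }


# Hypothetical codec string, used only to show the decoding.
print(parse_avc_codec("avc1.64001f"))
```

This is the same information a player hands to the browser when it asks whether a given rendition is decodable on the device.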

Checking the SegmentTemplate in the manifest we can see that the fragments are being served with a .mp4 file extension (common, but some people choose to deliver .m4f for their fragment extensions instead). If we change our filter to look for “.mp4” while we’re watching content we’ll see segment requests happening every few seconds. Amazon is also delivering audio and video segments separately (demuxed).

We can also use the SegmentTemplate to calculate how long the segments of video are. Starting by looking at a video Representation we see the frameRate is set to "30/1". Next we can see the Timescale of the Representation is “30”. When we couple this with the declared duration of each segment in the SegmentTimeline (d=”60”), we can work out that each segment contains 60 frames @ 30 FPS, so 2 seconds of content. Segment length is important when you’re streaming live video because it heavily affects the latency of your end-to-end delivery. Two seconds is realistically the lowest segment duration feasible without starting to adversely impact encoder performance, and end user buffering experience.
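The arithmetic above is simple enough to sketch in a few lines of Python. The function names are my own; the inputs are the timescale, segment duration, and frameRate values quoted from the manifest.

```python
from fractions import Fraction


def segment_seconds(d: int, timescale: int) -> Fraction:
    """Segment duration in seconds: d ticks divided by ticks-per-second."""
    return Fraction(d, timescale)


def frames_per_segment(d: int, timescale: int, frame_rate: str) -> Fraction:
    """Number of frames in one segment, given a DASH frameRate such as '30/1'."""
    numerator, _, denominator = frame_rate.partition("/")
    fps = Fraction(int(numerator), int(denominator or 1))
    return segment_seconds(d, timescale) * fps


# Values from the manifest: d="60", timescale 30, frameRate "30/1".
print(segment_seconds(60, 30), "seconds,", frames_per_segment(60, 30, "30/1"), "frames")
```

Using `Fraction` rather than floats keeps the result exact when the frame rate is something like 30000/1001.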

Ad Insertion: Multiple DASH Periods

The top level elements we see in the DASH Manifest are Periods. Scrolling down through the manifest we see that there are several top level period entities. Multi-Period MPEG DASH is a way of achieving advertisement insertion in live video streams. In this case we see long periods of content followed by multiple, shorter periods which contain adverts.
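If you want to list the Periods yourself rather than scrolling through the XML, a rough sketch looks like this. The sample manifest is hypothetical and heavily trimmed: the Period ids and start times are invented for illustration, and only the DASH namespace is real.

```python
import xml.etree.ElementTree as ET

DASH_NS = {"mpd": "urn:mpeg:dash:schema:mpd:2011"}

# Hypothetical manifest: ids and start times are invented for illustration.
SAMPLE_MPD = """<?xml version="1.0"?>
<MPD xmlns="urn:mpeg:dash:schema:mpd:2011" type="dynamic">
  <Period id="content-1" start="PT0S"/>
  <Period id="ad-1" start="PT600S"/>
  <Period id="ad-2" start="PT630S"/>
  <Period id="content-2" start="PT660S"/>
</MPD>"""


def list_periods(mpd_text: str) -> list:
    """Return (id, start) for every top-level Period in the manifest."""
    root = ET.fromstring(mpd_text)
    return [(p.get("id"), p.get("start")) for p in root.findall("mpd:Period", DASH_NS)]


for period_id, start in list_periods(SAMPLE_MPD):
    print(period_id, start)
```

A long content Period followed by a run of short Periods is the pattern to look for when spotting an ad break.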

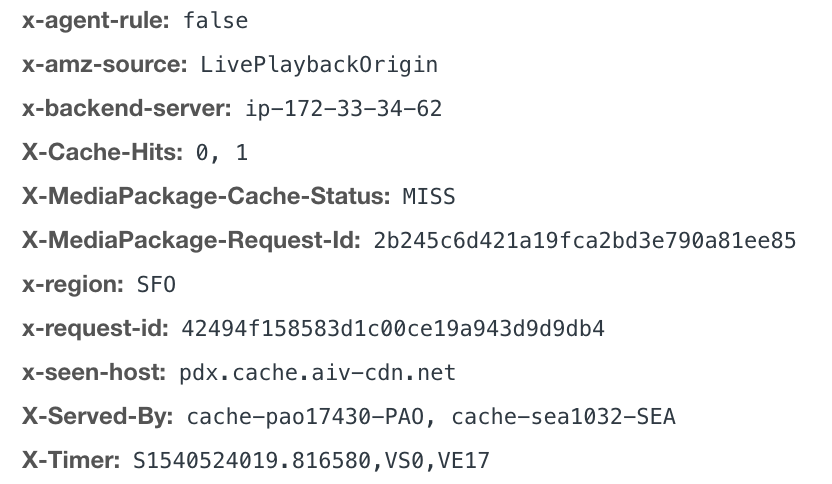

There’s more we can learn from the HTTP requests we looked at earlier, specifically let’s look at the X-headers on the Segment responses.

What’s interesting here are the X-MediaPackage headers: they’re a dead giveaway that Amazon is using AWS’s Elemental MediaPackage product. We can look at the headers again on the manifest requests to see that the manifests are being served by AWS’s Elemental MediaTailor product. MediaTailor is a manifest-manipulation based Server Side Ad Insertion (SSAI) solution, so we now know how Amazon is performing ad-replacement (and probably ad-targeting) for Thursday Night Football.
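Once you've copied the response headers out of the network inspector, picking out vendor fingerprints is a one-liner. This is a small sketch; the header names and values in the sample are hypothetical stand-ins, not headers captured from the stream.

```python
def vendor_headers(headers: dict, prefix: str = "x-mediapackage") -> dict:
    """Return only the headers whose name starts with the given prefix (case-insensitive)."""
    return {k: v for k, v in headers.items() if k.lower().startswith(prefix.lower())}


# Hypothetical response headers for a segment request.
sample_headers = {
    "Content-Type": "video/mp4",
    "Cache-Control": "max-age=3600",
    "X-MediaPackage-Request-Id": "example-request-id",
}

print(vendor_headers(sample_headers))
```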

DRM: CENC Encryption

From the manifest we can also see how Amazon is protecting its content - we can see two distinct ContentProtection blocks nested within the Representations. The ContentProtection blocks define the different methods available to the client to decrypt the content.

The two UUIDs above are well known in the industry - they tell us that Amazon is using the common combination of Widevine (edef8ba9) and PlayReady (9a04f079). This gives them fairly comprehensive coverage across common desktop and mobile platforms, as well as most popular OTT devices.

CDN Delivery: Akamai and CloudFront

Looking at Amazon’s manifest we can see that the BaseURL for media segments doesn’t have a URL scheme at the start of it (it’s a relative URL). This means that the video segments are being served through the same CDN infrastructure as the manifest. Going back to our original filter to find the manifest file we see that the hostname for the manifest (and thus, segments) was https://aivottevtad-a.akamaihd.net. akamaihd.net is an Akamai owned edge hostname, which lets us know that, for this view at least, Amazon is using Akamai to deliver their video segments to end users.
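The way a player resolves a relative BaseURL against the manifest URL can be reproduced with the standard library. In this sketch the hostname is the one observed above, but the manifest path and the BaseURL values are hypothetical.

```python
from urllib.parse import urljoin, urlsplit


def segment_host(manifest_url: str, base_url: str) -> str:
    """Resolve a DASH BaseURL against the manifest URL and return the resulting hostname."""
    return urlsplit(urljoin(manifest_url, base_url)).hostname


# The path below is a hypothetical placeholder; only the hostname was observed.
manifest_url = "https://aivottevtad-a.akamaihd.net/example/live/manifest.mpd"

# A relative BaseURL inherits the manifest's host...
print(segment_host(manifest_url, "video/"))
# ...whereas an absolute BaseURL (hypothetical here) could point at a different CDN.
print(segment_host(manifest_url, "https://cdn.example.com/video/"))
```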

Now, this is interesting because I would have expected to see AWS CloudFront as the primary media CDN here. It’s well known in the industry that AWS is pushing CloudFront very heavily in the media sector, particularly in the USA where it’s best connected when compared against the worldwide offering. I did check around with a few other people watching the same streams and did spot at least one stream which was being served from CloudFront rather than Akamai. It's likely that there are also more CDNs in the mix that we haven’t spotted yet. During the US Open I did also see that Amazon Prime was using Limelight to deliver segments in the UK.

It would make sense given Amazon Prime Video’s scale and maturity that they would be using multiple CDNs to provide some level of redundancy. However, it's worth noting that given their current strategy of using relative URLs within their manifests, and not using any form of DNS indirection on top of their edge hostnames, mid-stream CDN switching to maintain QoS wouldn’t be possible in the current architecture (or at least would require fairly messy player modifications). It’ll be interesting to keep an eye on their approach here and see if they choose to adopt mid-stream CDN switching, and if so, whether they buy off the shelf or build their own solution.

Video Encoder: AWS Elemental MediaLive

So far we’ve verified that Amazon is leveraging a good amount of their own AWS Elemental based software solutions here. We’ve checked their packaging and ad-insertion technology, but don’t know anything about the encoder (let's face it, I’d be shocked if it wasn’t Elemental). This is a little harder to fingerprint but there is one easy thing we can do to get some hints.

For MPEG-DASH streaming an initialization segment is used for each video or audio rendition to set up the decoder on the client side. We can see the URLs of these mp4 segments in the DASH manifest under the SegmentTemplate initialization attribute. We can download one of the initialization segments and dump the contents using Bento’s mp4dump tool. I don’t want to go into too much detail on MP4 structure (though I did give a talk at Demuxed a couple of years ago on this very subject), but we can see the following interesting atoms in the moov/trak/mdia/hdlr box hierarchy:

mp4dump --verbosity 3 amazon-init.mp4

// Trimmed for space saving

[moov] size=8+1693

[trak] size=8+595

...

[mdia] size=8+495

...

[hdlr] size=12+48

handler_type = vide

handler_name = ETI ISO Video Media Handler

Generally, the hdlr box is set by the encoder to something identifiable. In this case “ETI” is the signature set by Elemental encoders. Exactly what it stands for I don’t know, “Elemental Transcode… Something” would be my guess. Realistically, I’d think that Amazon will again be using AWS Elemental MediaLive for their live encoding.
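If you don't have Bento installed, the hdlr box is simple enough to pull out by hand. This is a rough Python sketch of a box walker, not a full MP4 parser: it only descends into moov, trak, and mdia. To keep it self-contained it runs against a synthetic init segment built in the code rather than a real download.

```python
import struct

CONTAINERS = {b"moov", b"trak", b"mdia"}


def iter_boxes(data: bytes, start: int = 0, end: int = None):
    """Yield (type, payload_start, box_end) for each box in data[start:end]."""
    end = len(data) if end is None else end
    pos = start
    while pos + 8 <= end:
        size, box_type = struct.unpack_from(">I4s", data, pos)
        header = 8
        if size == 1:      # a 64-bit size follows the type field
            size = struct.unpack_from(">Q", data, pos + 8)[0]
            header = 16
        elif size == 0:    # box runs to the end of its container
            size = end - pos
        if size < header:  # malformed box, stop rather than loop forever
            break
        yield box_type, pos + header, pos + size
        pos += size


def handler_names(data: bytes, start: int = 0, end: int = None) -> list:
    """Collect (handler_type, handler_name) from every hdlr under moov/trak/mdia."""
    found = []
    for box_type, body, box_end in iter_boxes(data, start, end):
        if box_type in CONTAINERS:
            found += handler_names(data, body, box_end)
        elif box_type == b"hdlr":
            # Layout: version/flags (4), pre_defined (4), handler_type (4), reserved (12), name
            handler_type = data[body + 8:body + 12].decode("ascii", "replace")
            name = data[body + 24:box_end].split(b"\x00")[0].decode("utf-8", "replace")
            found.append((handler_type, name))
    return found


def make_box(box_type: bytes, payload: bytes) -> bytes:
    """Build a box with a 32-bit size header (used only for the synthetic sample)."""
    return struct.pack(">I4s", 8 + len(payload), box_type) + payload


# Synthetic stand-in for an init segment; a real one contains many more boxes.
hdlr_payload = bytes(8) + b"vide" + bytes(12) + b"ETI ISO Video Media Handler\x00"
sample = make_box(b"moov", make_box(b"trak", make_box(b"mdia", make_box(b"hdlr", hdlr_payload))))
print(handler_names(sample))
```

To run it against a real file, read the downloaded init segment as bytes and pass it to `handler_names`.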

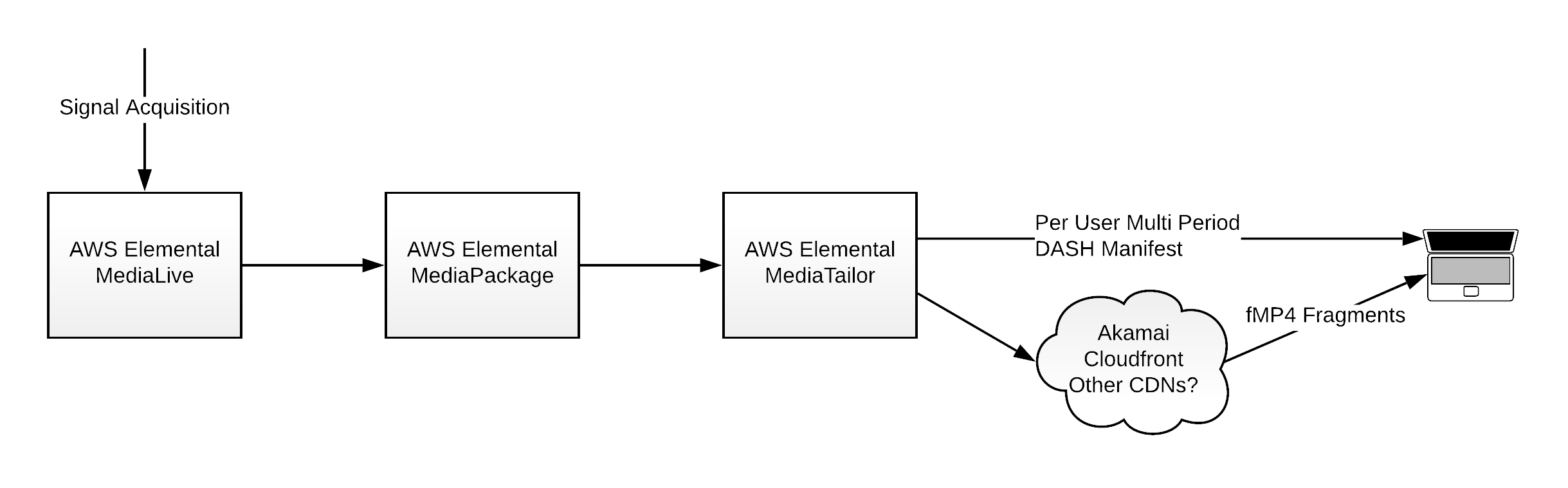

Amazon - Hypothetical Architecture

Warning: Some conjecture ahead.

When we put everything together that we’ve learned above we can come up with a pretty comprehensive picture for how Amazon is architecting their video delivery stack for Thursday Night Football - let’s take a stab at an architecture diagram. We’ll assume Amazon is using at least the CDNs we know about and probably more.

Realistically, on something this high profile I’d expect there to also be some level of redundancy built into the architecture too, probably multiple independent legs running in different AWS regions. We already know there’s some form of stream-start CDN switching going on but I’m sure Amazon have gone at least a few steps further than this.

Honestly, this is a pretty solid live streaming architecture and really reflects Amazon’s commitment to their own AWS and Elemental product catalog. The big surprise to me here was that Akamai is front-and-center in their delivery stack. Even if it does seem to be load balanced with CloudFront, there’s certainly a lot of data flowing through one of CloudFront’s biggest competitors.

Twitch - Streaming Stack Teardown

So what is Twitch up to? Is it just the same content but in their player? Well, this is going to be more interesting... but it also contains dramatically more conjecture on my side. It’s well known from talks by Twitch engineers that Twitch built most of their video infrastructure in-house, which makes it harder for us to compare responses to known platforms in the industry, but let’s see what we can figure out.

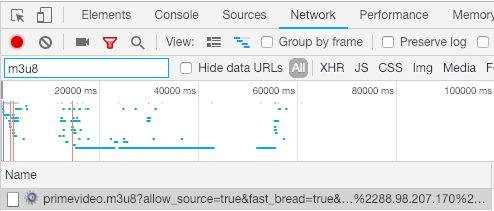

We’ll take the same strategy as last time, firing up the game in Twitch on Chrome and looking for a manifest request. Twitch is a big fan of the Apple HLS format for delivering their content, so let's start by looking for a request for a .m3u8 file.

First try! So immediately it seems unlikely that Twitch is just pulling the Amazon Prime Video stream and rebranding it. Let’s take a look at Twitch’s master manifest and see what we can learn.

It's a pretty standard HLS master manifest with a little Twitch metadata sprinkled in - as per the HLS specification, players are expected to ignore statements they don’t understand. Let’s look at the same set of areas we looked at for Amazon on Twitch’s side.

Packaging: HLS, H.264 encoded in 2 second fMP4 fragments

From the master manifest alone we can tell which codecs Twitch is using for delivery. In the #EXT-X-STREAM-INF CODECS field, we can see the same combination of codecs we saw on Amazon Prime - H.264 and AAC. Twitch is delivering 6 renditions from 160p, up to 720p 60fps. This is fairly close to what we saw on Amazon earlier. However, in Twitch’s case the top bitrate is 6.6 Mbps, but at a higher frame rate. This may be preferable for high motion sports content. It's also worth noting that Twitch goes to a lower bottom bitrate and resolution than Amazon, which implies that Twitch is catering more actively to users on cellular or low performance internet connections.

Because Twitch is using HLS, we need to perform an extra step to get any information about the exact packaging used. As we explained in our HLS blog post, HLS uses several manifests - one master manifest which lists all the available renditions, and then one manifest for the segments within each rendition. So let’s take a look at one of the rendition manifests - we can just pull the URL from the master manifest and pull it down.
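Pulling the rendition list out of a master manifest can be sketched in a few lines of Python. The sample playlist below is hypothetical: the URLs, codec strings, and the low-end bitrate are invented, and only the overall shape (a 720p60 top rendition at roughly 6.6 Mbps down to 160p) follows what was observed.

```python
import re

# Matches KEY=value pairs, where value is either a quoted string or runs up to the next comma.
ATTR_RE = re.compile(r'([A-Z0-9-]+)=("[^"]*"|[^,]*)')

# Hypothetical two-rendition master playlist; URLs and values are invented.
SAMPLE_MASTER = """#EXTM3U
#EXT-X-STREAM-INF:BANDWIDTH=6600000,RESOLUTION=1280x720,CODECS="avc1.64001F,mp4a.40.2",FRAME-RATE=60.000
https://example.com/720p60.m3u8
#EXT-X-STREAM-INF:BANDWIDTH=230000,RESOLUTION=284x160,CODECS="avc1.42C00C,mp4a.40.2",FRAME-RATE=30.000
https://example.com/160p30.m3u8
"""


def parse_master(text: str) -> list:
    """Return one attribute dict per #EXT-X-STREAM-INF entry, with its playlist URI."""
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    variants = []
    for i, line in enumerate(lines):
        if line.startswith("#EXT-X-STREAM-INF:") and i + 1 < len(lines):
            attrs = {k: v.strip('"') for k, v in ATTR_RE.findall(line.split(":", 1)[1])}
            attrs["URI"] = lines[i + 1]  # the rendition playlist URL is on the next line
            variants.append(attrs)
    return variants


for variant in parse_master(SAMPLE_MASTER):
    print(variant["RESOLUTION"], variant["BANDWIDTH"], variant["URI"])
```

The quoted-string branch of the regular expression matters because the CODECS value itself contains a comma.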

Here’s where it gets interesting. The rendition manifest delivered by Twitch contains the HLS declared version of 6 (#EXT-X-VERSION:6) which immediately implies that Twitch is using some modern and interesting features of HLS - and they are. We see Twitch using the #EXT-X-MAP:URI to point to an fMP4 initialization segment - this approach was only included in recent versions of the HLS specification. We can also look down the manifest to see that all the segment URLs point to .mp4 fragments.
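The same checks can be scripted against a rendition manifest. This is a minimal sketch that pulls out the declared version, the #EXT-X-MAP initialization URI, and each segment URI with its #EXTINF duration; the sample playlist is hypothetical, with invented URIs.

```python
# Hypothetical rendition playlist; segment and init URIs are invented.
SAMPLE_MEDIA = """#EXTM3U
#EXT-X-VERSION:6
#EXT-X-TARGETDURATION:2
#EXT-X-MAP:URI="init.mp4"
#EXTINF:2.002,live
segment-100.mp4
#EXTINF:2.002,live
segment-101.mp4
"""


def summarize_media_playlist(text: str) -> dict:
    """Extract the HLS version, init segment URI, and (uri, duration) for each segment."""
    summary = {"version": None, "init_uri": None, "segments": []}
    pending_duration = None
    for line in (raw.strip() for raw in text.splitlines()):
        if line.startswith("#EXT-X-VERSION:"):
            summary["version"] = int(line.split(":", 1)[1])
        elif line.startswith("#EXT-X-MAP:"):
            # Only the quoted URI attribute is pulled out here.
            summary["init_uri"] = line.split('URI="', 1)[1].split('"', 1)[0]
        elif line.startswith("#EXTINF:"):
            pending_duration = float(line.split(":", 1)[1].split(",", 1)[0])
        elif line and not line.startswith("#"):
            summary["segments"].append((line, pending_duration))
            pending_duration = None
    return summary


print(summarize_media_playlist(SAMPLE_MEDIA))
```

A non-empty `init_uri` together with .mp4 segment URIs is the quick tell that a stream is fMP4 rather than Transport Stream.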

This differs dramatically from Twitch’s usual strategy - Twitch has been a long time user of the more traditional Transport Stream segment packaging format (.ts). But does this new approach point to a fundamental change in strategy for Twitch or is there some more obvious reason for this change?

It turns out the answer is actually fairly simple - Twitch seems to be DRM-ing the Thursday Night Football streams. To my knowledge, this is the first time Twitch has DRM’d content on their platform. I’ve been keeping an eye on the TwitchPresents channel since I started researching this subject and I don’t see DRM being used on any of the Pokemon or Bob Ross episodes being shown on there. I assume that a requirement for DRM was stipulated on the contract for Thursday Night Football.

Thankfully in HLS we don’t need to do any math to get the duration of the media segments - we have that information neatly contained right above each media segment in the manifest. In this case we can see each segment is preceded by #EXTINF:2.002, which indicates the segments are a little over 2 seconds long:

#EXT-X-PROGRAM-DATE-TIME:2018-10-26T03:06:27.559Z

#EXTINF:2.002,live

https://video-edge-a242e4.sjc02.abs.hls.ttvnw.net/v1/segment/LONGTEXT.mp4

DRM: CENC Encryption

So how can we tell that Twitch is using DRM on their fMP4 HLS streams? Well, we again need to get our Bento MP4 dumping tools out. We can take the initialization URL declared in the rendition manifest and download it to look at the data contained within it.

This time we’re going to dump the file and look for the pssh boxes which declare the available DRM technologies that can be used to decrypt the file. In HLS the data about the encryption of the content has to be embedded in the media because there is only a specification for delivering FairPlay DRM information in the manifest file.

mp4dump --verbosity 3 twitch-init.mp4

// Trimmed for space saving

[pssh] size=12+75

system_id = [ed ef 8b a9 79 d6 4a ce a3 c8 27 dc d5 1d 21 ed]

data_size = 55

data = [...]

[pssh] size=12+966

system_id = [9a 04 f0 79 98 40 42 86 ab 92 e6 5b e0 88 5f 95]

data_size = 946

data = [...]

If we look closely at these system_ids, we’ll notice that they’re the same as the UUIDs which we saw up in the ContentProtection blocks in the DASH manifest for the Amazon Prime stream. This allows us to deduce that Twitch is also using PlayReady and Widevine to protect their desktop streams.
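Comparing space-separated hex bytes against a dashed UUID by eye is error-prone, so here is a small sketch that converts the mp4dump system_id format into a UUID and looks it up. The table only contains the two DRM systems discussed in this post.

```python
import uuid

# The two well-known DRM system IDs discussed in this post.
DRM_SYSTEMS = {
    "edef8ba9-79d6-4ace-a3c8-27dcd51d21ed": "Widevine",
    "9a04f079-9840-4286-ab92-e65be0885f95": "PlayReady",
}


def identify_system_id(dump_value: str) -> str:
    """Turn an mp4dump system_id such as '[ed ef 8b ...]' into a DRM system name."""
    hex_bytes = dump_value.strip("[] ").replace(" ", "")
    system_uuid = str(uuid.UUID(hex=hex_bytes))
    return DRM_SYSTEMS.get(system_uuid, f"unknown ({system_uuid})")


print(identify_system_id("[ed ef 8b a9 79 d6 4a ce a3 c8 27 dc d5 1d 21 ed]"))
print(identify_system_id("[9a 04 f0 79 98 40 42 86 ab 92 e6 5b e0 88 5f 95]"))
```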

Ad Insertion: Twitch Weaver

Twitch’s stream also had ads that weren’t the adverts going out on the broadcast. By looking at the master manifest we can see that the rendition manifest URLs being used are pointing at something called “Weaver” https://video-weaver.sjc02.hls.ttvnw.net.

As we learned a couple of weeks ago at Demuxed, Weaver is Twitch’s HLS Ad insertion service which stitches ads into the stream by declaring discontinuities in the playlist and inserting segments of Ad content. This approach is fairly standard in the industry and is much simpler than using multi-period DASH.
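From the viewer's side, a stitched playlist can be split back into its content and ad runs by looking for #EXT-X-DISCONTINUITY tags. This sketch only illustrates the reading side and says nothing about how Weaver is implemented internally; the sample playlist and its segment names are hypothetical.

```python
# Hypothetical stitched playlist; segment names are invented for illustration.
SAMPLE_STITCHED = """#EXTM3U
#EXT-X-VERSION:6
#EXTINF:2.002,live
content-1.mp4
#EXT-X-DISCONTINUITY
#EXTINF:2.002,
ad-1.mp4
#EXTINF:2.002,
ad-2.mp4
#EXT-X-DISCONTINUITY
#EXTINF:2.002,live
content-2.mp4
"""


def split_on_discontinuities(text: str) -> list:
    """Group segment URIs into runs separated by #EXT-X-DISCONTINUITY tags."""
    runs = [[]]
    for line in (raw.strip() for raw in text.splitlines()):
        if line == "#EXT-X-DISCONTINUITY":
            if runs[-1]:          # start a new run only if the current one has segments
                runs.append([])
        elif line and not line.startswith("#"):
            runs[-1].append(line)
    return [run for run in runs if run]


for run in split_on_discontinuities(SAMPLE_STITCHED):
    print(run)
```

A discontinuity only tells the player that timestamps or encoding parameters may change, so deciding which run is actually an ad still takes a look at the segment URLs or the surrounding metadata.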

CDN: Twitch CDN (Probably)

Now here’s where things start to get a little more woolly. If we attempt to reproduce our last approach for working out what CDN Twitch is using we come up blank. Looking at the URLs that the segments are coming from for Twitch we get the hostname video-edge-a242e4.sjc02.abs.hls.ttvnw.net - this really doesn’t help us.

However, it's fairly well known in the industry that Twitch runs its own CDN - I checked other streams coming off Twitch and they seem to be coming from similar hostnames within the same IP ranges as the one I recorded while watching Thursday Night Football. Reverse DNS lookups and IP WHOIS lookups don’t show anything particularly interesting, just that the IP ranges are owned by Amazon/Twitch.

Video Encoder: Twitch’s Encoder (Probably)

Trying to figure out the encoder that Twitch is using is also challenging. First we can try using the same approach we used earlier to dump out the contents of the hdlr box, but it unfortunately gives us a very generic answer:

mp4dump --verbosity 3 twitch-init.mp4

[hdlr] size=12+33

handler_type = vide

handler_name = VideoHandler

What we can do, however, is make assumptions based on talks that Twitch employees have given publicly. At Streaming Media East last year, Yueshi Shen and Ivan Marcin gave an excellent talk about Twitch’s previous and next generation transcode architecture. In this talk Yueshi talked about how their new architecture is built around Intel’s Quick Sync based on a combination of cost, stability, and visual quality. I think the best we can do is assume that Twitch is using their usual Quick Sync encoder chain for their video encoding approach.

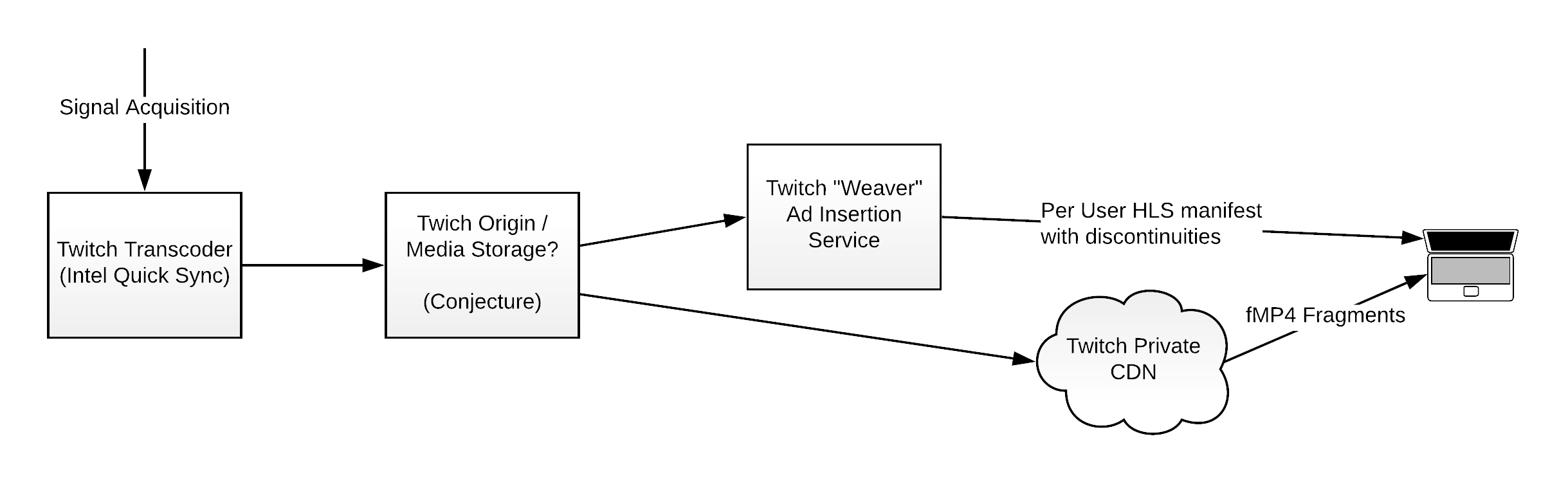

Twitch - Hypothetical Architecture

Warning: More conjecture ahead.

At this stage we’ve pretty much established as much as we’re able to without gaining internal knowledge of how Twitch is architected. Again I’ve put together a theoretical architecture diagram for how I think this is laid out internally.

The commentary I’d provide here is that everything is really Twitch proprietary software, which isn’t hugely shocking, but it's pretty safe to say that Twitch’s approach brings its own advantages when it comes to latency, as I’ll comment on in the next section.

User Experience

I’d feel bad if I didn’t at least mention the end user experience. From an end user perspective the experience is quite consistent and comparable between the two services. For me - at least on a fairly stable internet connection - the video streamed smoothly with no buffering or visual quality issues on either platform.

However, there are a couple of significantly different end-user experiences between the two platforms I wanted to highlight.

Latency

While watching the game it was obvious that the Twitch stream was significantly ahead of the Amazon Prime Video stream. Unfortunately, in our experiments we didn’t have access to a cable stream to verify the difference to a traditional broadcast so I can’t give an accurate estimate to how far behind wall clock we’re talking about, but I can give some comparative figures.

To test the comparative latency I refreshed both streams a couple of times, gave the streams time to settle and then took a visual marker on the Twitch stream, started my stopwatch, and waited for the Amazon Prime stream to catch up to the same visual mark.

The difference between the streams was fairly striking. On average the Twitch stream was 12 seconds ahead of the Amazon Prime stream. On some attempts the difference was as little as 10 seconds and on other attempts as large as 16 seconds.

This is super interesting. As Yueshi described in his talk at Streaming Media East this year, Twitch has worked extensively over the last few years to bring down the latency of their product because in the traditional Twitch use case, streamers like to have good interactivity with their viewers. This culminated in Twitch making their low-latency mode available to all streamers in May of this year. The target of this project was to get latency into the 5 second territory.

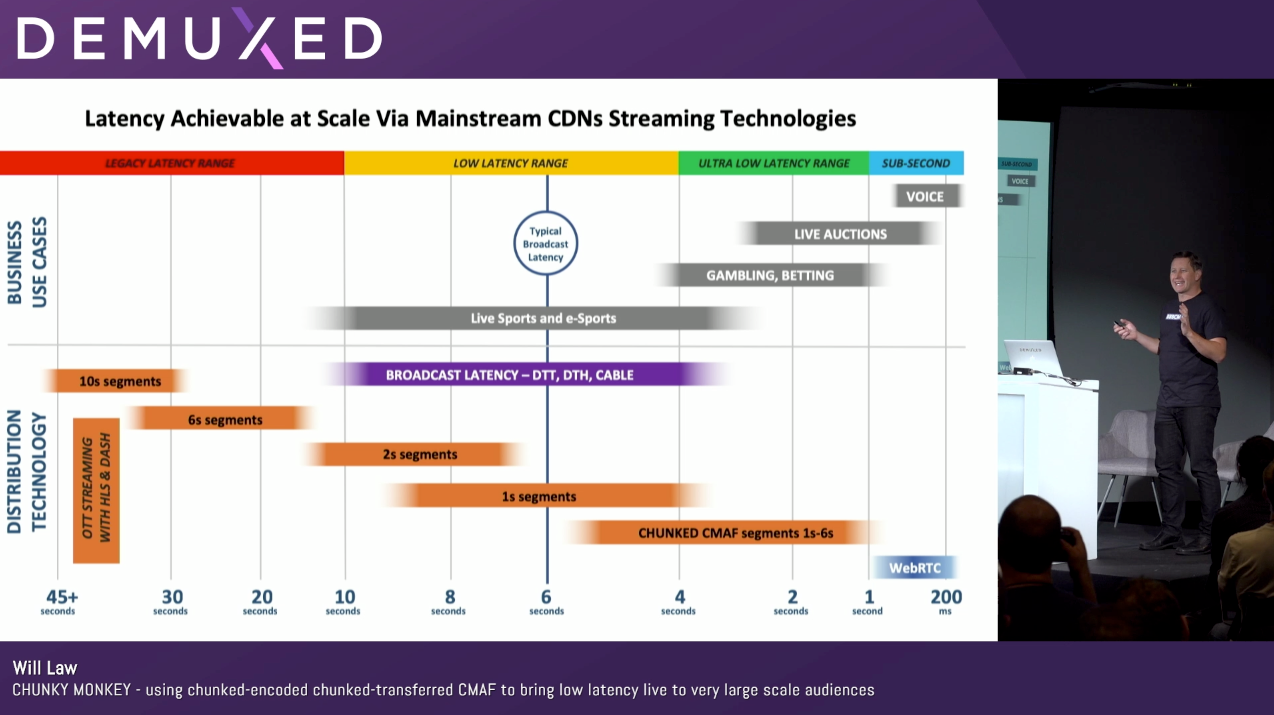

If we look at Will Law from Akamai’s definitions of “Low Latency Streaming” from Demuxed earlier this year we can see roughly where Twitch and Amazon are now falling on the scale.

For now let’s say that Amazon is around 10-15 seconds of latency to wallclock and Twitch is around 5 seconds. Will would describe Amazon as solidly in the “Legacy Latency Range” while Twitch is right at the leading edge of the “Low Latency Range”.

In this particular case Twitch is a long way ahead and Amazon have some catching up to do.

Platform coverage

I also wanted to mention in passing one other thing I noticed while researching this article. Twitch’s addition of DRM for Thursday Night Football appears to have had an impact on the platforms that the stream is available on.

As Twitch points out on its own blog, the streams are “available on web and mobile apps” - this means that a good pile of the platforms that Twitch has traditionally reached (including Chromecast, PS4, and Xbox One) do not currently support their Thursday Night Football streams. This is in stark contrast to Amazon Prime Video’s platform where the live streams seem to work everywhere that Amazon has a Prime Video app.

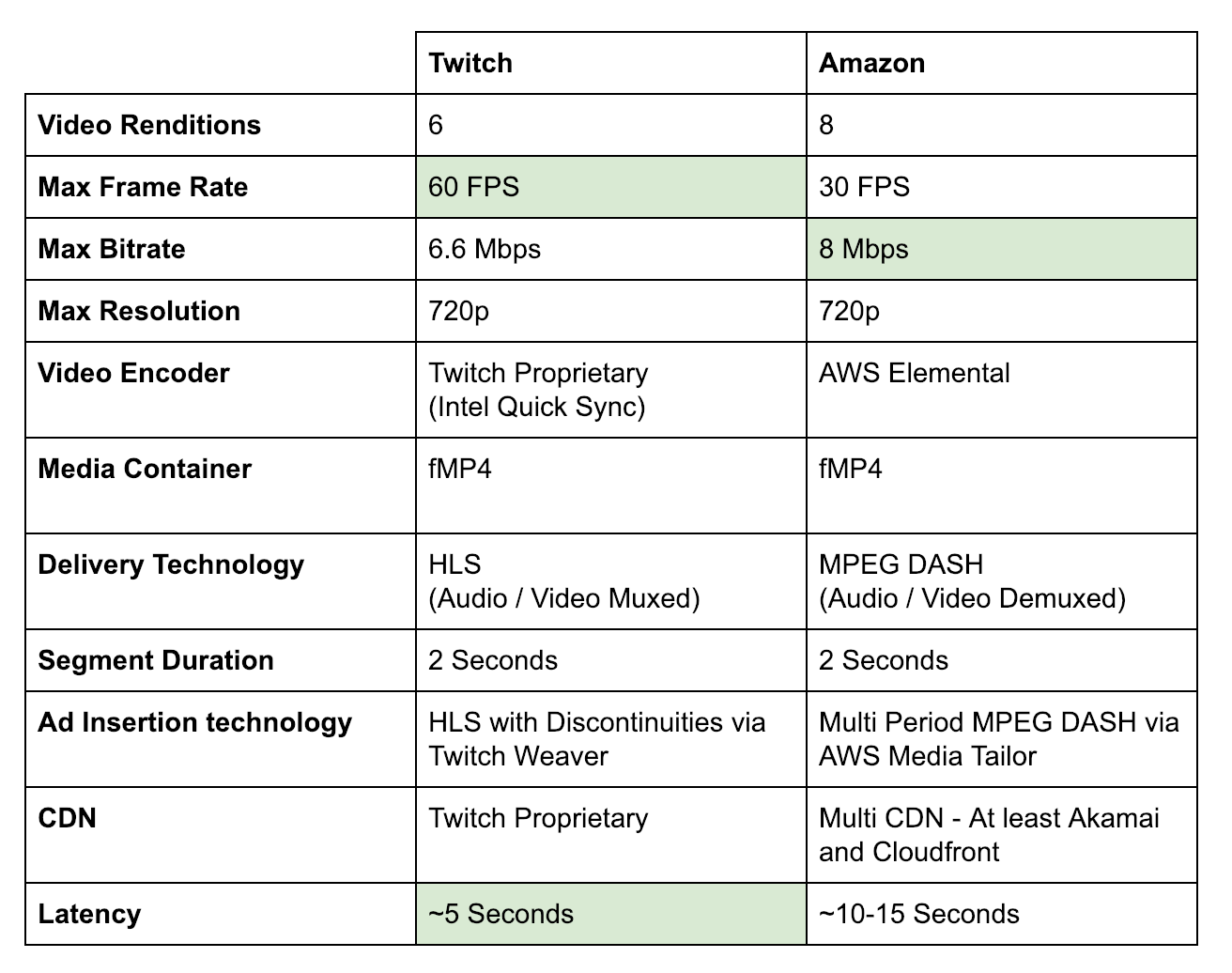

Summary

Wow, that was a lot of reading! Congratulations for making it this far! Given how much we’ve gone through I’ve put together a summary of the key technical details of each implementation below:

Author’s note: This data only applies to the video delivered to desktop browsers. It’s likely other technologies are in use for some native devices, in particular iOS apps.

Now for a little commentary. At the building block level the architectures actually look very different, but really the same fundamental approaches are being used here, even though the minutiae of the technology stacks are different.

Both approaches use H.264 with AAC, both use 2 second fMP4 fragments protected with Widevine and PlayReady, and both use manifest manipulation based SSAI strategies. However, Twitch’s in-house encoding, CDN, and packaging architecture enables them to deliver a much lower latency stream, and also at a higher frame rate. Amazon, however, has the advantage of a notably higher top bitrate and a more comprehensive device footprint.

While Amazon’s approach is very much making use of AWS Elemental’s products, it’s also a great reference architecture for them - they can go to market with the AWS Elemental suite of products and say “Hey, it works for us with Thursday Night Football” and that’s really valuable in the high-end live streaming marketplace.

One final thought. After spending nearly a billion dollars on Twitch, if Twitch’s approach seems to deliver the same quality of experience with significantly lower viewer latency, which is critical for live sports, why isn’t Amazon using it for their Prime Video streams?