Updated 2024-07-29: Unfortunately, AVflow.io is no longer in business. Read more about building content moderation with Hive.

Content moderation and why it matters

Last year we talked about (gasp!) the dirty little secret that no one talks about: you either die an MVP or live long enough to build a content moderation system. You liked that blog post so much that it became one of our most popular posts ever. Deft writing plus Dark Knight references: what’s not to like? However, it may also be that you, too, have content moderation concerns. Fear not; in this follow-up post, we will show you how to add a content moderation system to your application in five minutes…without code…Seriously. 😮

Implementing the Robocops

Dealing with abusive users and thinking about content moderation systems may be the last thing you want to spend your time on, but it is a necessary evil. Bad actors are everywhere, and it’s only a matter of time before they find their way to your rapidly growing product.

We on the partnerships team at Mux want to make it easier to add Artificial Intelligence (AI) powered content moderation to your application. Our partner AVflow makes it simple to build a low-code workflow that adds content analysis and safety detection from Hive to your Mux video processing and streaming.

A bit about the tools

- AVflow is a low/no-code workflow builder for video; think ‘Zapier for video dev.’

- Hive automates content moderation by using AI to identify NSFW (violent, suggestive, etc.) content in images, video, and audio.

- And Mux — well, we’re your API for video infrastructure.

We will use Hive to analyze the videos, AVflow to build out the logic of the workflow using their drag-and-drop GUI, and Mux to ingest, encode, store, and deliver your videos at scale, to all devices, and with the highest quality.

How to do it

Getting started

You will need accounts with AVflow, Mux, Hive, and, for this example, AWS S3 for storage. Don’t worry, it’s free to get started with all of these services.

Once you have these accounts, the easiest way to get started is to:

- Clone this Flow into your AVflow workspace

- Enter your own API keys for Mux and Hive and configure AWS S3 bucket permissions, and…

- Turn the Flow on!

Step by step

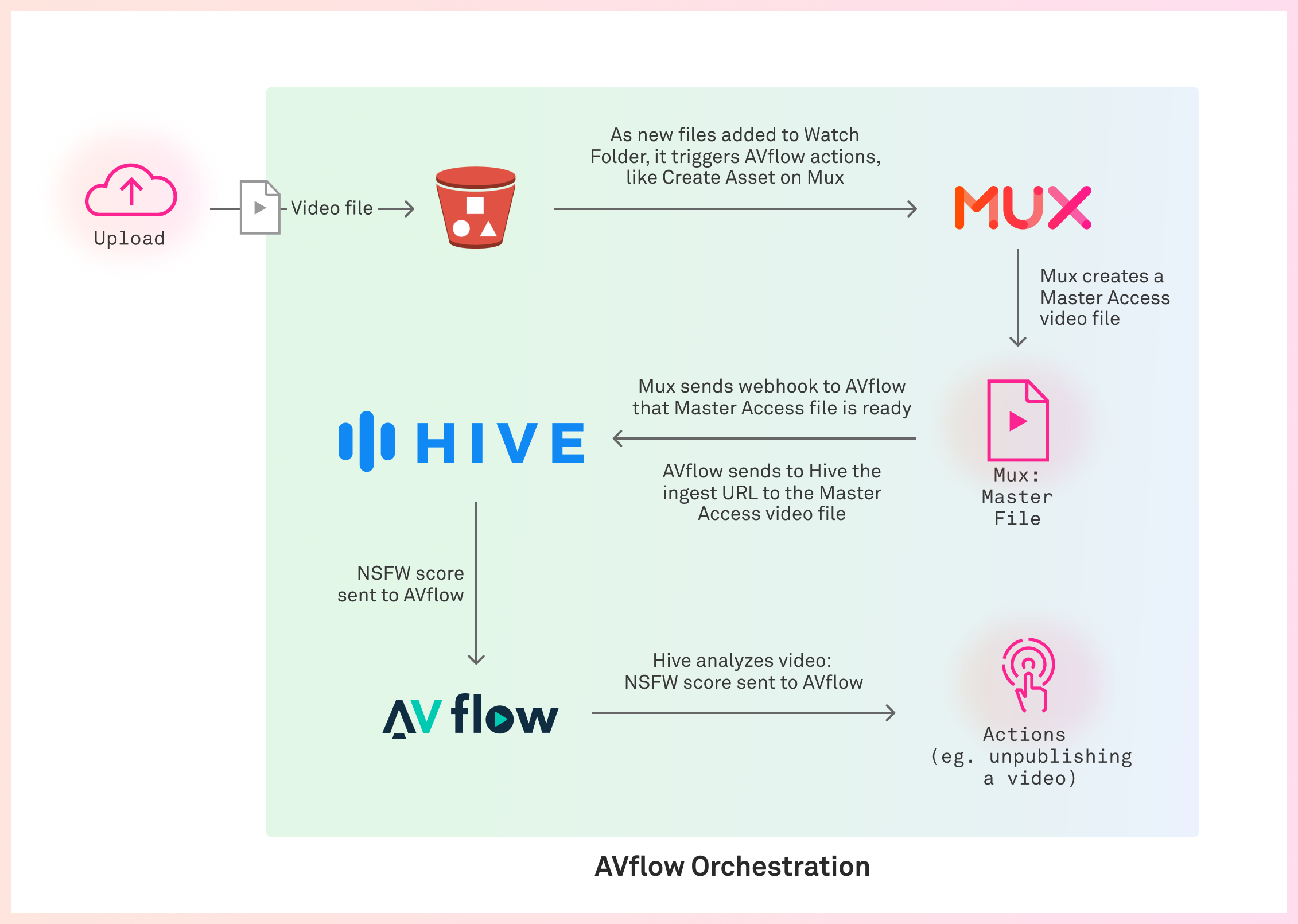

Here’s how it works:

This guide assumes that you’ve already built the means for end-users to upload a video through your website or application (i.e., user-generated content) and that you are storing this uploaded video in S3. Once the videos are stored, these are the steps that will follow:

- The Flow is triggered in AVflow once the video is saved in S3.

- An Asset is created in Mux with Master Access enabled.

- The master video is sent to Hive for analysis.

- Hive returns a JSON payload with its findings.

- AVflow processes Hive’s results to check whether the video meets or exceeds your defined NSFW threshold (e.g., 90%).

- You decide what action to take with any videos in violation.

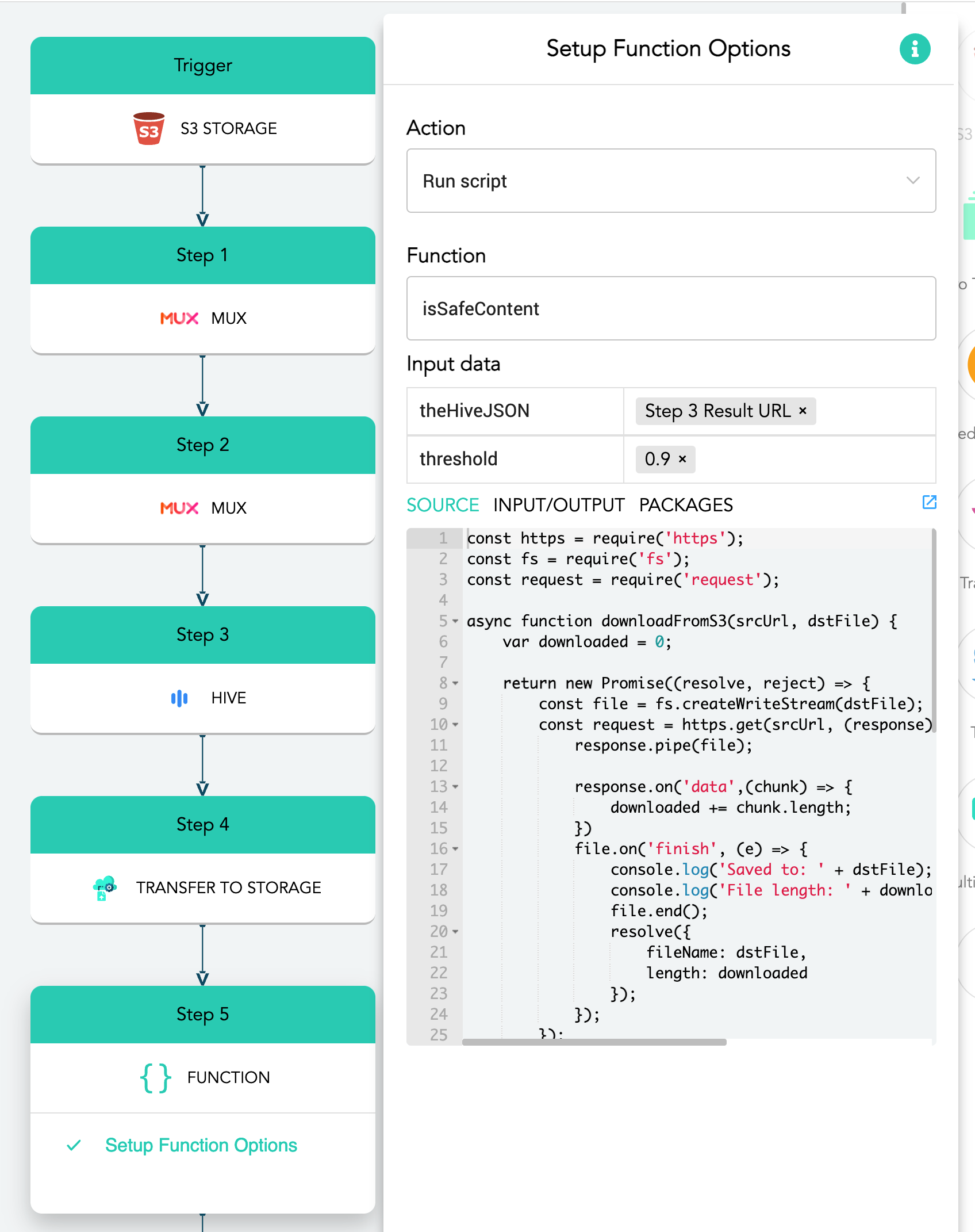

AVflow lets you drag and drop APIs, hooks, and services into your desired, ordered flow.

Trigger the Flow from the S3 upload or a webhook

Add the S3 icon to set the Flow trigger:

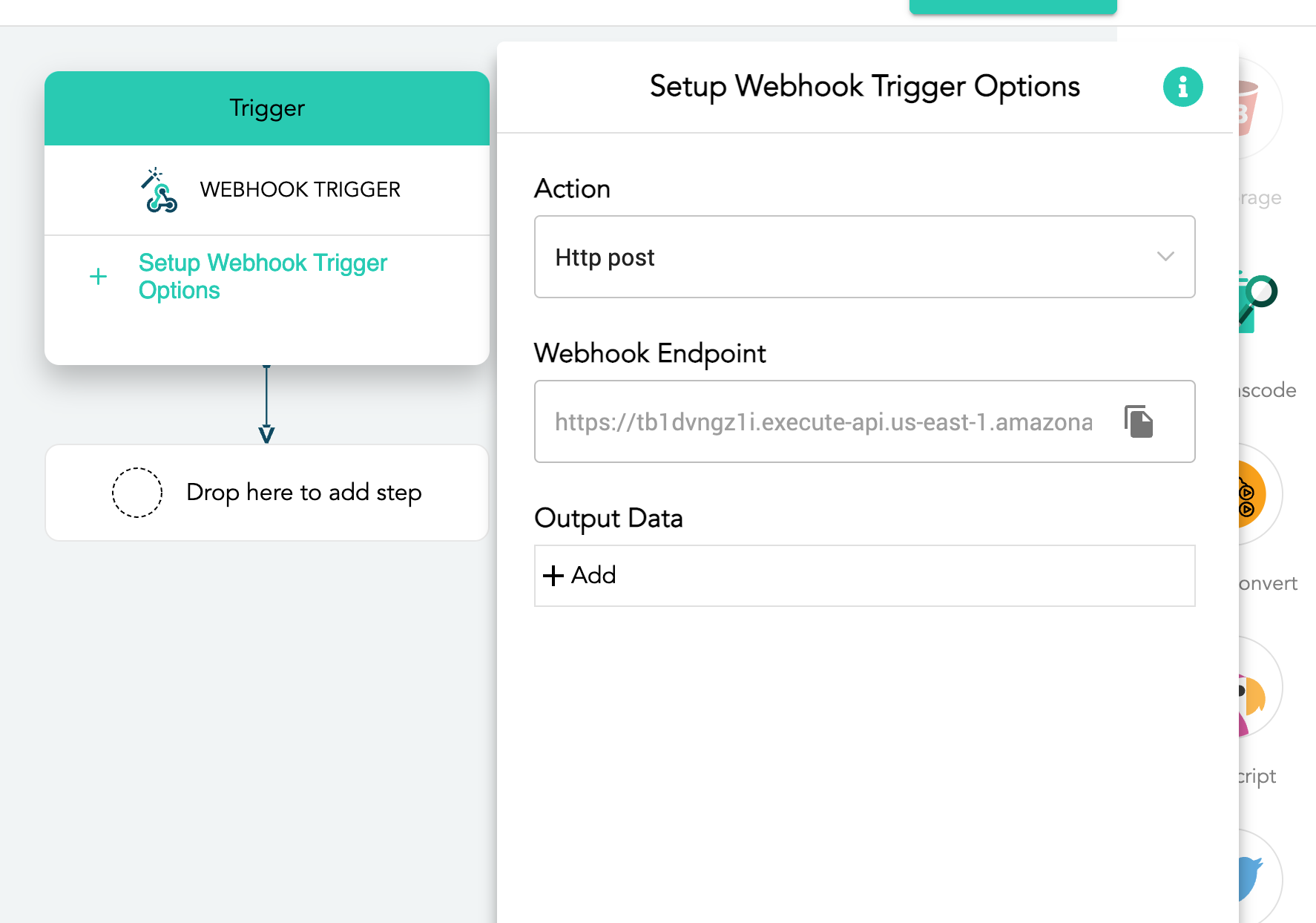
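If you are curious what an S3-based trigger has to work with, here is a minimal sketch of reading the bucket and object key out of an S3 event notification. The helper name and the sample bucket and key are made up for illustration, and this is not a description of how AVflow handles the trigger internally.

```python
from urllib.parse import unquote_plus


def uploaded_objects(event):
    """Yield (bucket, key) for every object listed in an S3 event notification."""
    for record in event.get("Records", []):
        s3 = record["s3"]
        # Object keys arrive URL-encoded (spaces become "+"), so decode them.
        yield s3["bucket"]["name"], unquote_plus(s3["object"]["key"])


# Trimmed-down sample event; the bucket and key are placeholders.
sample_event = {
    "Records": [{
        "eventName": "ObjectCreated:Put",
        "s3": {
            "bucket": {"name": "example-uploads"},
            "object": {"key": "user-videos/my+clip.mp4"},
        },
    }]
}

objects = list(uploaded_objects(sample_event))
```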

You can also use a webhook from your app to trigger the Flow.

Step 1 of the Flow

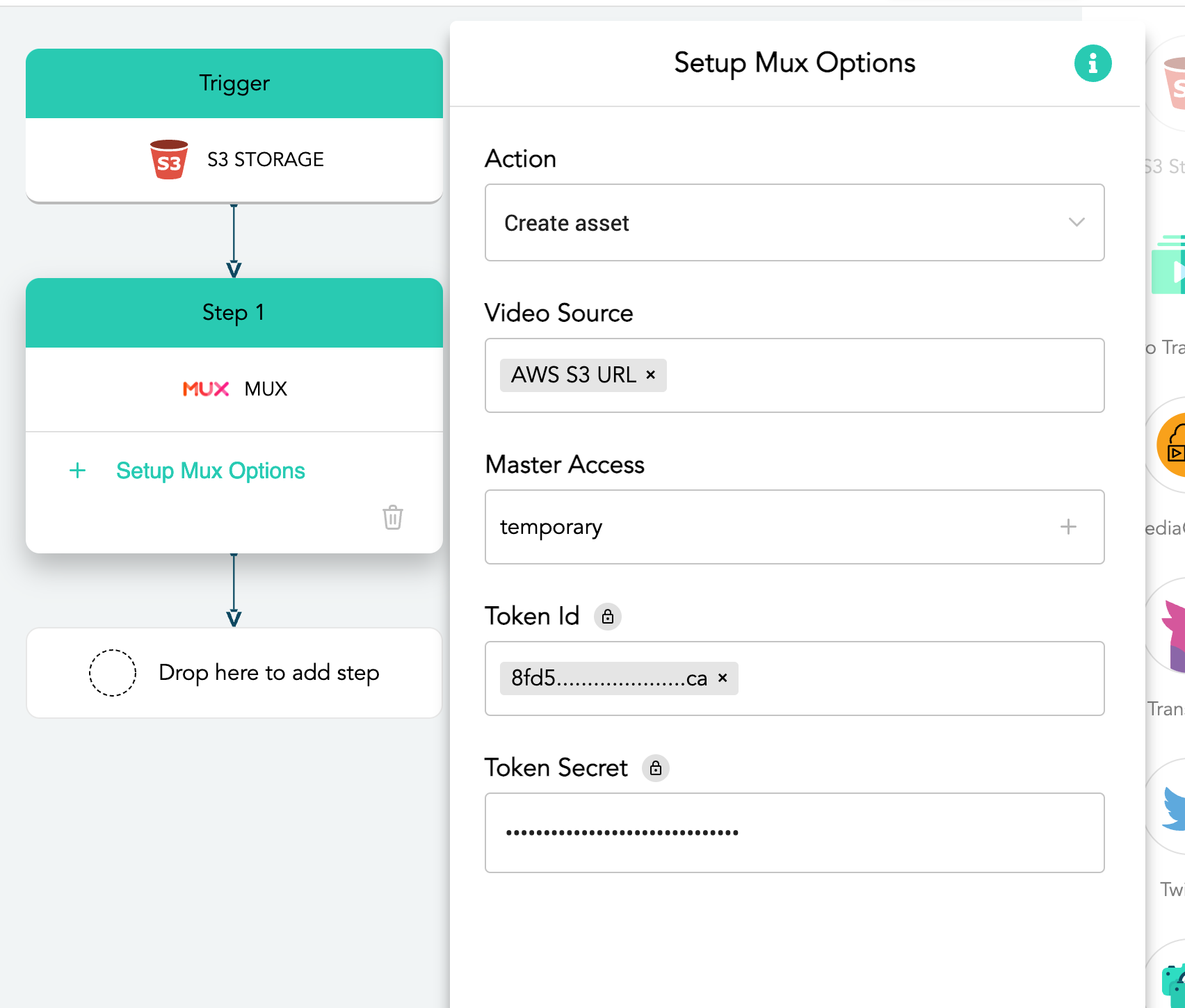

Add the Mux icon to create a Mux Asset from the uploaded video. Turn on Master Access by entering the value “temporary” in the field. With Master Access enabled, Mux generates a high-resolution MP4, which we will send to Hive to analyze in a subsequent step.
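For readers who like to see the API call behind the icon, this step corresponds roughly to a create-asset request against the Mux API. The sketch below uses only Python's standard library; the helper name, the S3 URL, and the credentials are placeholders of ours, and the request is built but never sent so the example runs offline.

```python
import base64
import json
import urllib.request

MUX_ASSETS_URL = "https://api.mux.com/video/v1/assets"


def build_create_asset_request(video_url, token_id, token_secret):
    """Build (but do not send) a request that creates a Mux Asset."""
    body = {
        "input": video_url,            # URL Mux fetches the uploaded file from
        "master_access": "temporary",  # same value entered in the Flow step
    }
    # Mux uses HTTP Basic auth with an access token ID and secret.
    credentials = base64.b64encode(f"{token_id}:{token_secret}".encode()).decode()
    return urllib.request.Request(
        MUX_ASSETS_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {credentials}",
        },
        method="POST",
    )


request = build_create_asset_request(
    "https://example-bucket.s3.amazonaws.com/uploads/video.mp4",  # placeholder
    "MUX_TOKEN_ID",      # placeholder credentials
    "MUX_TOKEN_SECRET",
)
# urllib.request.urlopen(request) would send it.
```

Note that Mux has to be able to fetch the input URL, so a private S3 object would need a presigned URL or similar.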

Step 2 of the Flow

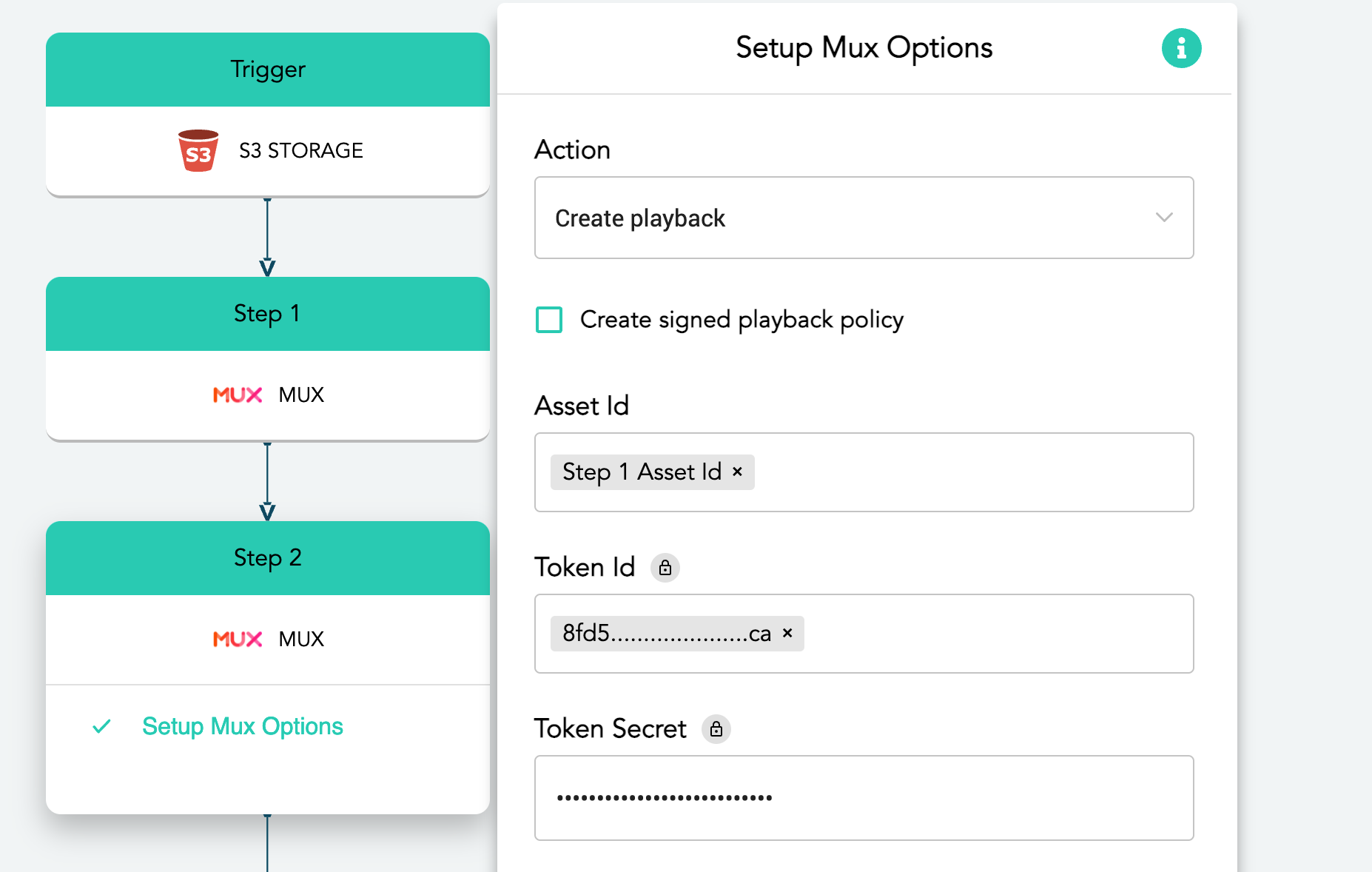

Add the Mux icon for Step 2 as well, since we need the action “Create playback.”
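Outside of the Flow, creating a playback ID is a single call on the asset. Here is a small sketch that only assembles the endpoint and body; the function names and the IDs in the usage lines are illustrative, not real values.

```python
import json

MUX_API = "https://api.mux.com/video/v1"


def create_playback_id_call(asset_id, policy="public"):
    """Return the (url, JSON body) pair for adding a playback ID to an asset."""
    url = f"{MUX_API}/assets/{asset_id}/playback-ids"
    body = json.dumps({"policy": policy})
    return url, body


def hls_url(playback_id):
    """Return the HLS stream URL for a playback ID."""
    return f"https://stream.mux.com/{playback_id}.m3u8"


# Placeholder IDs, for illustration only.
url, body = create_playback_id_call("abc123")
stream = hls_url("xyz789")
```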

Step 3 of the Flow

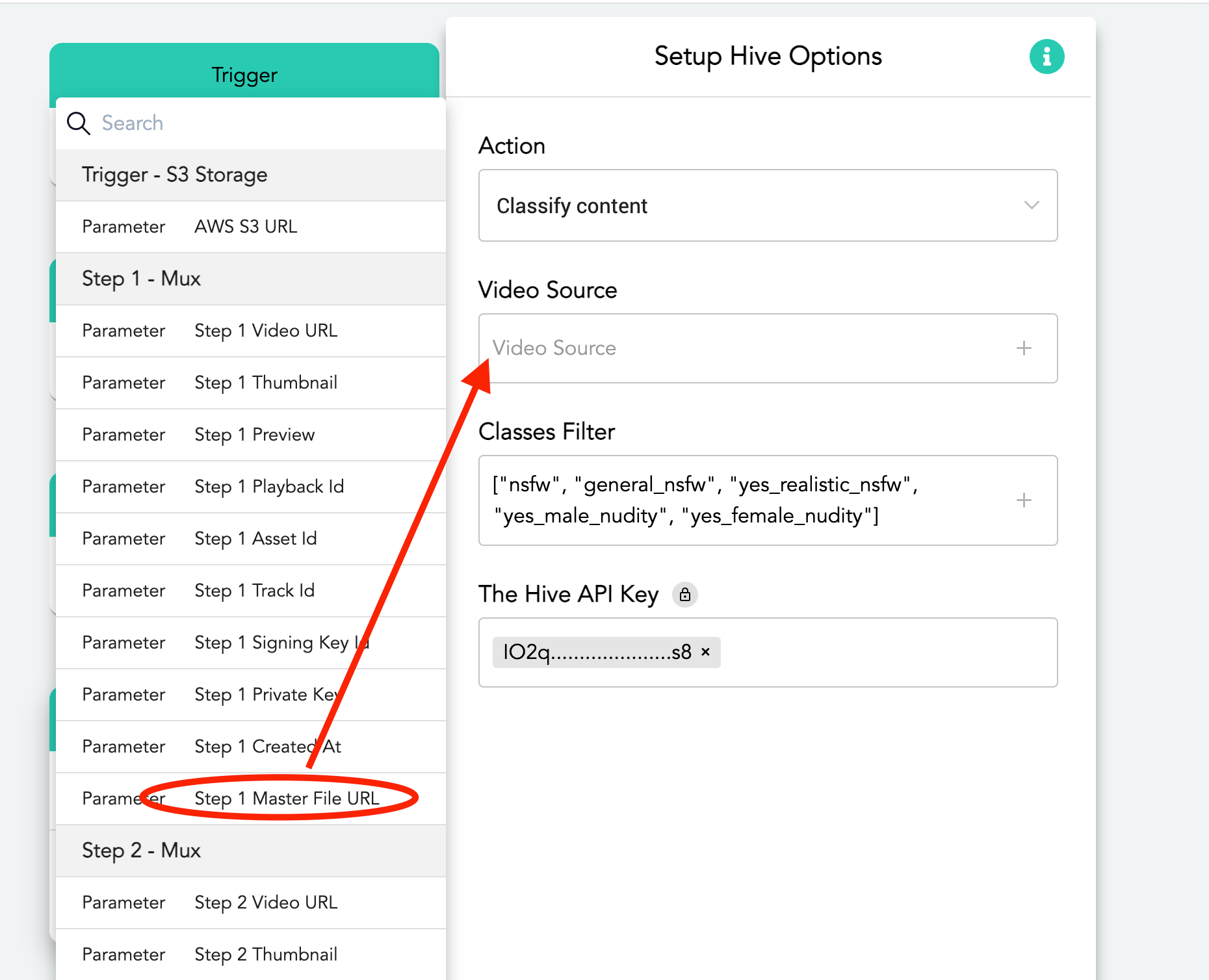

Drop in the Hive icon and select the action “Classify content.” For the Video Source field, click the ➕ icon and then choose “Master File URL” from Step 1. Choose the types of classes you want Hive to analyze for, such as “nsfw.”

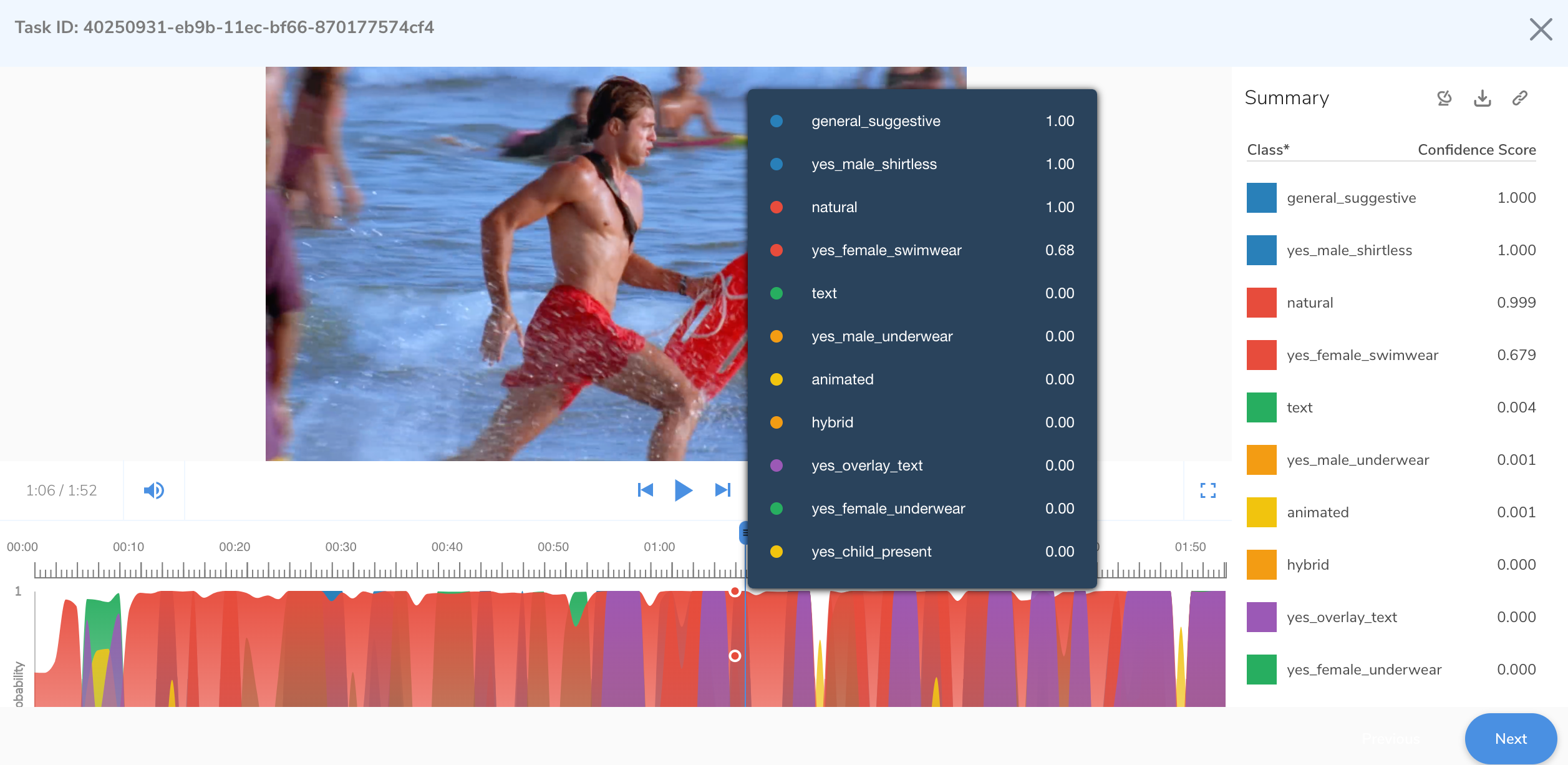

Hive will extract sample images from the MP4 and analyze each thumbnail, providing the results as a JSON payload.

For example, if a user uploaded the opening sequence to “Baywatch,” Hive would extract and analyze the thumbnails, and the results would look something like this in your Hive account:

Step 4 of the Flow

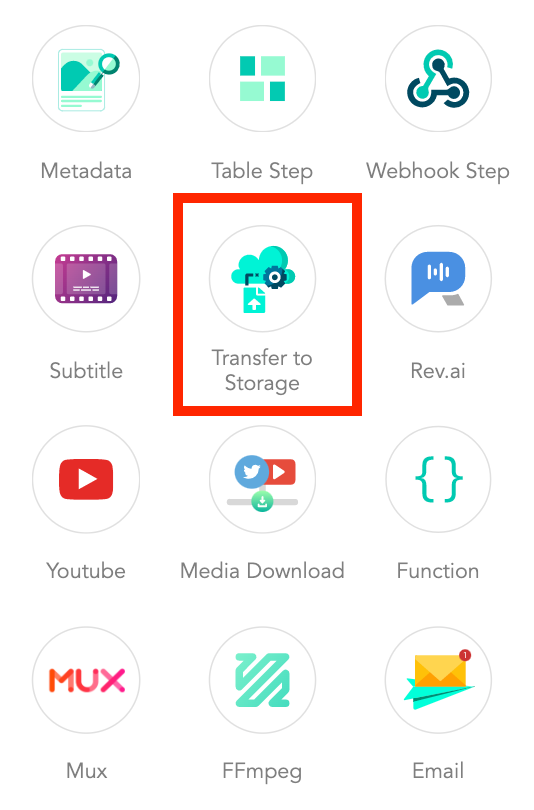

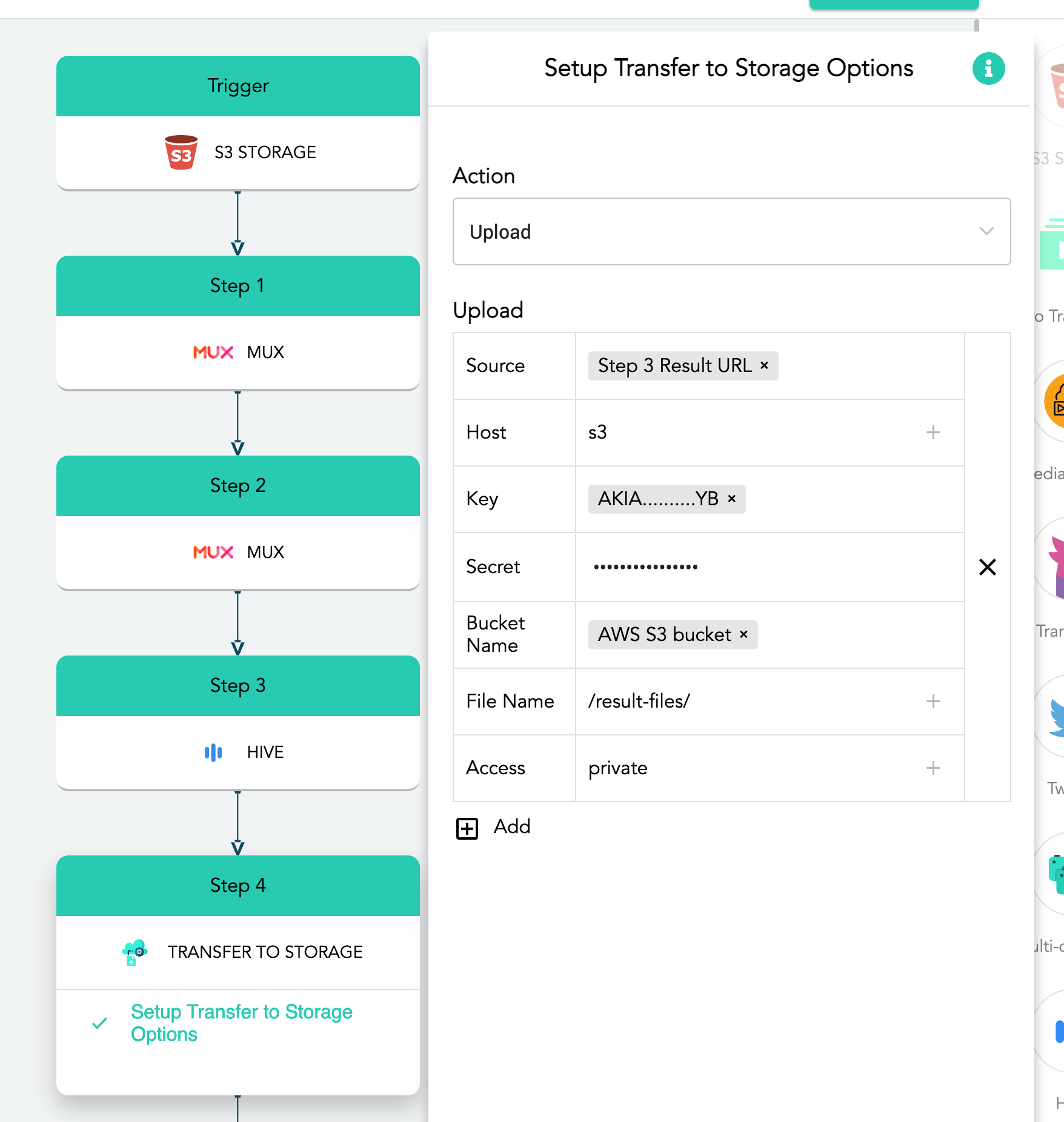

The results are sent back as JSON data to AVflow, where they need to be saved to your AWS S3 bucket with the “Transfer to Storage” step.

Step 5 of the Flow

AVflow has a nifty feature called Functions, which runs code as a step in a Flow. AVflow provides a sample script that parses the JSON results from Hive and categorizes a video as safe or not safe based on a threshold you set. A threshold of 0.9 generally works well: if Hive scores any class you asked it to scan at a probability of 90% or greater, the video is considered not safe. You may choose to lower the threshold if your platform has a low risk tolerance and preventing false negatives matters more to you than the risk of blocking content that’s actually safe.

You’ll add this function as a step in your Flow, like this:

Note that you can set your own probability threshold in the threshold field of the “Input data” section.

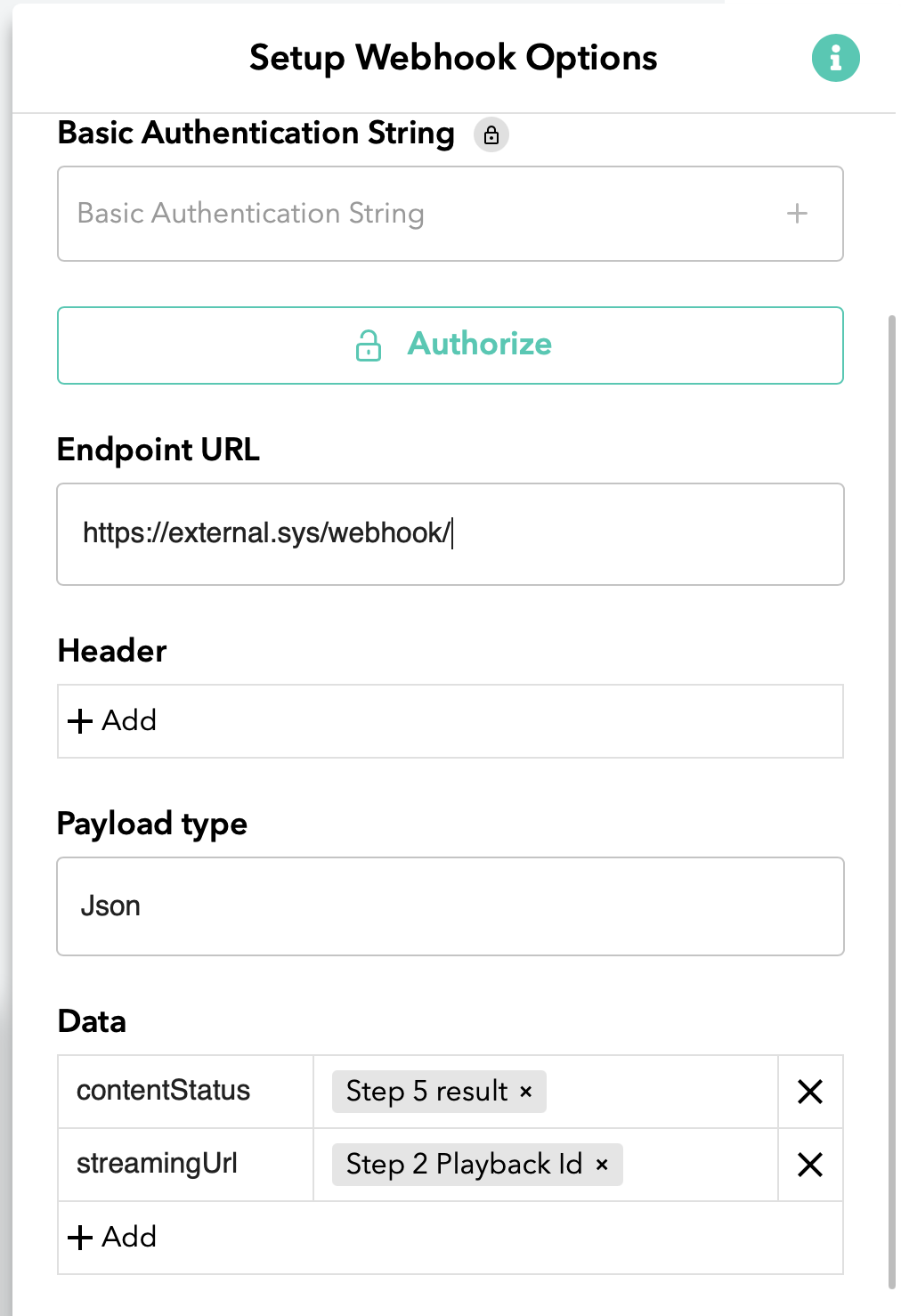
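The safe/not safe check described in Step 5 can be sketched in a few lines. This is not AVflow's sample script. It is a minimal illustration that assumes a payload shaped as a list of sampled frames, each with per-class scores. The class names, the payload layout, and the optional per-class overrides are all assumptions, so compare them against the JSON Hive actually returns for your account.

```python
def classify_video(hive_result, flagged_classes, threshold=0.9, overrides=None):
    """Return "not safe" if any sampled frame scores at or above the threshold
    for a flagged class, otherwise "safe"."""
    overrides = overrides or {}
    for status in hive_result.get("status", []):
        for frame in status.get("response", {}).get("output", []):
            for entry in frame.get("classes", []):
                name = entry["class"]
                if name not in flagged_classes:
                    continue  # ignore classes you did not ask to scan for
                if entry["score"] >= overrides.get(name, threshold):
                    return "not safe"
    return "safe"


# Hypothetical, trimmed-down payload: two sampled frames with per-class scores.
sample_result = {
    "status": [{
        "response": {
            "output": [
                {"time": 0.0, "classes": [
                    {"class": "general_nsfw", "score": 0.02},
                    {"class": "general_suggestive", "score": 0.41},
                ]},
                {"time": 1.0, "classes": [
                    {"class": "general_nsfw", "score": 0.03},
                    {"class": "general_suggestive", "score": 0.93},
                ]},
            ]
        }
    }]
}

FLAGGED = {"general_nsfw", "general_suggestive"}
verdict = classify_video(sample_result, FLAGGED)  # uses the default 0.9 threshold
```

Because one frame at or above the threshold is enough to flag the whole video, lowering the threshold trades more false positives for fewer missed violations.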

Taking action

You have a variety of options for handling content that falls into your not safe category. Maybe you want to queue it for a human moderator to review, unpublish it in your content management system, ban the contributor from your app, or even delete the Mux playback ID. To accomplish whatever action you want to take, you can add a webhook as a final step that will send the binary output of the function — safe or not safe — back to your application, which can then handle this event in any way you see fit.
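On the receiving end, your application only needs to map the verdict to an action. Here is a rough sketch of such a handler. The payload field names (`asset_id`, `verdict`) and the two actions are hypothetical, so adapt them to whatever your final webhook step actually sends.

```python
import json


def handle_moderation_webhook(raw_body, actions):
    """Parse the webhook body and run the action registered for its verdict."""
    event = json.loads(raw_body)
    verdict = event["verdict"]  # "safe" or "not safe" (assumed field name)
    action = actions.get(verdict)
    if action is None:
        raise ValueError(f"unexpected verdict: {verdict!r}")
    return action(event)


review_queue = []  # stand-in for a real moderation queue


def publish(event):
    return f"published {event['asset_id']}"


def queue_for_review(event):
    review_queue.append(event["asset_id"])
    return f"queued {event['asset_id']} for human review"


ACTIONS = {"safe": publish, "not safe": queue_for_review}

result = handle_moderation_webhook(
    json.dumps({"asset_id": "asset-123", "verdict": "not safe"}), ACTIONS
)
```

Swapping `queue_for_review` for a function that unpublishes the video or removes its playback ID changes the policy without touching the handler.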

Conclusion

If you are a Mux customer and you have a platform that allows user-generated content, we hope this post illustrates how, using AVflow and Hive, you can quickly add content moderation to your application in a few minutes without writing code. You can adjust the thresholds (in the AVflow script) per Hive category to best suit your needs and requirements. Although this post focuses on NSFW video analysis, you may wish to add OCR-based text analysis, or audio analysis too. Check out Hive’s Content Moderation Dashboard for an even more turnkey approach.

As your UGC platform grows, bad actors will get ever more creative in trying to circumvent your detection tools; inevitably, your solutions will need to evolve too. Hopefully our post will help you get started building a content moderation system quickly, easily, and flexibly; we can’t wait to see what you do with it! Want to learn more about how Mux and Hive work together to solve content moderation problems? Watch this online event with Mux’s Dylan Jhaveri and Hive’s Max Chang as they discuss the art of the possible along with pragmatic approaches to content moderation.